March 5, 2015 weblog

Facebook artificial intelligence team serves up 20 tasks

In August last year, Daniela Hernandez wrote in Wired about Yann LeCun, director of AI Research at Facebook. His interests include machine learning, audio, video, image, and text understanding, optimization, computer architecture and software for AI.

"The IEEE Computational Intelligence Society just gave him its prestigious Neural Network Pioneer Award, in honor of his work on deep learning," she wrote, "a form of artificial intelligence meant to more closely mimic the human brain. And, perhaps most of all, deep learning has suddenly spread across the commercial tech world, from Google to Microsoft to Baidu to Twitter, just a few years after most AI researchers openly scoffed at it." Hernandez wrote about their interest in convolutional neural networks, to build services that can automatically understand natural language and recognize images. In 2015, it is obvious that the keen interest in where to take AI continues, and an AI/ deep learning community is working to improve the technology. Facebook is taking on the challenge of turning its AI lab into a world-class research outfit.

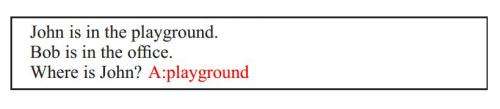

This week, Jacob Aron in New Scientist reported that researchers at Facebook's AI lab in New York believe a test of simple questions can help design machines that think like people. "Towards AI-Complete Question Answering: A Set of Prerequisite Toy Tasks" is a paper by a Facebook AI Research team: Jason Weston, Antoine Bordes, Sumit Chopra and Tomas Mikolov. The paper was posted on the arXiv server. The authors wrote, "One long-term goal of machine learning research is to produce methods that are applicable to reasoning and natural language, in particular building an intelligent dialogue agent. To measure progress towards that goal, we argue for the usefulness of a set of proxy tasks that evaluate reading comprehension via question answering."

In its coverage of the paper, New Scientist ran the crosshead "AI plays 20 questions," a reference to the 20 tasks Facebook created, which get progressively harder. "The team says any potential AI must pass all of them if it is ever to develop true intelligence."

The AI team wrote in their paper, "We developed a set of tasks that we believe are a prerequisite to full language understanding and reasoning, and presented some interesting models for solving some of them. While any learner that can solve these tasks is not necessarily close to solving AI, we believe if a learner fails on any of our tasks it exposes it is definitely not going to solve AI."

The tasks measure understanding in several ways, such as whether a system can answer questions by chaining facts, simple induction, deduction and more. "The tasks are designed to be prerequisites for any system that aims to be capable of conversing with a human."
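To give a feel for what "chaining facts" means, here is a minimal Python sketch of a toy question of that kind. It is an illustration only, not the paper's method or data format: the story sentences, the sentence patterns, and the function name `answer_where_is` are all assumptions made for this example, and the hand-written rules stand in for what a learning system would have to work out on its own.

```python
def answer_where_is(story, obj):
    """Answer 'Where is the <obj>?' by chaining two facts:
    who is holding the object, and where that person was last seen.

    Hypothetical helper: it only understands the four sentence
    patterns used in the toy story below.
    """
    location_of = {}  # person -> last known location
    holder_of = {}    # object -> person currently carrying it
    dropped_at = {}   # object -> location where it was put down

    for sentence in story:
        words = sentence.rstrip(".").split()
        person, last_word = words[0], words[-1]
        if "went to the" in sentence or "is in the" in sentence:
            location_of[person] = last_word
        elif "picked up the" in sentence:
            holder_of[last_word] = person
            dropped_at.pop(last_word, None)
        elif "dropped the" in sentence:
            holder_of.pop(last_word, None)
            dropped_at[last_word] = location_of.get(person)

    # Chain the facts: object -> holder -> holder's location.
    if obj in holder_of:
        return location_of.get(holder_of[obj])
    return dropped_at.get(obj)


story = [
    "John is in the playground.",
    "Bob is in the office.",
    "John picked up the football.",
    "Bob went to the kitchen.",
]
print(answer_where_is(story, "football"))  # playground
```

No single sentence states where the football is. The answer comes only from combining "John picked up the football" with "John is in the playground," while ignoring the distracting facts about Bob.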

New Scientist also commented that "Facebook is looking for more sophisticated ways to filter your news feed." Aron quoted LeCun, who said, "People have a limited amount of time to spend on Facebook, so we have to curate that somehow," and, he added, "For that you need to understand content and you need to understand people."

In the longer term, Facebook also wants to create a digital assistant that can handle a real dialogue with humans, said New Scientist.

More information: Towards AI-Complete Question Answering: A Set of Prerequisite Toy Tasks, arXiv:1502.05698 [cs.AI] arxiv.org/abs/1502.05698

Abstract

One long-term goal of machine learning research is to produce methods that are applicable to reasoning and natural language, in particular building an intelligent dialogue agent. To measure progress towards that goal, we argue for the usefulness of a set of proxy tasks that evaluate reading comprehension via question answering. Our tasks measure understanding in several ways: whether a system is able to answer questions via chaining facts, simple induction, deduction and many more. The tasks are designed to be prerequisites for any system that aims to be capable of conversing with a human. We believe many existing learning systems can currently not solve them, and hence our aim is to classify these tasks into skill sets, so that researchers can identify (and then rectify) the failings of their systems. We also extend and improve the recently introduced Memory Networks model, and show it is able to solve some, but not all, of the tasks.

© 2015 Tech Xplore