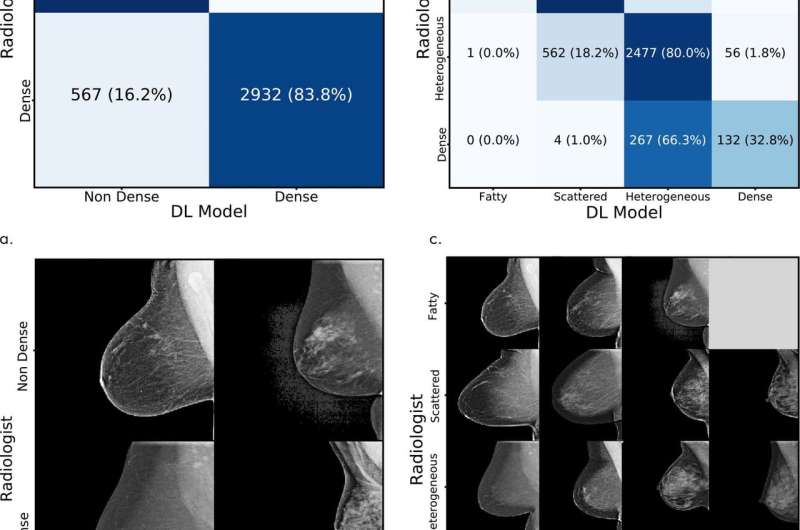

Test set assessment. Comparison of the original interpreting radiologist assessment with the deep learning (DL) model assessment for (a) binary and (c) four-way mammographic breast density classification. (b, d) Corresponding examples of mammograms with concordant and discordant assessments by the radiologist and with the DL model. Credit: Radiological Society of North America

Researchers from MIT and Massachusetts General Hospital have developed an automated model that assesses dense breast tissue in mammograms as reliably as expert radiologists. Dense breast tissue is an independent risk factor for breast cancer.

This marks the first time a deep-learning model of its kind has successfully been used in a clinic on real patients, according to the researchers. With broad implementation, the researchers hope the model can help bring greater reliability to breast density assessments across the nation.

It's estimated that more than 40 percent of U.S. women have dense breast tissue, which alone increases the risk of breast cancer. Moreover, dense tissue can mask cancers on the mammogram, making screening more difficult. As a result, 30 U.S. states mandate that women must be notified if their mammograms indicate they have dense breasts.

But breast density assessments rely on subjective human judgment. Due to many factors, results vary, sometimes dramatically, across radiologists. The MIT and MGH researchers trained a deep-learning model on tens of thousands of high-quality digital mammograms to learn to distinguish different types of breast tissue, from fatty to extremely dense, based on expert assessments. Given a new mammogram, the model can then predict a density rating that closely aligns with expert opinion.

"Breast density is an independent risk factor that drives how we communicate with women about their cancer risk. Our motivation was to create an accurate and consistent tool, that can be shared and used across health care systems," says second author Adam Yala, a Ph.D. student in MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL).

The other co-authors are first author Constance Lehman, professor of radiology at Harvard Medical School and the director of breast imaging at the MGH; and senior author Regina Barzilay, the Delta Electronics Professor at CSAIL and the Department of Electrical Engineering and Computer Science at MIT.

Mapping density

The model is built on a convolutional neural network (CNN), an architecture widely used for computer vision tasks. The researchers trained and tested their model on a dataset of more than 58,000 randomly selected mammograms from more than 39,000 women screened between 2009 and 2011. For training, they used around 41,000 mammograms and, for testing, about 8,600 mammograms.

Each mammogram in the dataset has a standard Breast Imaging Reporting and Data System (BI-RADS) breast density rating in one of four categories: fatty, scattered (scattered density), heterogeneous (mostly dense), and dense. In both training and testing mammograms, about 40 percent were assessed as heterogeneous or dense.

During the training process, the model is given random mammograms to analyze. It learns to map each mammogram to the expert radiologist's density rating. Dense breasts, for instance, contain glandular and fibrous connective tissue, which appear as compact networks of thick white lines and solid white patches. Fatty tissue networks appear much thinner, with gray area throughout. In testing, the model observes new mammograms and predicts the most likely density category.
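As a rough illustration of that last step, the sketch below shows how a classifier's raw scores for the four categories could be turned into a single predicted rating. It assumes a softmax over four outputs, which is a common design for CNN classifiers but is not described in the article; the function names and the example scores are hypothetical and are not output from the researchers' model.

```python
import math

# BI-RADS density categories as named in the article, least to most dense.
CATEGORIES = ["fatty", "scattered", "heterogeneous", "dense"]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict_category(scores):
    """Return the most probable category and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return CATEGORIES[best], probs[best]


# Hypothetical raw scores for one mammogram (illustrative only).
example_scores = [0.2, 1.1, 2.7, 0.4]
label, confidence = predict_category(example_scores)
print(label, round(confidence, 3))
```

Here the third score is the largest, so the sketch picks "heterogeneous" as the most likely category.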

Matching assessments

The model was implemented at the breast imaging division at MGH. In a traditional workflow, when a mammogram is taken, it's sent to a workstation for a radiologist to assess. The researchers' model is installed on a separate machine that intercepts the scans before they reach the radiologist and assigns each mammogram a density rating. When radiologists pull up a scan at their workstations, they'll see the model's assigned rating, which they then accept or reject.

"It takes less than a second per image ... [and it can be] easily and cheaply scaled throughout hospitals." Yala says.

On more than 10,000 mammograms at MGH from January to May of this year, the model achieved 94 percent agreement with the hospital's radiologists in a binary test that determined whether breasts were either heterogeneous and dense, or fatty and scattered. Across all four BI-RADS categories, it matched radiologists' assessments 90 percent of the time. "MGH is a top breast imaging center with high inter-radiologist agreement, and this high quality dataset enabled us to develop a strong model," Yala says.

In general testing using the original dataset, the model matched the original human expert interpretations 77 percent of the time across the four BI-RADS categories and, in binary tests, 87 percent of the time.
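The two kinds of agreement reported here, four-way and binary, can be sketched in a few lines. The example below groups heterogeneous and dense into one class and fatty and scattered into the other, as the article describes, then counts matching ratings. The ratings are made-up toy data, and the helper names are hypothetical; this is not the researchers' evaluation code.

```python
# Categories the article groups together as the "dense" side of the binary test.
DENSE = {"heterogeneous", "dense"}


def to_binary(category):
    """Collapse a four-way BI-RADS density rating into dense / non-dense."""
    return "dense" if category in DENSE else "non-dense"


def percent_agreement(ratings_a, ratings_b):
    """Percentage of cases where two lists of ratings give the same label."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("rating lists must be non-empty and the same length")
    matches = sum(a == b for a, b in zip(ratings_a, ratings_b))
    return 100.0 * matches / len(ratings_a)


# Toy ratings for six mammograms (illustrative only, not study data).
radiologist = ["fatty", "scattered", "heterogeneous", "dense",
               "scattered", "heterogeneous"]
model = ["fatty", "scattered", "dense", "dense",
         "heterogeneous", "heterogeneous"]

four_way = percent_agreement(radiologist, model)
binary = percent_agreement([to_binary(r) for r in radiologist],
                           [to_binary(m) for m in model])
print(f"four-way: {four_way:.1f}%  binary: {binary:.1f}%")
```

Binary agreement can never be lower than four-way agreement on the same cases, because a disagreement between heterogeneous and dense, for example, disappears once the two are merged. That is consistent with the binary figures in the article being higher than the four-way figures.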

To compare their model with traditional prediction models, the researchers used a metric called a kappa score, where 1 indicates that predictions agree every time and lower values indicate less agreement. Commercially available automatic density-assessment models reach kappa scores of at most about 0.6. In the clinical application, the researchers' model achieved a kappa score of 0.85 and, in testing, 0.67. By this measure, the model agrees with radiologists more closely than traditional models do.
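For readers unfamiliar with the metric, a kappa score compares observed agreement with the agreement two raters would reach by chance given how often each uses each label: kappa = (p_o - p_e) / (1 - p_e). The article does not say which kappa variant the researchers used, so the sketch below assumes plain (unweighted) Cohen's kappa; the function name and the ratings are hypothetical toy data.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa between two equal-length lists of labels."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("rating lists must be non-empty and the same length")
    n = len(ratings_a)

    # Observed agreement: fraction of cases where the labels match.
    p_observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Chance agreement: for each label, the probability that both raters
    # would pick it independently, based on how often each rater uses it.
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    labels = set(counts_a) | set(counts_b)
    p_chance = sum(counts_a[l] * counts_b[l] for l in labels) / (n * n)

    if p_chance == 1.0:
        # Both raters used one identical label for every case.
        return 1.0
    return (p_observed - p_chance) / (1.0 - p_chance)


# Toy binary ratings for four mammograms (illustrative only).
rater_a = ["dense", "dense", "non-dense", "non-dense"]
rater_b = ["dense", "non-dense", "non-dense", "non-dense"]
print(cohens_kappa(rater_a, rater_b))
```

In this toy case the raters agree on three of four mammograms (75 percent), but half of that agreement would be expected by chance, so kappa comes out to 0.5. This is why a kappa score is typically lower than the raw percent agreement for the same data.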

In an additional experiment, the researchers tested the model's agreement with the consensus of five MGH radiologists on 500 random test mammograms. The radiologists assigned breast density to the mammograms without knowledge of the original assessment, their peers' assessments, or the model's assessments. In this experiment, the model achieved a kappa score of 0.78 with the radiologist consensus.

Next, the researchers aim to scale the model into other hospitals. "Building on this translational experience, we will explore how to transition machine-learning algorithms developed at MIT into clinic benefiting millions of patients," Barzilay says. "This is a charter of the new center at MIT—the Abdul Latif Jameel Clinic for Machine Learning in Health at MIT—that was recently launched. And we are excited about new opportunities opened up by this center."

More information: Constance D. Lehman et al, Mammographic Breast Density Assessment Using Deep Learning: Clinical Implementation, Radiology (2018). DOI: 10.1148/radiol.2018180694

Journal information: Radiology

Provided by Massachusetts Institute of Technology