March 29, 2019 feature

Building 3-D models of unknown objects as they are manipulated by robots

Researchers at Rutgers University have recently developed a probabilistic approach for building 3-D models of unknown objects while they are being manipulated by a robot. Their approach, outlined in a paper pre-published on arXiv, uses a physics engine to verify hypothesized geometries in simulations.

Most primates naturally learn to manipulate a variety of objects in their early years of life. Replicating this seemingly trivial capability in robots, however, has so far proved to be very challenging.

Past studies have tried to achieve this using a variety of manipulation algorithms, which typically require knowledge of the geometric models associated with the objects that the robot will be manipulating. These algorithms can work well when the objects the robot encounters are known in advance, yet they often fail when the objects are unknown.

"We specifically consider manipulation tasks in piles of clutter that contain previously unseen objects," the researchers at Rutgers University wrote in their paper. "One of the novel aspects of this work is the utilization of a physics engine for verifying hypothesized geometries in simulation. The evidence provided by physics simulations is used in a probabilistic framework that accounts for the fact that mechanical properties of the objects are uncertain."

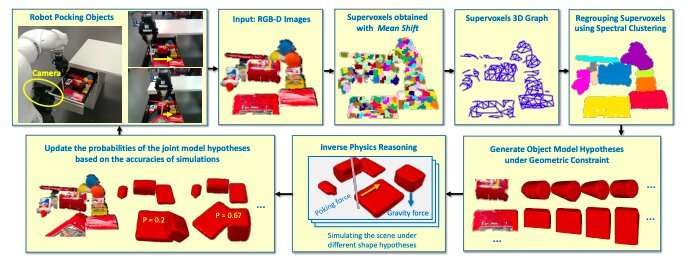

The integrated system developed by the researchers has three components: a robotic manipulator, a segmentation and clustering module, and an inverse physical reasoning unit. The robotic manipulator is designed to push or poke objects in a pile of clutter, while the segmentation and clustering module detects objects in RGB-D images.

Finally, the inverse physical reasoning unit, which is the distinctive feature of their approach, infers missing parts of objects by replaying the robot's actions in simulation. Essentially, the unit tests multiple hypothesized shapes in simulation and assigns higher probabilities to those whose simulated outcomes best match the observed RGB-D images.
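To make the idea of weighting hypotheses concrete, the reweighting step can be sketched in a few lines of Python. This is only an illustration, not the researchers' implementation: the function name `update_shape_beliefs`, the Gaussian noise model, the `sigma` value and the toy numbers are all assumptions, and the physics simulation is replaced by precomputed predicted positions.

```python
import math


def update_shape_beliefs(priors, predicted, observed, sigma=0.01):
    """Reweight shape hypotheses by how well each simulated outcome
    matches the observation (a simple Bayesian update).

    priors    -- dict: hypothesis name -> prior probability
    predicted -- dict: hypothesis name -> simulated (x, y) position after a push
    observed  -- (x, y) position actually seen by the camera
    sigma     -- assumed observation noise in meters (hypothetical value)
    """
    weights = {}
    for name, prior in priors.items():
        # Squared distance between the simulated and the observed position.
        err2 = sum((p - o) ** 2 for p, o in zip(predicted[name], observed))
        # Gaussian likelihood: an assumed noise model, not taken from the paper.
        weights[name] = prior * math.exp(-err2 / (2.0 * sigma ** 2))

    total = sum(weights.values())
    if total == 0.0:
        # No hypothesis explains the observation; keep the prior unchanged.
        return dict(priors)
    return {name: w / total for name, w in weights.items()}


# Toy example: three candidate shapes for one partially occluded object.
priors = {"box": 1 / 3, "cylinder": 1 / 3, "wedge": 1 / 3}
# Where a simulator might predict each shape ends up after the same push
# (made-up numbers standing in for physics-engine output).
predicted = {
    "box": (0.10, 0.00),
    "cylinder": (0.14, 0.02),
    "wedge": (0.07, -0.03),
}
observed = (0.11, 0.00)

posterior = update_shape_beliefs(priors, predicted, observed)
best = max(posterior, key=posterior.get)
print(best, posterior)
```

In this toy setup, the hypothesis whose simulated position lies closest to the observed one receives most of the probability mass. In a real system the predicted outcomes would come from a physics engine replaying the robot's push, and the comparison would be made against RGB-D data rather than a single 2-D position.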

The researchers developed an inverse physics reasoning (IPR) algorithm that can infer occluded parts of objects based on their observed motions and mutual interactions. To train and evaluate their system, they used two datasets: the Voxlets dataset and a new dataset created using YCB benchmark objects. The Voxlets dataset contains static images of tabletop objects, while the new dataset they compiled includes denser piles of objects.

The team evaluated the new approach in a series of experiments using a Kuka robotic arm mounted on a Clearpath mobile platform and equipped with a Robotiq hand and a depth-sensing camera. In these tests, the robot was presented with unknown objects in different scenarios. The results were promising, with the IPR algorithm inferring object shapes more accurately than the alternative approaches it was compared against.

"Experiments using a robot show that this approach is efficient for constructing physically realistic 3-D models, which can be useful for manipulation planning," the researchers wrote. "Experiments also show that the proposed approach significantly outperforms alternative approaches in terms of shape accuracy."

The new probabilistic approach presented by the researchers could help to enhance the performance of robots in manipulation tasks. In their future work, they plan to develop their approach further, so that it can infer 3-D and mechanical models simultaneously.

More information: Inferring 3D shapes of unknown rigid objects in clutter through inverse physics reasoning. arXiv:1903.05749 [cs.RO]. arxiv.org/abs/1903.05749

© 2019 Science X Network