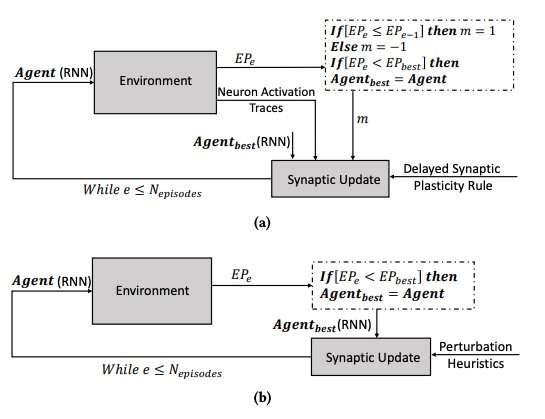

(a) The learning process using the delayed synaptic plasticity, and (b) the learning process by optimizing the parameters of the RNNs using the hill climbing algorithm. Credit: Yaman et al.

The human brain continuously changes over time, forming new synaptic connections based on experiences and information learned over a lifetime. Over the past few years, artificial intelligence (AI) researchers have been trying to reproduce this fascinating capability, known as 'plasticity,' in artificial neural networks (ANNs).

Researchers at Eindhoven University of Technology (TU/e) and the University of Trento have recently proposed a new approach inspired by biological mechanisms that could improve learning in ANNs. Their study, outlined in a paper pre-published on arXiv, was funded by the European Union's Horizon 2020 research and innovation program.

"One of the fascinating properties of biological neural networks (BNNs) is their plasticity, which allows them to learn by changing their configuration based on experience," Anil Yaman, one of the researchers who carried out the study, told TechXplore. "According to the current physiological understanding, these changes are performed on individual synapses based on the local interactions of neurons. However, the emergence of a coherent global learning behavior from these individual interactions is not very well understood."

Inspired by the plasticity of BNNs and its evolutionary process, Yaman and his colleagues wanted to mimic biologically plausible learning mechanisms in artificial systems. To model plasticity in ANNs, researchers typically use Hebbian learning rules, which update synapses based on neural activations and reinforcement signals received from the environment.
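As a rough illustration of what such a rule can look like, the following minimal Python sketch updates a single synapse from the activations of its two neurons and a reinforcement signal. The product form, the `learning_rate` value and the function name `hebbian_update` are illustrative assumptions, not the rules used in the study.

```python
def hebbian_update(weight, pre, post, reward, learning_rate=0.1):
    """Return the new weight of one synapse (illustrative rule, not the paper's).

    pre, post: activations of the presynaptic and postsynaptic neurons.
    reward:    reinforcement signal from the environment
               (+1 reward, -1 punishment, 0 no feedback).
    """
    # The change is proportional to the co-activation of the two neurons,
    # and its sign is set by the reinforcement signal.
    return weight + learning_rate * reward * pre * post


w = 0.5
w = hebbian_update(w, pre=1.0, post=1.0, reward=+1)  # co-active and rewarded: strengthened
w = hebbian_update(w, pre=1.0, post=1.0, reward=-1)  # co-active and punished: weakened
w = hebbian_update(w, pre=0.0, post=1.0, reward=+1)  # presynaptic neuron silent: unchanged
```

Note that this kind of update needs the reinforcement signal at the same moment as the activations it refers to.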

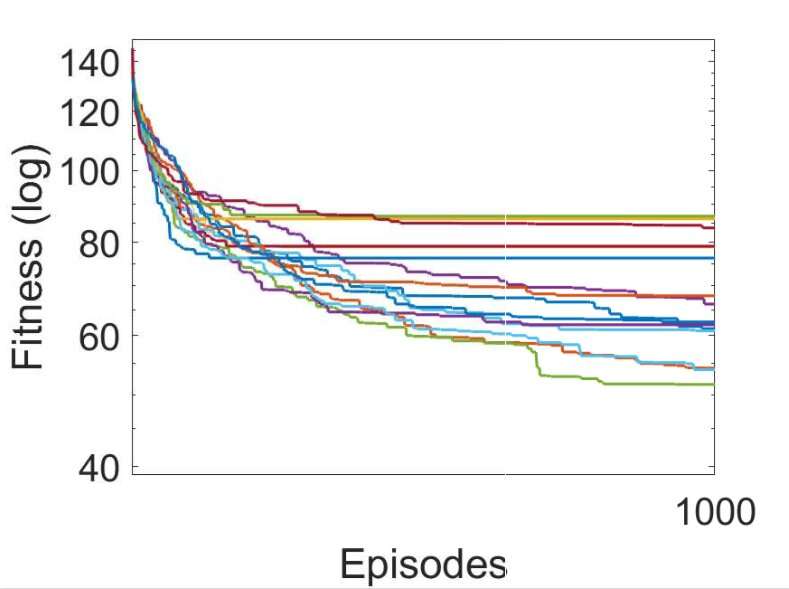

Several independent runs of the learning processes by using various evolved delayed synaptic plasticity rules (the best DSP rule is shown in green). Credit: Yaman et al.

When reinforcement signals are not available immediately after each network output, however, it becomes harder for the network to associate the relevant neuron activations with the reinforcement signal. To overcome this issue, known as the 'distal reward problem,' the researchers extended Hebbian plasticity rules so that they enable learning in distal reward cases. Their approach, called delayed synaptic plasticity (DSP), uses neuron activation traces (NATs) to provide additional storage in each synapse and to keep track of neuron activations while the network performs a task.

"Synaptic plasticity rules are based on the local activations of neurons and a reinforcement signal," Yaman explained. "However, in most learning problems, the reinforcement signals are received after a certain period of time rather than immediately after each action of the network. In this case, it becomes problematic to associate the reinforcement signals with the activations of neurons. In this work, we proposed using what we called 'neuron activation traces,' to store the statistics of neuron activations in each synapse and inform the synaptic plasticity rules on how to perform delayed synaptic changes."
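The idea of storing activation statistics in each synapse and applying the change only once the reward arrives can be sketched as follows. This is a minimal illustration, not the researchers' implementation: it assumes binary activations, a trace that simply counts how often each (pre, post) combination occurred, and a hand-written `RULE` table. The class name `Synapse`, the table and the learning rate are all hypothetical.

```python
from collections import Counter


class Synapse:
    """One synapse with a hypothetical neuron activation trace (NAT)."""

    def __init__(self, weight=0.0):
        self.weight = weight
        # Assumed trace format: counts of each (pre, post) activation pair.
        self.trace = Counter()

    def observe(self, pre, post):
        """Record one time step of binary pre/post activations."""
        self.trace[(pre, post)] += 1

    def delayed_update(self, reward, rule, learning_rate=0.1):
        """Apply a single weight change when the delayed reward arrives."""
        if not self.trace:
            return
        # Use the most frequent activation pair of the episode as the statistic.
        dominant_state, _count = self.trace.most_common(1)[0]
        self.weight += learning_rate * reward * rule[dominant_state]
        self.trace.clear()  # start a fresh trace for the next episode


# Hand-written rule table (an assumption for illustration only):
# direction of change for each dominant (pre, post) pair.
RULE = {(0, 0): 0, (0, 1): -1, (1, 0): -1, (1, 1): +1}

syn = Synapse(weight=0.5)
for pre, post in [(1, 1), (1, 1), (0, 1), (1, 1)]:  # activations during an episode
    syn.observe(pre, post)
syn.delayed_update(reward=+1, rule=RULE)  # reward only arrives at the end
print(round(syn.weight, 2))  # 0.6
```

The point of the sketch is that no weight changes while the agent acts. The trace carries the information forward until the reinforcement signal is available.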

One of the most meaningful aspects of the approach devised by Yaman and his colleagues is that it does not assume global information about the problem that the neural network will be solving. Furthermore, it does not depend on the specific ANN architecture and it is thus highly generalizable.

"In practical terms, our study can lay the foundation for novel learning schemes that can be used in a number of neural network applications, such as robotics and autonomous vehicles, and in general in all cases where an agent has to perform adaptive behavior in absence of an immediate reward obtained from its actions," Giovanni Iacca, another researcher involved in the study, told TechXplore. "For example, in AI for videogaming, an action at the current time-step might not necessarily lead to a reward right now, but only after some time; an agent showing personalized advertisements might get a 'reward' from the user behavior only after some time, etc."

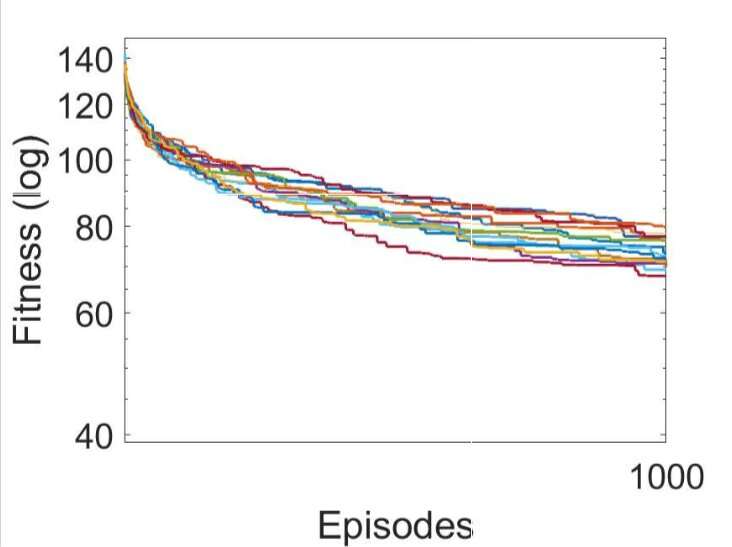

Several independent runs of the learning processes by optimizing the parameters of the RNNs using the hill climbing algorithm. Credit: Yaman et al.

The researchers tested their newly adapted Hebbian plasticity rules in a simulation of a triple T-maze environment. In this environment, an agent controlled by a simple recurrent neural network (RNN) needs to learn to find one among eight possible goal positions, starting from a random network configuration.

Yaman, Iacca and their colleagues compared the performance achieved using their approach with that attained when an agent was trained using an analogous iterative local search algorithm, called hill climbing (HC). The key difference between the HC algorithm and their approach is that the former does not use any domain knowledge (i.e., local activations of neurons), while the latter does.
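For readers unfamiliar with hill climbing, the sketch below shows a generic version of the technique: perturb the current parameters at random and keep the change only if performance does not get worse. The toy fitness function stands in for the maze task, and the step count, noise level `sigma` and all names are assumptions chosen for illustration rather than the settings used in the study.

```python
import random


def hill_climb(fitness, params, steps=200, sigma=0.1, seed=0):
    """Generic hill climbing over a list of real-valued parameters."""
    rng = random.Random(seed)
    best = list(params)
    best_fit = fitness(best)
    for _ in range(steps):
        # Perturb every parameter with Gaussian noise; no knowledge of
        # neuron activations is used, only the overall fitness score.
        candidate = [p + rng.gauss(0.0, sigma) for p in best]
        cand_fit = fitness(candidate)
        if cand_fit >= best_fit:  # accept only non-worsening moves
            best, best_fit = candidate, cand_fit
    return best, best_fit


def toy_fitness(weights):
    """Stand-in for task performance: higher is better, maximum is 0."""
    target = [0.5, -0.3, 0.8]  # hypothetical 'ideal' weights
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


start = [0.0, 0.0, 0.0]
weights, score = hill_climb(toy_fitness, start)
```

Because the search treats the network as a black box, every candidate has to be evaluated on the whole task before it can be accepted or rejected.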

The results gathered by the researchers suggest that the synaptic updates performed by their DSP rules lead to more effective training and ultimately better performance than the HC algorithm. In the future, their approach could help to enhance long-term learning in ANNs, allowing artificial systems to effectively build new connections based on their experiences.

"We are mainly interested in understanding the emergent behavior and learning dynamics of artificial neural networks, and developing a coherent model to explain how synaptic plasticity occurs in different learning scenarios," Yaman said. "I think there are vast possibilities for future research in this area, for instance it will be interesting to scale the proposed approach to large-scale complex problems (as well as deep networks) and achieve biologically inspired learning mechanisms that require the least amount of supervision (or none at all)."

More information: Learning with delayed synaptic plasticity. arXiv:1903.09393 [cs.NE]. arxiv.org/abs/1903.09393

© 2019 Science X Network