Credit: University of Bristol

In a new twist on human-robot research, computer scientists at the University of Bristol have developed a handheld robot that first predicts what users plan to do and then frustrates them by rebelling against those plans, thereby demonstrating an understanding of human intention.

In an increasingly technological world, cooperation between humans and machines is an essential aspect of automation. This new research shows that frustrating people on purpose is part of the process of developing robots that cooperate better with users.

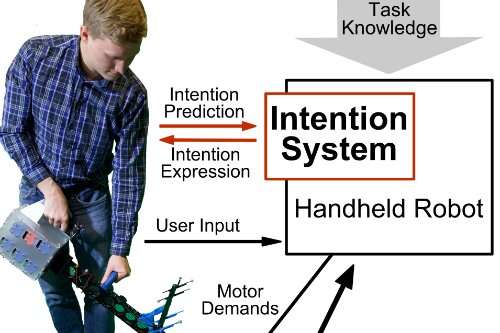

The team at Bristol have developed intelligent, handheld robots which complete tasks in collaboration with the user. Unlike conventional power tools, which know nothing about the tasks they perform and are fully under the control of users, the handheld robot holds knowledge about the task and can help through guidance, fine-tuned motion and decisions about task sequences.

While this helps users complete tasks more quickly and more accurately, they can get irritated when the robot's decisions are not in line with their own plans.

The latest research in this space by Ph.D. candidate Janis Stolzenwald and Professor Walterio Mayol-Cuevas, from the University of Bristol's Department of Computer Science, explores the use of intelligent tools that can bias their decisions in response to the intention of users.

This research is a new and interesting twist on human-robot research as it aims to first predict what users want and then go against these plans.

Professor Mayol-Cuevas said: "If you are frustrated with a machine that is meant to help you, this is easier to identify and measure than the often elusive signals of human-robot cooperation. If the user is frustrated when we instruct the robot to rebel against their plans, we know the robot understood what they wanted to do."

"Just as short-term predictions of each other's actions are essential to successful human teamwork, our research shows integrating this capability in cooperative robotic systems is essential to successful human-machine cooperation."

For the study, researchers used a prototype that can track the user's eye gaze and derive short-term predictions about intended actions through machine learning. This knowledge is then used as a basis for the robot's decisions such as where to move next.
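The idea of turning gaze data into a short-term prediction can be illustrated with a minimal sketch. It is not the researchers' model: the study used machine learning, whereas this example swaps in a simple voting heuristic that picks the object closest to the most gaze samples. The function name `predict_target`, the 2D coordinates and the object names are all hypothetical.

```python
from collections import Counter
from math import hypot


def predict_target(gaze_points, objects):
    """Guess the intended object from recent gaze samples.

    Assumption (not from the study): each gaze sample votes for the
    nearest object, and the object with the most votes is the prediction.
    gaze_points: list of (x, y) gaze samples.
    objects: dict mapping a hypothetical object name to its (x, y) position.
    """
    if not gaze_points or not objects:
        return None  # nothing to base a prediction on

    votes = Counter()
    for gx, gy in gaze_points:
        # Find the object closest to this gaze sample.
        nearest = min(
            objects,
            key=lambda name: hypot(gx - objects[name][0], gy - objects[name][1]),
        )
        votes[nearest] += 1

    # The object that attracted the most gaze samples wins.
    return votes.most_common(1)[0][0]


# Hypothetical usage: two objects, with gaze mostly near "B".
prediction = predict_target([(1, 0), (9, 1), (8, 0)], {"A": (0, 0), "B": (10, 0)})
```

A learned model would replace the voting step, but the interface stays the same: gaze samples go in, and a predicted target comes out for the robot to act on.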

The Bristol team trained the robot in the study using a set of over 900 training examples from a pick-and-place task carried out by participants.

Core to this research is the assessment of the intention-prediction model. The researchers tested the robot under two conditions, obedience and rebellion, in which it was programmed to either follow or disobey the predicted intention of the user. Knowing the user's aims is what gave the robot the power to rebel against their decisions. The difference in frustration responses between the two conditions therefore served as evidence for the accuracy of the robot's predictions, validating the intention-prediction model.
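The two conditions can be sketched as a small decision rule. This is only an illustration under stated assumptions, not the study's implementation: the function name `choose_robot_target`, the mode strings and the rule of picking the first non-predicted candidate in rebellion mode are all hypothetical.

```python
def choose_robot_target(predicted, candidates, mode):
    """Pick where the robot goes next, given the user's predicted target.

    Assumptions (not from the study): targets are plain labels, and in
    rebellion mode the robot simply takes the first candidate that is
    not the predicted one.
    """
    if mode == "obedience":
        # Follow the user's predicted intention.
        return predicted
    if mode == "rebellion":
        # Deliberately avoid the target the user is predicted to want.
        others = [c for c in candidates if c != predicted]
        if not others:
            raise ValueError("rebellion needs at least one alternative target")
        return others[0]
    raise ValueError(f"unknown mode: {mode!r}")


# Hypothetical usage with three candidate targets.
obedient_choice = choose_robot_target("A", ["A", "B", "C"], "obedience")
rebellious_choice = choose_robot_target("A", ["A", "B", "C"], "rebellion")
```

The sketch makes the logic of the experiment visible: rebellion only frustrates the user if `predicted` really matches what they wanted, which is why the frustration gap between the two modes reflects prediction accuracy.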

Janis Stolzenwald, a Ph.D. student sponsored by the German Academic Scholarship Foundation and the UK's EPSRC, conducted the user experiments and identified new challenges for the future. He said: "We found that the intention model is more effective when the gaze data is combined with task knowledge. This raises a new research question: how can the robot retrieve this knowledge? We can imagine learning from demonstration or involving another human in the task."

In preparation for this new challenge, the researchers are currently exploring shared control, interaction and new applications in their studies of remote collaboration through the handheld robot. A maintenance task serves as a user experiment, in which a handheld robot user receives assistance from an expert who remotely controls the robot.

The research builds on the handheld robot designed and built by former Ph.D. student Austin Gregg-Smith, which is available as an open-source design via the researchers' site at www.handheldrobotics.org.

More information: Janis Stolzenwald, et al. Rebellion and Obedience: The Effects of Intention Prediction in Cooperative Handheld Robots. arXiv:1903.08158v1 [cs.RO]: arxiv.org/abs/1903.08158

Provided by University of Bristol