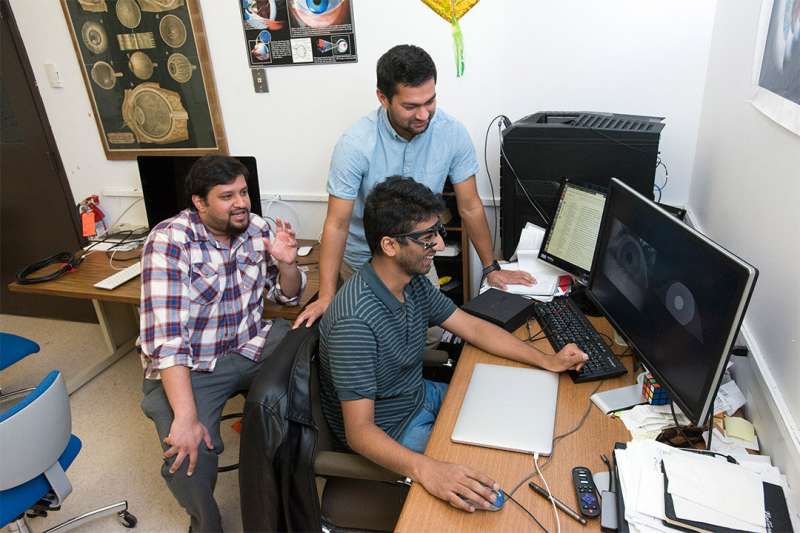

A team of RIT researchers won first place in Facebook Research’s OpenEDS Challenge, an international competition that pushed researchers to develop more effective eye-tracking solutions. Rakshit Kothari of India (left), Aayush Chaudhary of Nepal (front) and Manoj Acharya of Nepal (back) are Ph.D. students who spearheaded the project. Credit: A. Sue Weisler

A team of Rochester Institute of Technology researchers took the top prize in an international competition held by Facebook Research to develop more effective eye-tracking solutions. The team, led by three Ph.D. students from the Chester F. Carlson Center for Imaging Science, won first place in the OpenEDS Challenge focused on semantic segmentation.

Virtual reality products such as Oculus VR rely on eye tracking to create immersive experiences. Eye tracking must be precise and accurate, and it must work all the time, for every person, in any environment.

"Currently eye tracking is done by detecting the pupil and fitting a two- or three-dimensional model onto the data," said Aayush Chaudhary, an imaging science Ph.D. student from Nepal and one of the lead authors of the prize-winning paper. "The overall objective of the OpenEDS Challenge was to separate the iris, pupil and sclera in images of the eye so that 2-D and 3-D models can be fit with a compact model."

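To make the idea in Chaudhary's description concrete, here is a minimal sketch of what fitting a simple 2-D model to a segmented eye image could look like. It is not the team's method: the label numbering, the `fit_circle_to_label` helper and the tiny hand-made mask are all assumptions made for illustration, and a plain circle stands in for the richer 2-D and 3-D models used in practice.

```python
import math

# Hypothetical label convention for this sketch (not taken from the challenge).
BACKGROUND, SCLERA, IRIS, PUPIL = 0, 1, 2, 3


def fit_circle_to_label(mask, label):
    """Fit a circle to all pixels carrying `label`, using centroid and area.

    Returns (center_x, center_y, radius), or None if the label is absent.
    """
    xs, ys = [], []
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value == label:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    area = len(xs)
    center_x = sum(xs) / area
    center_y = sum(ys) / area
    # A disc covering `area` pixels has radius sqrt(area / pi).
    radius = math.sqrt(area / math.pi)
    return center_x, center_y, radius


# Tiny hand-made 7x7 segmentation mask, for illustration only.
mask = [
    [0, 0, 1, 1, 1, 0, 0],
    [0, 1, 2, 2, 2, 1, 0],
    [1, 2, 2, 3, 2, 2, 1],
    [1, 2, 3, 3, 3, 2, 1],
    [1, 2, 2, 3, 2, 2, 1],
    [0, 1, 2, 2, 2, 1, 0],
    [0, 0, 1, 1, 1, 0, 0],
]

pupil = fit_circle_to_label(mask, PUPIL)
print(pupil)
```

In this toy setup, a segmentation model would supply the mask, and the pupil center and radius would then come from a few sums over its pixels. That is one reason a clean separation of pupil, iris and sclera can make the later model-fitting step cheap.
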
To help expand the applications where eye tracking can be used, Facebook Research issued the challenge to create robust computer models that can work in real time. The winning solution had to be accurate, robust and extremely power efficient.

"When we talk about doing eye tracking on a device such as your cellphone, you need models that are small, that can run in real-time," said Rakshit Kothari, an imaging science Ph.D. student from India. "You need to be able to just take out your cellphone and have it work right away, without much computation."

With support from their advisors, three imaging science Ph.D. students (Kothari, Chaudhary, and Manoj Acharya of Nepal) devoted a month of their research time to developing the solution. They ran countless models over that span, continually refining their approach, and pulled ahead in the challenge on the final day of competition. Along the way, they overcame several unforeseen obstacles to build a practical model.

"There are conditions that we never anticipated that can come up in these images, which our model had to intelligently take care of," said Acharya. "As an example, if you were teaching this model how to find these features using images where people are not wearing any eye makeup, and then suddenly someone comes in wearing a lot of mascara, that could completely throw it off. You need a model to be smart and intelligent enough to work around that."

The interdisciplinary project required collaboration across laboratories and colleges at RIT. The three primary authors collaborated with co-authors including Nitinraj Nair, a computer science graduate student from India, and Sushil Dangi, an imaging science Ph.D. student from Nepal. They worked closely with their advisors: Frederick Wiedman Professor Jeff Pelz, Assistant Professor Gabriel Diaz, and Assistant Professor Christopher Kanan from the College of Science, and Professor Reynold Bailey from the Golisano College of Computing and Information Sciences.

The researchers work in four RIT labs: the Multidisciplinary Vision Research Laboratory (MVRL), the PerForM (Perception for Movement) Lab, the Machine and Neuromorphic Perception Laboratory (kLab) and the Computer Graphics and Applied Perception Lab.

Team members will present their solution at the 2019 OpenEDS Workshop: Eye Tracking for VR and AR, held at the International Conference on Computer Vision (ICCV) in Seoul, Korea, on Nov. 2.

The team won $5,000 and will donate the prize money to the newly established Willem "Bill" Brouwer Endowed Fellowship to support graduate student research in the Chester F. Carlson Center for Imaging Science.

More information is available on the Facebook Research OpenEDS Challenge website.

Provided by Rochester Institute of Technology