Floating butterfly (landscape) created by the Multimodal Acoustic Trap Display developed at the University of Sussex. Credit: Eimontas Jankauskis

Academics at the University of Sussex have come the closest yet to recreating one of the most iconic pieces of Star Wars technology, developing for the first time holograms that can be seen with the naked eye as well as heard and felt.

While it cannot yet transmit a 3-D distress call from Princess Leia, the Multimodal Acoustic Trap Display (MATD) can show a coloured butterfly gently flapping its wings in mid-air, as well as emojis and other images, all visible without the need for VR or AR headsets.

Lead author Dr. Ryuji Hirayama, a JSPS scholar and Rutherford Fellow at the University of Sussex, said: "Our new technology takes inspiration from old TVs which use a single colour beam scanning along the screen so quickly that your brain registers it as a single image. Our prototype does the same using a coloured particle that can move so quickly anywhere in 3-D space that the naked eye sees a volumetric image in mid-air."

The MATD traps a particle with ultrasound and illuminates it with red, green and blue light to control its colour as it rapidly scans through open space, creating the illusion of volumetric content.

The prototype scans the content in less than 0.1 seconds, the time the eye takes to integrate separate light stimuli into a single shape.
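The timing constraint above can be turned into a rough speed requirement. The Python sketch below assumes that a single particle must trace the whole outline once within the 0.1-second integration window; the shapes and sizes are hypothetical examples rather than figures from the study, and the simple average ignores the acceleration limits a real trajectory would also face.

```python
import math

# Integration window quoted in the article: light stimuli arriving within
# about 0.1 s are perceived as a single shape.
POV_WINDOW_S = 0.1


def min_average_speed(path_length_m: float, window_s: float = POV_WINDOW_S) -> float:
    """Minimum average speed (m/s) for one particle to trace a path of the
    given length once within the integration window."""
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    return path_length_m / window_s


def circle_length(radius_m: float) -> float:
    """Perimeter of a circular outline (a hypothetical test shape)."""
    return 2 * math.pi * radius_m


# Hypothetical shapes; the sizes are illustrative, not taken from the study.
shapes = {
    "circle, radius 1 cm": circle_length(0.01),
    "square, side 2 cm": 4 * 0.02,
}
for name, length in shapes.items():
    print(f"{name}: path {length * 100:.1f} cm, "
          f"needs at least {min_average_speed(length):.2f} m/s")
```

Larger or more intricate outlines scale the required speed linearly with path length, which is why faster particle movement translates directly into bigger or more detailed images.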

Two-minute explainer video of the Multimodal Acoustic Trap Display developed at the University of Sussex. Credit: Eimontas Jankauskis

As well as visual content, the prototype developed by a team at the University of Sussex's School of Engineering and Informatics can also blast out a chorus of Queen or create a tactile button in mid-air through the use of ultrasound alone.

Dr. Diego Martinez Plasencia, co-creator of the MATD and a researcher on 3-D User Interfaces at the University of Sussex, said: "Even if not audible to us, ultrasound is still a mechanical wave and it carries energy through the air. Our prototype directs and focuses this energy, which can then stimulate your ears for audio, or stimulate your skin to feel content."
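Focusing ultrasound with an array of emitters is usually done by setting each emitter's phase so that all waves arrive at the focal point in step. The Python sketch below illustrates that generic phased-array idea, not the team's actual control software: the 16 x 16 grid, its 10.5 mm pitch, the focal point and the 343 m/s speed of sound are all assumptions chosen for illustration, and only the 40 kHz frequency comes from the article.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at about 20 °C (assumption)
FREQUENCY = 40_000.0    # Hz, the 40 kHz figure mentioned in the article
WAVENUMBER = 2 * math.pi * FREQUENCY / SPEED_OF_SOUND  # k = 2*pi*f / c


def grid(n, pitch_m, z_m):
    """Hypothetical flat n x n transducer grid centred on the z axis."""
    offset = (n - 1) * pitch_m / 2
    return [(i * pitch_m - offset, j * pitch_m - offset, z_m)
            for i in range(n) for j in range(n)]


def focusing_phases(transducers, focus):
    """Emission phase (radians, wrapped to [0, 2*pi)) for each transducer.

    Using a convention in which travelling a distance d adds phase k*d, a wave
    emitted with phase phi reaches the focus with phase phi + k*d. Choosing
    phi = -k*d gives every wave the same arrival phase, so they add
    constructively at the focus.
    """
    return [(-WAVENUMBER * math.dist(t, focus)) % (2 * math.pi)
            for t in transducers]


# Hypothetical 16 x 16 array with 10.5 mm pitch, focusing 10 cm above its centre.
array = grid(16, 0.0105, 0.0)
focus = (0.0, 0.0, 0.10)
phases = focusing_phases(array, focus)
print(f"{len(phases)} phases, first four: {[round(p, 3) for p in phases[:4]]}")
```

Holding a particle in place needs more than a simple focus: levitation traps are typically formed by adding a further phase pattern on top of the focusing phases, a step this sketch leaves out.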

The research team believe that the MATD system could become a valuable visualisation tool across a wide range of professions, including biomedicine, design and architecture.

Project leader Sri Subramanian, Professor of Informatics at the University of Sussex and a Royal Academy of Engineering Chair in Emerging Technologies, said: "Our MATD system revolutionizes the concept of 3-D display. It is not just that the content is visible to the naked eye and in all ways perceptually similar to a real object while still allowing the viewer to reach inside and interact with the display.

"It is also the fact that it relies on a principle that can also stimulate other senses, putting it above any other display approaches and getting us closer than ever to Ivan Sutherland's vision of the Ultimate Display."

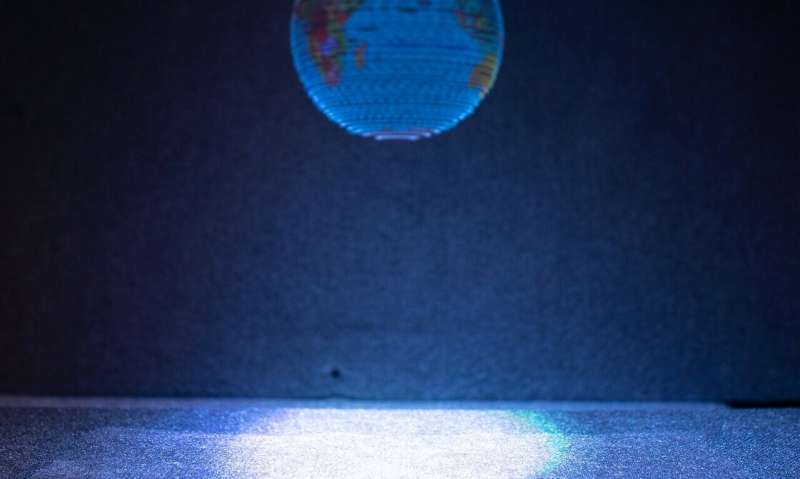

Globe created by the Multimodal Acoustic Trap Display developed at the University of Sussex. Credit: Eimontas Jankauskis

Unlike rival 3-D display technologies such as 3-D TVs, light-field displays or other volumetric displays, the MATD can create sensations beyond the visual, namely sound and touch.

The authors believe that its ability to manipulate matter without touching it could open up opportunities to mix chemicals without contaminating them, to use ultrasound levitation inside tissue to deliver life-saving drugs precisely, and to support numerous lab-on-a-chip applications.

Dr. Hirayama added: "The MATD was created using low-cost and commercially available components; we believe there is plenty of room to increase its capacity and potential.

"Operating at frequencies higher than 40KHz will allow the use of smaller particles, increasing the resolution and precision of the visual content, while frequencies above 80KHz will result in optimum audio quality.

"More powerful ultrasound speakers, more advanced control techniques or even the use of several particles, could allow for more complex, stronger tactile feedback and louder audio.

"So even though we have yet to match the Rebel Alliance's communications capability, our prototype has come the closest yet and opened up a host of other exciting opportunities in the process."

The study is published in Nature.

More information: Ryuji Hirayama et al. A volumetric display for visual, tactile and audio presentation using acoustic trapping, Nature (2019). DOI: 10.1038/s41586-019-1739-5


Provided by University of Sussex