November 12, 2019 feature

Uber develops technique to predict pedestrian behavior, while new documents are released about last year's accident

In years to come, self-driving vehicles could gradually become a popular means of transportation. Before this can happen, however, researchers will need to develop tools that ensure that these vehicles are safe and can efficiently navigate in human-populated environments.

Because self-driving vehicles must navigate around both static and moving obstacles, they need to detect objects quickly and avoid them. One way to achieve this is to develop models that predict the future behavior of objects or people on the street, estimating where they will be when the vehicle approaches them.

Predicting future changes in urban environments, however, can be very challenging. It is especially difficult when it comes to predicting human behavior, such as the movements or unexpected actions of pedestrians.

Last year, one of Uber's self-driving cars killed Elaine Herzberg, a 49-year-old woman, in Arizona. This accident, along with dozens of others, sparked much debate about the safety of self-driving vehicles, as well as about whether these vehicles should be tested in populated environments.

About a week ago, new documents released by the U.S. National Transportation Safety Board (NTSB) revealed that the Uber autonomous vehicle involved in last year's fatal crash did not identify Herzberg as a pedestrian until it was much too late. The same reports suggest that the vehicle's system was never trained to detect pedestrians outside of a crosswalk.

Herzberg was jaywalking at the time of the accident, so the software flaws revealed by the NTSB report would explain why Uber's self-driving vehicle failed to recognize her, which ultimately led to her death. The new analyses released by the NTSB could halt the company's self-driving vehicle program, which resumed testing in December 2018 after being on hold for several months.

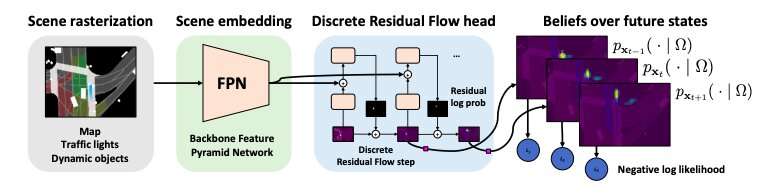

These new findings highlight the need to develop more advanced AI and more reliable software before self-driving vehicles can be tested on actual roads. Interestingly, a few days before the NTSB released these documents, researchers at Uber's Advanced Technologies Group, the University of Toronto and UC Berkeley posted a preprint on arXiv introducing a new technique for predicting pedestrian behavior, called the discrete residual flow network (DRF-NET). According to the researchers, this neural network can predict future pedestrian behavior while capturing the inherent uncertainty of forecasting long-range motion.

"Our learned network effectively captures multimodal posteriors over future human motion by predicting and updating a discretized distribution over spatial locations," the researchers wrote in their paper.
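To make the idea of "updating a discretized distribution" concrete, here is a minimal sketch in NumPy. It assumes that one update step adds a residual to the current log-probabilities over grid cells and then renormalizes; the grid size, the function names `log_normalize` and `residual_update`, and the hand-written residual are all hypothetical and are not taken from the paper. In a learned model the residual would be predicted by a neural network.

```python
import numpy as np

def log_normalize(logits: np.ndarray) -> np.ndarray:
    """Turn unnormalized log-scores over grid cells into log-probabilities
    of a categorical distribution (exp of the result sums to 1)."""
    m = logits.max()  # subtract the max before exponentiating, for stability
    return logits - (m + np.log(np.exp(logits - m).sum()))

def residual_update(log_probs: np.ndarray, residual: np.ndarray) -> np.ndarray:
    """One hypothetical update step: add a residual to the current
    log-probabilities and renormalize. In a learned model the residual
    would come from a network; here it is passed in by hand."""
    return log_normalize(log_probs + residual)

# 5x5 grid; the pedestrian is almost certainly in the centre cell (2, 2).
scores = np.full((5, 5), -10.0)
scores[2, 2] = 0.0
log_p0 = log_normalize(scores)

# Hand-written residual: move probability mass out of the centre and into
# two neighbouring cells, giving a two-mode (multimodal) belief.
residual = np.zeros((5, 5))
residual[2, 2] = -10.0
residual[2, 3] = 10.0
residual[3, 2] = 10.0

p1 = np.exp(residual_update(log_p0, residual))
print(p1.round(3))
```

After one step, cells (2, 3) and (3, 2) each hold roughly half of the probability mass, which illustrates how a distribution over discrete cells can represent several plausible futures at once rather than a single predicted point.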

The researchers represented their beliefs about pedestrians' future positions as categorical distributions over discretized space, which assign a probability to each spatial cell. They then used these distributions to plan and optimize paths for self-driving vehicles that take into account where pedestrians are expected to be.
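One simple way such per-cell probabilities could feed into path planning is sketched below. The cost used here, the probability that the pedestrian occupies the vehicle's planned cell at each time step summed over steps, is our own illustrative choice, not the planner described by the researchers. The grid, the forecast values and the names `expected_overlap` and `pick_path` are hypothetical.

```python
import numpy as np

def expected_overlap(path, occupancy):
    """Expected 'collision mass' of a candidate path: for each future time
    step t, the probability that the pedestrian is in the cell the vehicle
    plans to occupy at t, summed over t.

    path:      list of (row, col) grid cells, one per time step (hypothetical).
    occupancy: array of shape (T, H, W); occupancy[t] is a categorical
               distribution over grid cells for time step t.
    """
    return float(sum(occupancy[t][r, c] for t, (r, c) in enumerate(path)))

def pick_path(candidates, occupancy):
    """Return the candidate with the lowest expected overlap."""
    return min(candidates, key=lambda p: expected_overlap(p, occupancy))

# Hand-made forecast on a 3x4 grid over 3 steps: a pedestrian moving down column 1.
occupancy = np.zeros((3, 3, 4))
occupancy[0][0, 1] = 1.0
occupancy[1][1, 1], occupancy[1][0, 1] = 0.7, 0.3
occupancy[2][2, 1], occupancy[2][1, 1] = 0.6, 0.4

path_a = [(1, 0), (1, 1), (1, 2)]  # drive straight through without pausing
path_b = [(1, 0), (1, 0), (1, 1)]  # wait one step, then proceed

cost_a = expected_overlap(path_a, occupancy)  # 0.0 + 0.7 + 0.0
cost_b = expected_overlap(path_b, occupancy)  # 0.0 + 0.0 + 0.4
best = pick_path([path_a, path_b], occupancy)
```

Because the forecast is a full distribution rather than a single predicted position, the planner can weigh a less likely but still possible pedestrian location instead of ignoring it.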

First, the DRF-NET network introduced in the paper rasterizes road maps, converting them into images composed of discrete pixels. The past behavior of pedestrians is then encoded in a bird's-eye-view raster image that is aligned with a detailed semantic map.
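The rasterization step can be illustrated with a short sketch. It assumes a fixed metres-per-pixel resolution, a known world coordinate for pixel (0, 0), and a semantic map already stored as a stack of channels; each past pedestrian position then becomes one extra binary channel aligned with that map. The resolution, grid size, channel layout and function names are all hypothetical and are not drawn from the paper.

```python
import numpy as np

def world_to_pixel(x, y, origin, resolution):
    """Map metric coordinates (metres) to integer (row, col) indices of a
    bird's-eye-view grid. origin is the world coordinate of pixel (0, 0);
    resolution is metres per pixel (both hypothetical here)."""
    col = int(np.floor((x - origin[0]) / resolution))
    row = int(np.floor((y - origin[1]) / resolution))
    return row, col

def rasterize_history(history, semantic_map, origin, resolution):
    """Stack a semantic map with one binary channel per past time step, each
    marking the cell occupied by the pedestrian at that step.

    history:      list of (x, y) positions in metres, oldest first.
    semantic_map: array of shape (C, H, W), e.g. road / crosswalk / sidewalk masks.
    Returns an array of shape (C + len(history), H, W).
    """
    _, h, w = semantic_map.shape
    tracks = np.zeros((len(history), h, w), dtype=semantic_map.dtype)
    for t, (x, y) in enumerate(history):
        r, c = world_to_pixel(x, y, origin, resolution)
        if 0 <= r < h and 0 <= c < w:  # ignore positions outside the raster
            tracks[t, r, c] = 1
    return np.concatenate([semantic_map, tracks], axis=0)

# Three past positions on a 20x20 grid at 0.5 m per pixel, with an empty
# 3-channel placeholder standing in for the semantic map.
history = [(1.0, 2.0), (1.5, 2.0), (2.0, 2.1)]
semantic_map = np.zeros((3, 20, 20), dtype=np.float32)
raster = rasterize_history(history, semantic_map, origin=(0.0, 0.0), resolution=0.5)
```

Keeping the map and the pedestrian history in the same pixel grid is what lets a convolutional network relate a pedestrian's position to nearby features such as sidewalks or crosswalks.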

Subsequently, the network extracts features that are particularly useful for predicting the behavior of pedestrians from the rasterized images. Finally, the researchers trained their model to predict the future behavior of pedestrians on the road based on these features.

They trained and evaluated their neural network on a large-scale dataset they had previously compiled, which contains real-world recordings with object annotations and online detection-based tracks, collected in several North American cities. These recordings include pedestrian trajectories that the researchers manually annotated within a 360-degree, 120-meter-range view captured by an on-vehicle LiDAR sensor.

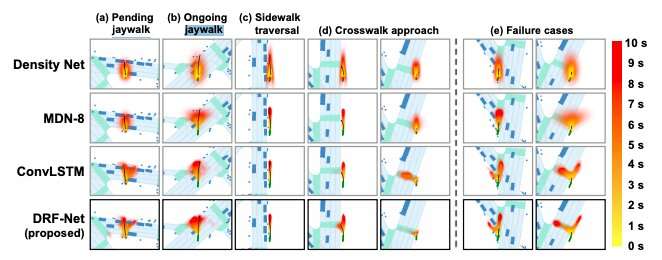

In the researchers' evaluations, DRF-NET performed well, outperforming several baseline methods for predicting pedestrian behavior. The method could therefore help improve the performance of Uber's self-driving vehicles, allowing them to anticipate pedestrians' movements and plan their paths accordingly.

"The strong performance of DRF-NET's discrete predictions is very promising for cost-based and constrained robotic planning," the researchers wrote.

Interestingly, the pedestrian behaviors processed and predicted by DRF-NET, shown in one of the paper's figures, include 'pending jaywalk,' 'ongoing jaywalk' and 'sidewalk traversal,' as well as crossing at a crosswalk. This seems somewhat ironic, given that the recent NTSB documents highlighted, among other things, the inability of Uber's self-driving vehicle to detect jaywalking pedestrians at the time of the Arizona crash.

Only time will tell whether the DRF-NET network or other techniques will actually be able to improve the ability of self-driving vehicles to detect pedestrians. For the time being, however, one thing seems clear: Significant advancements in AI and better techniques for detecting pedestrians will be necessary before self-driving vehicles can be safely put on the road.

More information: Discrete residual flow for probabilistic pedestrian behaviour prediction. arXiv: 1910.08041 [cs.CV]. arxiv.org/abs/1910.08041

© 2019 Science X Network