December 20, 2019 feature

An interactive drone to assist humans in office environments

Researchers at Karlsruhe Institute of Technology in Germany have recently developed an interactive drone designed to assist humans in indoor environments such as offices or laboratories. In a paper prepublished on arXiv, the researchers presented the results achieved by their drone when completing simple tasks in the laboratory.

"In this paper, we present an indoor office drone assistant that is tasked to run errands and carry out simple tasks at our laboratory, while given instructions from and interacting with humans in the space," the researchers wrote in their paper.

The researchers' approach to designing the drone is centered around the notion of "missions," each of which entails receiving input parameters and meeting success conditions, or "goals." To successfully complete a mission, their drone should be able to achieve all the goals associated with it.

"In the case of the system presented in this paper, the input parameter is a verbal request to fly to a certain destination (room or person) in an office environment," the researchers explained in their paper. "The goal of the mission is to reach the target without any manual intervention and collision with static or dynamic obstacles."
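The mission notion described above can be illustrated with a short Python sketch. This is not the researchers' code: the names `Mission`, `DroneState` and `make_flight_mission`, as well as the state fields, are hypothetical and chosen only to show how a mission could pair an input parameter with goals that must all be met.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class DroneState:
    """Hypothetical snapshot of the drone at the end of a flight."""
    location: str
    collisions: int = 0
    manual_interventions: int = 0


# A goal is a predicate over the final state of the drone.
Goal = Callable[[DroneState], bool]


@dataclass
class Mission:
    destination: str  # input parameter: a room or a person
    goals: List[Goal] = field(default_factory=list)

    def succeeded(self, state: DroneState) -> bool:
        # A mission is complete only if every associated goal is met.
        return all(goal(state) for goal in self.goals)


def make_flight_mission(destination: str) -> Mission:
    """Build a mission whose goals mirror the ones quoted in the article."""
    return Mission(
        destination=destination,
        goals=[
            lambda s: s.location == destination,      # target reached
            lambda s: s.collisions == 0,              # no collision
            lambda s: s.manual_interventions == 0,    # no manual intervention
        ],
    )
```

Expressing each goal as a separate predicate means that a failed mission can be traced back to the specific condition that was not met.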

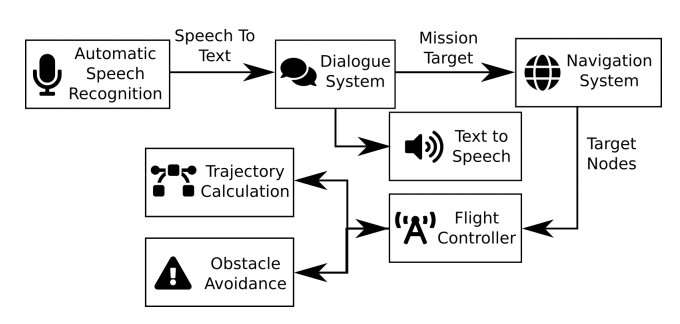

The interactive drone presented by the researchers is a modified version of the Crazyflie 2.0 drone, designed by a company called Bitcraze. It has several components: an automatic speech recognition (ASR) subsystem that transcribes a user's verbal requests; a dialog system that receives these requests, processes them and identifies the target within the office; and a flight controller that plans the drone's trajectory to the desired target while trying to avoid collisions with obstacles.
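To make the flow between these components more concrete, here is a toy Python sketch of the stages after speech recognition. It is an assumption-laden illustration, not the system described in the paper: the transcript is taken as already produced by the ASR, the target names, the 5x5 grid and all function names are hypothetical, and a plain breadth-first search stands in for whatever planner the researchers actually use.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]

# Hypothetical targets placed on a hypothetical 5x5 occupancy grid.
TARGETS: Dict[str, Cell] = {"kitchen": (4, 0), "lab": (4, 4)}
GRID_SIZE = 5


def identify_target(transcript: str) -> Optional[str]:
    """Dialog step (toy): return the first known target named in the transcript."""
    for word in transcript.lower().split():
        word = word.strip(".,!?")
        if word in TARGETS:
            return word
    return None


def plan_path(start: Cell, goal: Cell, obstacles: Set[Cell]) -> Optional[List[Cell]]:
    """Planning step (toy): breadth-first search over free grid cells."""
    parents: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the parent links to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            in_bounds = 0 <= nxt[0] < GRID_SIZE and 0 <= nxt[1] < GRID_SIZE
            if in_bounds and nxt not in obstacles and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None  # no collision-free path exists


def handle_request(transcript: str, start: Cell, obstacles: Set[Cell]) -> Optional[List[Cell]]:
    """Chain the dialog and planning steps for one transcribed request."""
    target = identify_target(transcript)
    if target is None:
        return None  # the request could not be understood
    return plan_path(start, TARGETS[target], obstacles)
```

For example, `handle_request("Please fly to the kitchen.", (0, 0), {(1, 0), (1, 1)})` returns a list of grid cells from the start to the kitchen cell that avoids the two blocked cells, while a request naming an unknown target returns `None`.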

The researchers decided to evaluate each of the system's components separately in order to clearly identify features that needed to be perfected. To evaluate the dialog system, they asked three non-native English speakers to give simple verbal instructions out loud, for instance, ordering the drone to fly to a different room or visit another person in the lab.

Subsequently, the researchers tested their drone's depth perception and collision avoidance capabilities by presenting the drone with three different types of obstacles: a closed door, a person, and a metal bench. Finally, they investigated the rate at which their system could successfully complete missions by sending it to various target rooms using written instructions.

While the drone was found to complete missions with a success rate of 77.78 percent, the researchers found that it had several limitations. For instance, one of the most common causes of mission failure was that the drone turned slightly during take-off, as its four propellers started operating at slightly different times.

"As this is our first prototype, there is plenty of room for future improvement, not only on each of the individual components but also the system as a whole," the researchers said.

The team observed that the drone's dialog system performed particularly poorly and could understand a person's instructions in 57 percent of cases at best. The main issue with the dialog system was that the ASR had difficulty identifying people's names when spoken by users, which caused it to abort the speech recognition process too early.

"In future work, we want to use an improved ASR system," the researchers wrote in their paper. "Furthermore, in order to allow a wider variety of natural language without increasing the size of the training dataset, we also want to use a multi-task approach. That means that the drone dataset will be trained alongside an out-of-domain dataset."

In the initial tests, the drone's collision detection component performed remarkably well, effectively preventing collisions with both people and large objects in the majority of cases. However, it was found to struggle with detecting very thin or translucent furniture. To overcome this limitation, the team is now planning to create a more precise, real-time map of the surrounding environment, as currently, the system bases its predictions on a prerecorded 2-D map.

"Reducing positional errors should also help to improve our total mission success rate, as this was one of the main causes of mission failure during our tests," the researchers explained in their paper. "The other problem that emerged during our tests was the depth perception system performing badly under very bright or changing light conditions. We plan to also address these issues in the future."

Moreover, in their next studies, the researchers would like to improve the system's battery life and battery management, as at the moment, it can only complete three or four missions before it has to be recharged. They would eventually like to increase this number significantly, while also coming up with new solutions that could help to mitigate this problem.

More information: An interactive indoor drone assistant. arXiv:1912.04235 [cs.RO]. arxiv.org/abs/1912.04235

© 2019 Science X Network