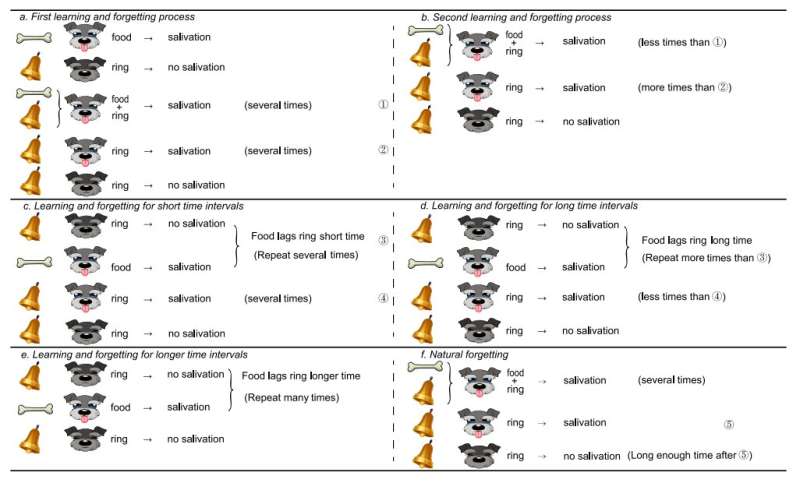

Illustration explaining the concept of Pavlov associative memory. Credit: Sun et al.

Classical conditioning is a psychological process through which animals or humans learn to pair a desired or unpleasant stimulus (e.g., food or a painful experience) with a seemingly neutral stimulus (e.g., the sound of a bell or the flash of a light) after the two stimuli are repeatedly presented together. Russian physiologist Ivan Pavlov studied classical conditioning in great depth and introduced the idea of "associative memory," which involves forming strong associations between a pleasant or unpleasant stimulus and a neutral one.

Pavlov is renowned for his studies on dogs, in which he repeatedly gave the animals food after they heard a specific sound. Interestingly, he observed that the dogs would eventually start salivating (i.e., anticipating the food) on hearing the sound, even before the food was presented to them. This suggests that they had learned to associate the sound with the arrival of food.

In recent years, researchers have been trying to develop computational tools inspired by biological mechanisms, most notably machine learning techniques, and classical conditioning has been a frequent source of inspiration. Some of these Pavlov-inspired approaches attempt to reproduce the 'associative memory' he observed in machines using memristors: electronic components whose resistance depends on the history of the voltage or current applied to them, which allows them to act as memory elements.

A team of researchers at Zhengzhou University of Light Industry and Huazhong University of Science and Technology in China has recently developed a new memristor-based neural network circuit that reproduces Pavlov's notion of associative memory. Their circuit, presented in a paper published in IEEE Transactions on Cybernetics, was designed to overcome some of the limitations of previously proposed memristor-based neural networks that reproduce associative memory.

"Most memristor-based Pavlov associative memory neural networks strictly require that only simultaneous food and ring appear to generate associative memory," the researchers explained in their paper. "In this article, the time delay is considered in order to form associative memory when the food stimulus lags behind the ring stimulus for a certain period of time."

The memristor-based neural network circuit developed by the researchers has three key components: a synapse module, a voltage control module and a time-delay module. Its structure, particularly the time-delay module, allows it to form associations even when a salient stimulus (food, in Pavlov's dog experiments) appears some time after a neutral stimulus (e.g., a sound).

This is a particularly notable achievement, as most previously developed memristor-based neural networks can only form these associations if the two stimuli are fed to the network at the same time. The rate at which the team's circuit learns associations can also be adjusted, simply by changing the interval between the neutral and the salient stimuli.
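To make the role of the delay more concrete, here is a toy, discrete-time sketch in Python. It is not the circuit described by Sun et al. and does not model memristor physics; the class, its parameters, and especially the rule that longer lags produce smaller weight updates are hypothetical assumptions, chosen only to illustrate three ideas from the article: learning within a delay window, a learning speed that depends on the lag, and fast learning with slow forgetting.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToyAssociativeMemory:
    """Toy discrete-time model of Pavlov-style associative memory.

    `w` is a hypothetical stand-in for the conductance of a memristive
    synapse linking the "ring" input to the "salivation" output. All names
    and values are illustrative assumptions, not taken from Sun et al.
    """
    w: float = 0.0             # learned synaptic weight, kept in [0, 1]
    threshold: float = 0.5     # the ring alone triggers salivation once w reaches this
    max_delay: float = 2.0     # longest food-after-ring lag (s) still treated as paired
    learn_rate: float = 0.2    # base increment per paired trial ("fast learning")
    forget_rate: float = 0.05  # decrement per unpaired ring trial ("slow forgetting")

    def salivates(self, ring: bool, food: bool) -> bool:
        # Food drives the response directly; the ring does so only via the learned weight.
        return food or (ring and self.w >= self.threshold)

    def trial(self, ring: bool, food_delay: Optional[float]) -> None:
        """Run one trial: food follows the ring after `food_delay` seconds, or never (None)."""
        if ring and food_delay is not None and 0.0 <= food_delay <= self.max_delay:
            # Paired within the delay window: strengthen the synapse.
            # Assumption: the step shrinks linearly as the lag grows, so the
            # lag sets the effective learning rate.
            scale = 1.0 - food_delay / (self.max_delay + 1.0)
            self.w = min(1.0, self.w + self.learn_rate * scale)
        elif ring:
            # Ring with no food, or food arriving too late: the association fades slowly.
            self.w = max(0.0, self.w - self.forget_rate)


def trials_to_learn(delay: float, max_trials: int = 50) -> Optional[int]:
    """Count paired trials until the ring alone triggers salivation (None if it never does)."""
    memory = ToyAssociativeMemory()
    for n in range(1, max_trials + 1):
        memory.trial(ring=True, food_delay=delay)
        if memory.salivates(ring=True, food=False):
            return n
    return None
```

With these made-up parameters, `trials_to_learn(0.0)`, `trials_to_learn(1.0)` and `trials_to_learn(2.0)` return 3, 4 and 8, while a 3-second lag falls outside `max_delay` and never forms an association (`None`). The linear scaling is arbitrary; it only shows how, in a model of this kind, the lag can act as a knob on how quickly an association forms, and setting `forget_rate` below `learn_rate` mimics "fast learning, slow forgetting".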

"Functions such as learning, forgetting, fast learning, slow forgetting and time-delay learning are implemented by the circuit," the researchers wrote in their paper. "The Pavlov associative memory neural network with time-delay learning provides a reference for further development of brain-like systems."

Overall, the researchers at Zhengzhou University of Light Industry and Huazhong University of Science and Technology have introduced an effective design for memristor-based neural network systems inspired by Pavlovian conditioning. In the future, their circuit could, for instance, aid the development of computational tools that more faithfully reproduce psychological processes observed in animals or humans. The team now plans to keep working on the circuit, optimizing its performance, simplifying its structure and attempting to integrate it into other devices.

More information: Junwei Sun et al. Memristor-Based Neural Network Circuit of Full-Function Pavlov Associative Memory With Time Delay and Variable Learning Rate, IEEE Transactions on Cybernetics (2019). DOI: 10.1109/tcyb.2019.2951520

© 2019 Science X Network