An algorithm with an eye for visibility helps pilots in Alaska

More than three-quarters of Alaskan communities have no access to highways or roads. In these remote regions, small aircraft are a town's bus, ambulance, and food delivery—the only means of getting people and things in and out.

As routine as daily flight may be, it can be dangerous. These small (or general aviation) aircraft are typically flown visually, by a pilot looking out the cockpit windows. If a sudden storm or fog appears, a pilot might not be able to see a runway, nearby aircraft, or rising terrain. In 2018, the Federal Aviation Administration (FAA) reported 95 aviation accidents in Alaska, including several fatal crashes that occurred in remote regions where poor visibility may have played a role.

"General aviation pilots in Alaska need to be aware of the forecasted conditions during preflight planning, but also of any rapidly changing conditions during flight," says Michael Matthews, a meteorologist at MIT Lincoln Laboratory. "There are certain rules, like you can't fly with less than three miles of visibility. If it is worse, pilots need to fly on instruments, but they need to be certified for that."

Pilots check current or forecasted weather conditions before they fly, but a lack of automated weather observation stations throughout the Alaskan bush makes it hard to know exactly what to expect. To help, the FAA recently installed 221 web cameras near runways and mountain passes. Pilots can look at the image feeds online to plan their route. Still, it's difficult to go through what could be hundreds of images and estimate just how far one can see.

So, Matthews has been working with the FAA to turn these web cameras into visibility sensors. He has developed an algorithm, called Visibility Estimation through Image Analytics (VEIA), that uses a camera's image feed to automatically determine the area's visibility. These estimates can then be shared among forecasters and with pilots online in real time.

Trained eyes

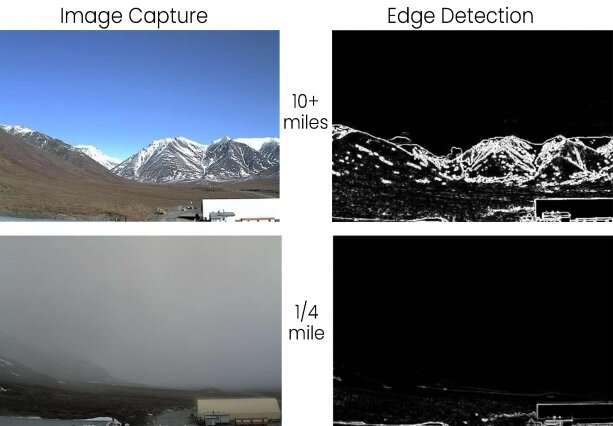

In concept, the VEIA algorithm determines visibility the same way humans do. It looks for stationary "edges." For human observers, these edges are landmarks at known distances from an airfield, such as a tower or mountain top. Observers are trained to judge how well they can see each marker compared with how it looks on a clear, sunny day.

Likewise, the algorithm is first taught what edges look like in clear conditions. The system looks at the past 10 days' worth of imagery. According to Matthews, this timeframe is optimal because a shorter one could be skewed by bad weather and a longer one could be affected by seasonal changes. From these 10 days of images, the system creates a composite "clear" image. This image becomes the reference to which a current image is compared.
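The article does not say how the composite "clear" image is built. As a rough illustration only, the sketch below assumes a per-pixel median over the stored frames, which tends to discard a minority of foggy or stormy frames. The function name `composite_clear_image` and the tiny made-up frames are hypothetical and are not part of VEIA.

```python
from statistics import median

def composite_clear_image(frames):
    """Per-pixel median of equally sized grayscale frames (lists of rows).

    Assumption: a median is used to build the reference image. The
    article does not describe the actual compositing method.
    """
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [median(frame[r][c] for frame in frames) for c in range(width)]
        for r in range(height)
    ]

# Three made-up 2x2 frames; the middle one is washed out, as if by fog.
frames = [
    [[10, 200], [10, 200]],
    [[120, 125], [118, 122]],
    [[12, 198], [11, 201]],
]
clear = composite_clear_image(frames)
```

With two sharp frames and one washed-out frame, the median keeps the high-contrast values, so the reference is not dragged toward the foggy frame.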

To run a comparison, an edge-detection algorithm (called a Sobel filter) is applied to both the reference and current images. This algorithm identifies edges that are persistent (the horizon, buildings, mountain sides) and removes fleeting edges like cars and clouds. Then, the system compares the overall edge strengths and generates a ratio. The ratio is converted into visibility in miles.
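The comparison step can be sketched roughly as follows. This is a minimal illustration, not the VEIA implementation: it applies the standard 3x3 Sobel kernels to two small grayscale images and divides the current edge strength by the reference edge strength. Restricting the sum to pixels that are edges in the reference, the `min_edge` threshold, and all function names are assumptions made for the example. The conversion from ratio to miles is not described in the article, so it is left out.

```python
import math

SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))

def sobel_magnitude(image):
    """Gradient magnitude at each interior pixel; border pixels stay 0."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for r in range(1, height - 1):
        for c in range(1, width - 1):
            gx = gy = 0.0
            for dr in range(3):
                for dc in range(3):
                    pixel = image[r + dr - 1][c + dc - 1]
                    gx += SOBEL_X[dr][dc] * pixel
                    gy += SOBEL_Y[dr][dc] * pixel
            out[r][c] = math.hypot(gx, gy)
    return out

def edge_strength_ratio(reference, current, min_edge=50.0):
    """Current edge strength divided by reference edge strength.

    Only pixels whose reference edge strength is at least `min_edge`
    are counted (an assumed way of ignoring edges that are absent
    from the clear-day reference).
    """
    ref_edges = sobel_magnitude(reference)
    cur_edges = sobel_magnitude(current)
    ref_total = cur_total = 0.0
    for ref_row, cur_row in zip(ref_edges, cur_edges):
        for ref_val, cur_val in zip(ref_row, cur_row):
            if ref_val >= min_edge:
                ref_total += ref_val
                cur_total += cur_val
    return cur_total / ref_total if ref_total else 0.0

# Made-up 5x5 images: a sharp vertical edge, then the same edge in haze.
reference = [[0, 0, 200, 200, 200] for _ in range(5)]
hazy = [[80, 80, 120, 120, 120] for _ in range(5)]
ratio = edge_strength_ratio(reference, hazy)
```

In this toy case the haze cuts the contrast across the edge from 200 to 40 gray levels, so the ratio comes out at about 0.2. An image identical to the reference gives a ratio of 1.0.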

Developing an algorithm that works well across images from any web camera was challenging, Matthews says. Based on where they are placed, some cameras might have a view of 100 miles and others just 100 feet. Other problems stemmed from permanent objects that were very close to the camera and dominated the view, such as a large antenna. The algorithm had to be designed to look past these near objects.

"If you're an observer on Mount Washington, you have a trained eye to look for very specific things to get a visibility estimate. Say, the ski lifts on Attitash Mountain, and so on. We didn't want to make an algorithm that is trained so specifically; we wanted this same algorithm to apply anywhere and across all types of edges," Matthews says.

To validate its estimates, the VEIA algorithm was tested against data from Automated Surface Observing System (ASOS) stations. These stations, of which there are close to 50 in Alaska, are outfitted with sensors that can estimate visibility each hour. The VEIA algorithm, which provides estimates every 10 minutes, was more than 90 percent accurate in detecting low-visibility conditions when compared to co-located ASOS data.
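One way such a comparison could be set up is sketched below. It is hypothetical: the article does not define how accuracy was scored. The sketch assumes each hourly station observation is paired with the camera estimate nearest in time, and that "low visibility" means less than three miles, borrowing the threshold from the flight rule Matthews mentions. All numbers are invented for illustration and are not VEIA or ASOS results.

```python
LOW_VIS_MILES = 3.0  # assumed threshold, borrowed from the three-mile rule

def nearest_estimate(estimates, minute):
    """Return the (minute, miles) estimate closest in time to `minute`."""
    return min(estimates, key=lambda item: abs(item[0] - minute))

def low_visibility_detection_rate(camera_estimates, station_obs):
    """Fraction of low-visibility station observations that the nearest
    camera estimate also flags as low. Returns None if there are none."""
    hits = total = 0
    for minute, station_miles in station_obs:
        if station_miles >= LOW_VIS_MILES:
            continue  # only score the low-visibility cases
        total += 1
        _, camera_miles = nearest_estimate(camera_estimates, minute)
        if camera_miles < LOW_VIS_MILES:
            hits += 1
    return hits / total if total else None

# Invented data: (minutes since start, visibility in miles).
camera = [(50, 2.5), (60, 2.0), (70, 2.2), (110, 4.0),
          (120, 3.5), (130, 5.0), (170, 9.0), (180, 10.0)]
station = [(60, 1.5), (120, 2.5), (180, 10.0)]
rate = low_visibility_detection_rate(camera, station)
```

Here the station reports low visibility twice and the camera estimate agrees once, so the rate is 0.5. A fuller evaluation would also count false alarms, which this sketch ignores.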

Informed pilots

The FAA plans to test the VEIA algorithm in summer 2020 on an experimental website. During the test period, pilots can visit the site to see real-time visibility estimates alongside the camera imagery itself.

"Furthermore, the VEIA estimates can be ingested into weather prediction models to improve the forecasts," says Jenny Colavito, who is the ceiling and visibility research project lead at the FAA. "All of this leads to keeping pilots better informed of weather conditions so that they can avoid flying into hazards."

The FAA is looking into using weather cameras in other regions, starting in Hawaii. "Like Alaska, Hawaii has extreme terrain and weather conditions that can change rapidly. I anticipate that the VEIA algorithm will be utilized along with the weather cameras in Hawaii to provide as much information to pilots as possible," Colavito adds. One of the key advantages of VEIA is that it requires no specialized sensors to do its job, just the image feed from the web cameras.

Matthews recently accepted an R&D 100 Award for the algorithm, which was named one of the world's 100 most innovative products developed in 2019. As a researcher in air traffic management for 28 years, he is thrilled to have achieved this honor.

"Some mountain passes in Alaska are like highways, especially in the summertime, with the number of people flying. You can find countless stories of terrible crashes, people just doing everyday things—a family on their way to a volleyball game," Matthews reflects. "I hope that VEIA might help people go about their lives safer."

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.