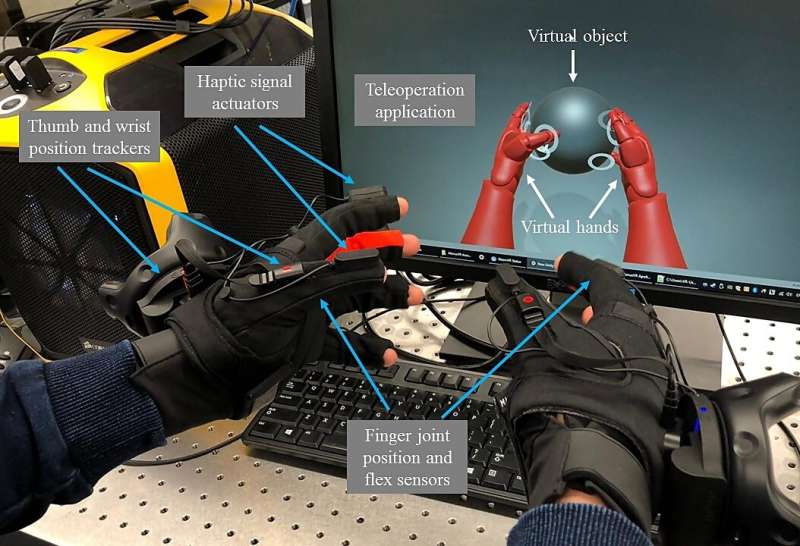

Experimental setup demonstrating human-to-machine applications. Credit: S. Mondal, et al., The University of Melbourne

A Tactile Internet is potentially the next phase of the Internet of Things, in which humans can touch and interact with remote or virtual objects while experiencing realistic haptic feedback.

A team of researchers led by Elaine Wong at the University of Melbourne, Australia, developed a method for enhancing haptic feedback experiences in human-to-machine applications that are typical in the Tactile Internet. The researchers believe their method can be used for forecasting proper feedback in applications ranging from electronic healthcare to virtual reality gaming.

Wong and her colleagues will present their proposed module, which uses an artificial neural network to forecast the material touched, at the Optical Fiber Communication Conference and Exhibition (OFC), to be held 8-12 March 2020 at the San Diego Convention Center, California, U.S.A.

Depending on the dynamicity of the interaction, an optimum human-to-machine application may require a network response time as short as one millisecond.

"These response times impose a limit on how far apart humans and machines can be placed," said Wong. "Hence, solutions to decouple this distance from the network response time [are] critical to realizing the Tactile Internet."
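To make the distance limit concrete, here is a rough back-of-the-envelope sketch. It is not taken from the researchers' paper. It assumes the signal travels through optical fiber with a refractive index of about 1.5 (a typical textbook value) and that the whole response-time budget is spent on round-trip propagation unless a processing delay is given. The function name and parameters are hypothetical.

```python
# Illustrative estimate only; the constants and names below are assumptions,
# not values reported by the researchers.
SPEED_OF_LIGHT_KM_PER_MS = 299.792458  # speed of light in vacuum, km per millisecond
FIBER_REFRACTIVE_INDEX = 1.5           # assumed typical value for optical fiber


def max_one_way_distance_km(response_time_ms, processing_ms=0.0):
    """Upper bound on human-to-machine separation for a given response time.

    The response time covers a round trip, so only half of the remaining
    propagation budget is available for the one-way distance.
    """
    propagation_budget_ms = response_time_ms - processing_ms
    if propagation_budget_ms <= 0:
        return 0.0
    speed_in_fiber = SPEED_OF_LIGHT_KM_PER_MS / FIBER_REFRACTIVE_INDEX
    return speed_in_fiber * propagation_budget_ms / 2


# With a 1 ms budget and no processing delay, the bound is roughly 100 km.
print(round(max_one_way_distance_km(1.0), 1))
```

Under these assumptions, a one-millisecond response time caps the separation at roughly 100 kilometers, and any processing delay shrinks that figure further.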

As a step toward this goal, the team trained a reinforcement learning algorithm to guess the appropriate haptic feedback in a human-to-machine system before the correct feedback is known. The module, called the Event-based HAptic SAmple Forecast (EHASAF), speeds up the process by providing a touch response based on a probabilistic prediction of the material the user is interacting with.

"To facilitate human-to-machine applications over long distance networks, we rely on artificial intelligence to overcome the effects of long propagation latency," said Sourav Mondal, an author on the paper.

Once the actual material is identified, the module adapts and updates its probability distribution to help choose the proper feedback going forward.
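The forecast-then-update loop described above can be illustrated with a deliberately simple sketch. This is not the EHASAF module, which the team says is built on a trained learning algorithm. It is a plain frequency counter that stands in for the idea of guessing from a probability distribution and then correcting it. The class name, method names, and the two placeholder materials are hypothetical.

```python
from collections import Counter


class MaterialForecaster:
    """Toy frequency-based forecaster; a stand-in, not the EHASAF model."""

    def __init__(self, materials):
        # One pseudo-count per material gives a uniform starting distribution.
        self.counts = Counter({m: 1 for m in materials})

    def probabilities(self):
        total = sum(self.counts.values())
        return {m: c / total for m, c in self.counts.items()}

    def forecast(self):
        # Guess the most probable material so feedback can start right away.
        return max(self.counts, key=self.counts.get)

    def update(self, actual):
        # Once the real material is known, shift probability toward it.
        self.counts[actual] += 1


# "material_c" and "material_d" are placeholders; the article names only
# metal and foam among the four options.
forecaster = MaterialForecaster(["metal", "foam", "material_c", "material_d"])
for _ in range(3):
    forecaster.update("foam")
print(forecaster.forecast())  # foam
```

In this sketch the early guess costs nothing in network delay, and each confirmed material makes later guesses more likely to be right.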

The group tested the EHASAF module with a pair of virtual reality gloves used by a human to touch a virtual ball. The gloves contain sensors on the fingers and wrists to detect touches and track movements, forces and the orientation of the hand.

The feedback from the glove should vary depending on which of the four virtual balls, each made of a different material, the user chooses to touch. For example, a metal ball will feel firmer than a foam ball. When a neural network determines that one of the fingers has touched the ball, the EHASAF module begins cycling through candidate feedback options until it resolves the actual material of the chosen ball.

Currently, with four materials, the prediction accuracy of the module is about 97%.

"We think it is possible to improve prediction accuracy with a greater number of materials," said Mondal. "However, more sophisticated artificial intelligence-based models are needed to achieve that."

"More and more sophisticated models with improved performance can be developed based on the fundamental idea of our proposed EHASAF module," Mondal said.

These results and additional research will be presented onsite at OFC 2020.

More information: "Remote Human-to-Machine Distance Emulation through AI-Enhanced Servers for Tactile Internet Applications," S. Mondal, et al., Monday, 9 March 2020, Room 1A, 9:30 a.m. PDT

Provided by The Optical Society