July 23, 2020 feature

Self-powered user-interactive electronic skin for programmable touch operation

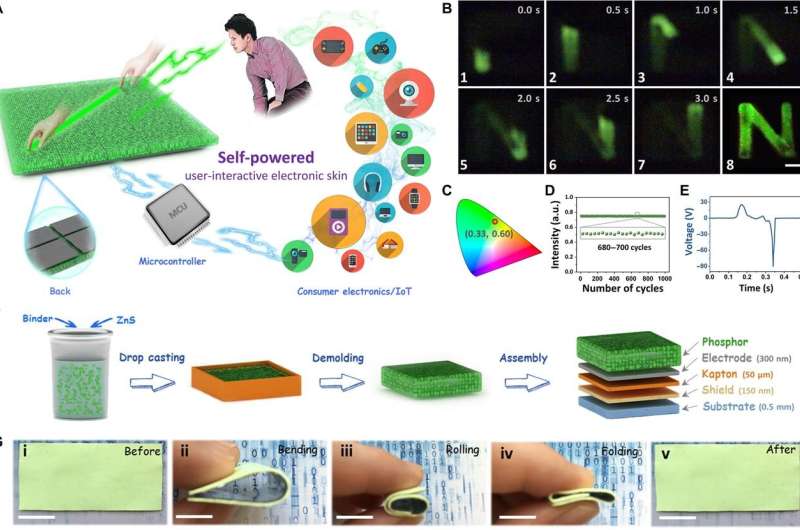

User-interactive electronic skin can map the sense of touch through electronic readouts and provide visual output as a readable response. However, the high power consumption, complex structure and high cost of electronic skin are obstacles to frugal practical applications. In a new report in Science Advances, Xuan Zhao, Zheng Zhang and colleagues in advanced metals, materials and engineering in China described a self-powered, user-interactive electronic skin named SUE-skin, a simple and cost-effective structure based on a triboelectric-optical model. The material converted touch stimuli into electrical signals and emitted instantaneously visible light at a trigger-pressure threshold as low as 20 kPa without an external power supply. The team linked the electronic skin with a microcontroller to build a programmable touch operation platform that recognized more than 156 interaction logics to seamlessly control consumer electronics. The cost-effective technology is relevant for gesture control, augmented reality, and intelligent prosthesis applications.

Human-machine interaction (HMI) involves three parts (human, machine and interactive medium) and two processes: instructing the machine and providing feedback. Most devices are controlled by electrical signals, with commands issued through electrical readouts such as keyboard and mouse inputs. Since humans cannot directly perceive information as electrical signals, the HMI process provides feedback as human-perceivable signals such as visible light, sound and force. Visible light in the form of a display screen is generally the best candidate due to its higher spatial resolution and intuitiveness. However, it is difficult for a traditional interactive medium to meet the increasing demands for flexibility, portability and low power consumption in wearable devices, artificial prosthetics and robotics. As a result, electronic skin (e-skin), a network of flexible sensors with the potential to map and quantify touch stimuli, is increasingly becoming a primary interactive medium for next-generation HMI.

The concept of SUE-skin and the processing approach

In this work, Zhao et al. presented a user-interactive electronic skin named SUE-skin, made using a cost-effective processing approach. The material simultaneously converted touch stimuli into electrical signals and visible light in real time without an external power supply. The scientists explored a programmable touch operation platform to control consumer electronics, with the potential to achieve robust touch-track mapping by superimposing electrical and optical signals. Upon touch, the SUE-skin generated electrical signals that were processed by a microcontroller unit (MCU), while the resulting optical signals could be observed directly by the human eye. The SUE-skin emitted a yellow-green light repeatably over 1000 cycles. The as-fabricated material contained a phosphor layer, an aluminium electrode, an insulating layer, a shield layer and a substrate composed of polydimethylsiloxane (PDMS). The team grounded the shield during operation to minimize external interference during electrical signal acquisition. The luminous intensity and surface topography remained stable during 1200 bending/rolling cycles, demonstrating excellent mechanical stability.

The triboelectric-optical model and optimizing the performance of SUE-skin

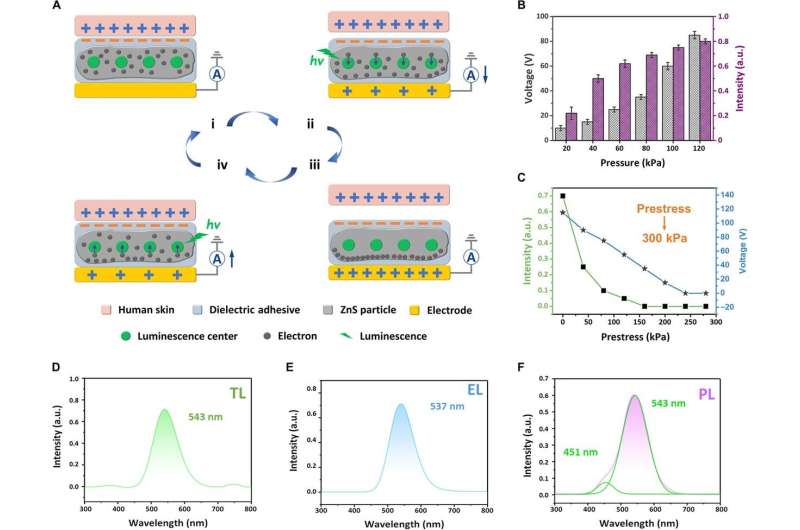

The proposed triboelectric-optical model combined electrostatic induction and electroluminescence, both driven by triboelectrification (the electrification of dissimilar objects or materials on contact), to assist visualization and mapping at low pressure thresholds. When in full contact with human skin, the dielectric adhesive binder of the SUE-skin became negatively charged, while the human skin became positively charged. During gradual separation of the SUE-skin from human skin, the dielectric adhesive retained its negative charge and generated an induced current in the external circuit. The varying electric field in the setup moved electrons within the lattice and excited them, causing electroluminescence. Zhao et al. showed that the triboluminescence (TL) of the SUE-skin resulted from electroluminescence rather than room-temperature photoluminescence (PL).

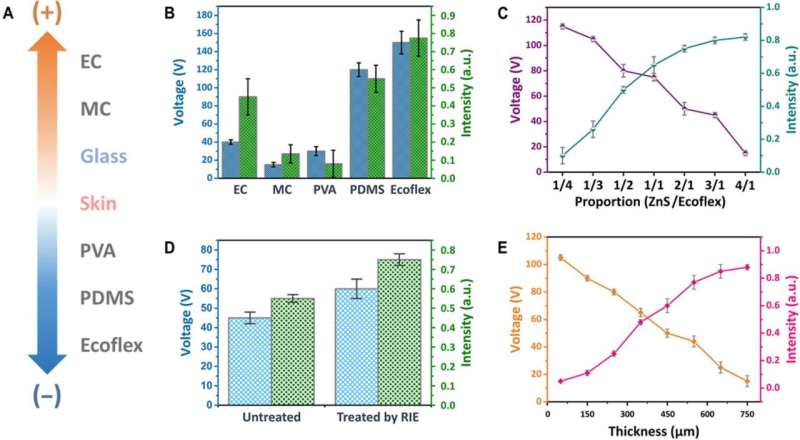

During touch sensing, it was difficult to precisely control the contact area and pressure between human skin and the SUE-skin. In the first quantitative tests, the scientists therefore used glass instead of human skin, since the two have similar triboelectric properties. To improve triboelectrification, the team fabricated microstructures on the material surfaces to increase the surface area and the concentration of excited states. Zhao et al. used Ecoflex as a binder and treated the material with reactive ion etching (RIE) to significantly improve its output performance. In practice, a more intense optical output is easier for the human eye to recognize, and the team kept the output signal as high as possible so that the microcontroller could identify it directly. The analog-to-digital conversion (ADC) voltage was kept above 0.5 V for effective interference immunity, while remaining below the upper limit of the microcontroller's analog input range to avoid electric breakdown.
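
The voltage window described above can be illustrated with a minimal Python sketch. It is not the authors' firmware: only the 0.5 V lower bound comes from the report, while the 3.3 V upper limit, the function name `detect_touches` and the sample values are assumptions chosen for illustration.

```python
from typing import List

NOISE_FLOOR_V = 0.5    # lower bound quoted in the report for interference immunity
ADC_MAX_INPUT_V = 3.3  # ASSUMPTION: hypothetical analog input limit of the MCU


def detect_touches(samples_v: List[float],
                   threshold_v: float = NOISE_FLOOR_V,
                   max_input_v: float = ADC_MAX_INPUT_V) -> List[int]:
    """Return the sample indices at which the voltage rises above the threshold.

    A reading above max_input_v raises ValueError, because such a signal
    would exceed the (assumed) safe analog input range.
    """
    events = []
    was_above = False
    for index, voltage in enumerate(samples_v):
        if voltage > max_input_v:
            raise ValueError(f"sample {index} exceeds the analog input range")
        is_above = voltage > threshold_v
        if is_above and not was_above:
            # Rising edge: the signal has just crossed the noise floor.
            events.append(index)
        was_above = is_above
    return events


if __name__ == "__main__":
    trace = [0.1, 0.2, 0.8, 1.2, 0.3, 0.1, 0.9]  # made-up voltages in volts
    print(detect_touches(trace))
```

Counting only rising edges means one sustained touch is reported once rather than once per sample, which is one simple way to keep noise below 0.5 V from registering as input.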

Proof of concept: A programmable touch operation platform

The intuitive, interactive platform has important applications in the fields of wearable electronics and smart home devices. Zhao et al. combined the SUE-skin with a microcontroller to build a programmable touch-operation platform, which recognized different touch tracks to easily control various consumer electronics devices. The team connected each channel of the electronic skin to a low-pass resistor-capacitor (RC) filter to decrease the electrical noise and programmed the MCU in LabVIEW to recognize electrical signals of the SUE-skin. The intuitive touch lighting and signal processing technology formed an interactive programmable touch operation platform that supported more than 156 interaction logics. Zhao et al. demonstrated the interactive function with an external audio module and display module; for example, when they swiped across a region, the audio module played "Hotel California." When they changed the direction of swiping, the music played at twice the speed, and additional touch inputs returned it to standard speed or paused it. For HMI applications, it was also important to sense and calculate the swiping velocity of such touch-response modules.
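
The signal chain described above (per-channel low-pass filtering, then recognition of swipe direction and velocity) can be sketched in Python. This is an illustrative sketch only: the team programmed the MCU in LabVIEW, and the function names, the single row of evenly spaced channels, the pixel pitch, the sampling rate and the cutoff frequency used here are all assumptions.

```python
import math
from typing import List, Optional, Tuple


def rc_low_pass(samples: List[float], sample_rate_hz: float,
                cutoff_hz: float) -> List[float]:
    """Discrete-time equivalent of a first-order RC low-pass filter."""
    dt = 1.0 / sample_rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)  # RC time constant for the cutoff
    alpha = dt / (rc + dt)                  # smoothing factor between 0 and 1
    filtered = []
    previous = samples[0] if samples else 0.0
    for value in samples:
        previous = previous + alpha * (value - previous)
        filtered.append(previous)
    return filtered


def swipe_from_events(events: List[Tuple[int, float]],
                      pitch_mm: float) -> Optional[Tuple[str, float]]:
    """Estimate swipe direction and speed from (channel_index, time_s) pairs.

    ASSUMPTION: channels lie in one row, numbered in order, pitch_mm apart.
    Returns (direction, speed in mm/s), or None if no swipe can be inferred.
    """
    if len(events) < 2:
        return None
    (first_channel, first_time) = events[0]
    (last_channel, last_time) = events[-1]
    if first_channel == last_channel or last_time <= first_time:
        return None
    direction = "forward" if last_channel > first_channel else "reverse"
    distance_mm = abs(last_channel - first_channel) * pitch_mm
    return direction, distance_mm / (last_time - first_time)


if __name__ == "__main__":
    noisy = [0.0, 1.0, 0.9, 1.1, 1.0]  # made-up voltages in volts
    print(rc_low_pass(noisy, sample_rate_hz=1000.0, cutoff_hz=50.0))
    print(swipe_from_events([(0, 0.0), (1, 0.25), (2, 0.5)], pitch_mm=10.0))
```

In a platform like the one reported, the recognized direction could be mapped to a command such as doubling the playback speed, and the speed estimate addresses the swiping velocity mentioned above. A software filter stands in for the hardware RC stage here purely to show the effect on a sampled signal.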

In this way, Xuan Zhao, Zheng Zhang, and colleagues detailed the concept of SUE-skin, a self-powered, user-interactive electronic skin with interactive luminescence and tactile-sensing properties that needs no external power supply. The team used a triboelectric-optical model as the theoretical basis to realize the concept, since it effectively illustrated the mechanism of touch light emission at low trigger-pressure thresholds. They observed the touch stimuli using an image display while monitoring the measurement through an electrical readout. The team integrated a microcontroller unit (MCU) with the SUE-skin for programmable touch operation. In the future, Zhao et al. aim to introduce other electroluminescent materials to achieve high luminous intensity, contrast and resolution. The SUE-skin is also suited to applications ranging from wearable interactive devices to artificial prostheses and intelligent robots.

More information: Xuan Zhao et al. Self-powered user-interactive electronic skin for programmable touch operation platform, Science Advances (2020). DOI: 10.1126/sciadv.aba4294

Alex Chortos et al. Pursuing prosthetic electronic skin, Nature Materials (2016). DOI: 10.1038/nmat4671

Ouajdi Felfoul et al. Magneto-aerotactic bacteria deliver drug-containing nanoliposomes to tumour hypoxic regions, Nature Nanotechnology (2016). DOI: 10.1038/nnano.2016.137

© 2020 Science X Network