UW researchers surveyed people about online disagreements and then developed potential design interventions that could make these discussions more productive and centered around relationship-building. Credit: Conscious Design/Unsplash

The internet seems like the place to go to get into fights. Whether they're with a family member or a complete stranger, these arguments have the potential to destroy important relationships and consume a lot of emotional energy.

Researchers at the University of Washington worked with almost 260 people to understand these disagreements and to develop potential design interventions that could make these discussions more productive and centered around relationship-building. The team published these findings this April in the latest issue of the Proceedings of the ACM on Human-Computer Interaction, Computer-Supported Cooperative Work.

"Despite the fact that online spaces are often described as toxic and polarizing, what stood out to me is that people, surprisingly, want to have difficult conversations online," said lead author Amanda Baughan, a UW doctoral student in the Paul G. Allen School of Computer Science & Engineering. "It was really interesting to see that people are not having the conversations they want to have on online platforms. It pointed to a big opportunity to design to support more constructive online conflict."

In general, the team said, technology has a way of driving users' behaviors, such as logging onto apps at odd times to avoid people or deleting enjoyable apps to avoid spending too much time on them. The researchers were interested in the opposite: how to make technology respond to people's behaviors and desires, such as the desire to strengthen relationships or have productive discussions.

"Currently many of the designed features that users leverage during an argument support a no-road-back approach to disagreement—if you don't like someone's content, you can unfollow, unfriend or block them. All of those things cut off relationships instead of helping people repair them or find common ground," said senior author Alexis Hiniker, an assistant professor in the UW Information School. "So we were really driven by the question of how do we help people have hard conversations online without destroying their relationships?"

The researchers did their study in three parts. First, they interviewed 22 adults from the Seattle area about what social media platforms they used and whether they felt like they could talk about challenging topics. The team also asked participants to brainstorm potential ways that these platforms could help people have more productive conversations.

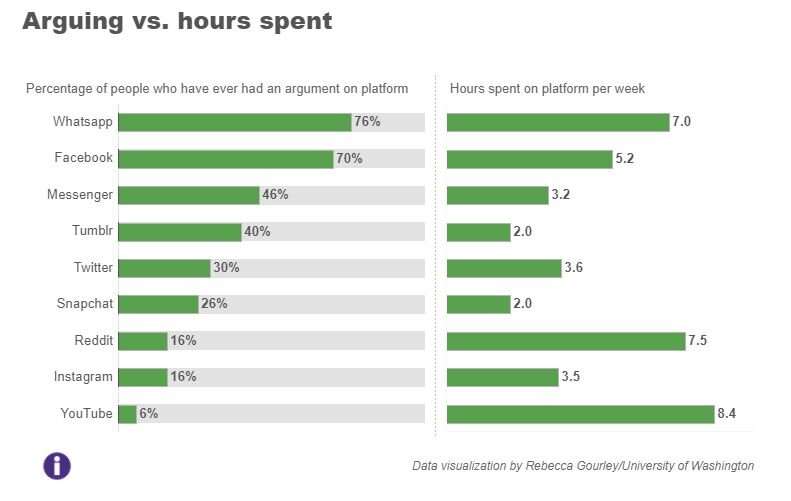

Then the team conducted a larger survey of 137 Americans ranging from 18 to 64 years old with political leanings that ranged from extremely conservative to extremely liberal. These participants were asked to report what social media platforms they used, how many hours per week they used them and whether they had had an argument on these platforms. Participants then scored each platform for whether they felt like it enabled discussions of controversial topics. Participants were also asked to describe the most recent argument they had had, including what it was about and whom they argued with.

Many participants shared that they tried to avoid online arguments, citing a lack of nuance or space for discussing controversial subjects. But participants also noted wanting to have discussions, especially with family and close friends, about topics including politics, ethics, religion, race and other personal details.

When participants did have difficult conversations online, they tended to prefer text-based platforms, such as Twitter, WhatsApp or Facebook, over image-based platforms, such as YouTube, Snapchat and Instagram.

Participants also emphasized a preference for having these discussions in private one-on-one chats, such as on WhatsApp or Facebook Messenger, over a more comment-heavy, public platform.

"It was not surprising to see that people are having a lot of arguments on the more private and text-based platforms," Baughan said. "That really replicates what we do offline: We would pull someone aside to have a private conversation to resolve a conflict."

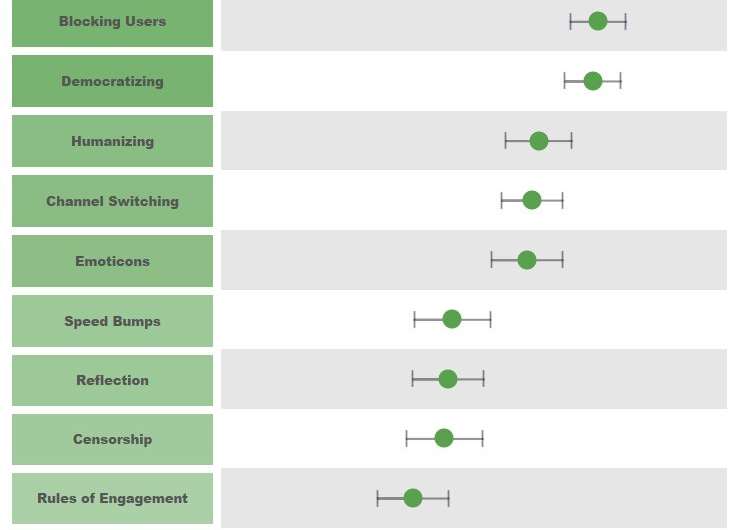

Using findings from the interviews and the survey, the team developed 12 potential technological design interventions that could support users when having hard conversations. The researchers created storyboards that illustrated each intervention and asked 98 new participants, ranging from 22 to 65 years old, to evaluate the interventions.

The most popular ideas included:

Credit: University of Washington

Democratizing

In this intervention, community members use reactions, such as upvoting, to boost constructive comments or content.

"This moves us away from the loudest voice drowning out everyone else and elevates the larger, quieter base of people," Hiniker said.
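As a rough illustration of the democratizing idea, the short Python sketch below orders a comment thread by how many "constructive" reactions each comment has received. It is not the researchers' design or any platform's real ranking system: the `Comment` class, the `constructive_votes` field, the `rank_by_constructive_votes` function and the sample thread are all hypothetical, invented here for illustration.

```python
from dataclasses import dataclass


@dataclass
class Comment:
    author: str
    text: str
    # Hypothetical count of "this was constructive" reactions from the community.
    constructive_votes: int = 0


def rank_by_constructive_votes(comments):
    """Return comments ordered so the most-upvoted appear first.

    sorted() is stable, so comments with equal vote counts keep their
    original (for example, chronological) order.
    """
    return sorted(comments, key=lambda c: c.constructive_votes, reverse=True)


# Invented sample thread, for illustration only.
thread = [
    Comment("user_a", "You're all wrong.", constructive_votes=1),
    Comment("user_b", "Here's a source we could both look at.", constructive_votes=7),
    Comment("user_c", "I see your point, but consider this.", constructive_votes=4),
]

for comment in rank_by_constructive_votes(thread):
    print(comment.constructive_votes, comment.author, comment.text)
```

In this sketch the comment that the community marked as most constructive rises to the top regardless of when it was posted, which is one simple way a platform could elevate quieter voices over the loudest one.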

Humanizing

The goal of this intervention is to remind people that they are interacting with other people. Ideas include preventing users from being anonymous, increasing the size of users' profile pictures, or providing more details about users, such as identity, background or mood.

Channel switching

This intervention provides users with the ability to move a conversation to a private space.

"I envision this intervention as the platform saying: 'Would you like to move this conversation offline?' Or maybe it has some sort of button, where you can quickly say: 'OK, let's go away from the comments section and into a private chat,'" Baughan said. "That could help show more respect for the relationship, because it doesn't become this public arena of who's going to win this fight. It becomes more about trying to reach an understanding."

Participants' willingness to try each of the 12 potential design interventions, as rated by the 98 participants who evaluated the storyboards. The circle marks the average response, and the whiskers on either side of the circle show the confidence interval. A darker green color on the title of each intervention is also associated with a higher willingness to try. To further explore the data and the storyboards for each intervention, see the interactive graphic here: https://tableau.washington.edu/views/StoryboardExplorer/Startingdashboard. Credit: Rebecca Gourley/University of Washington

The least popular idea:

Biofeedback

This intervention uses biological feedback, such as a user's heart rate, to provide context about how someone is currently feeling.

"People would tell us: 'I don't want to share a lot of personal information about my internal state. But I would like to have a lot of personal information about my conversational partner's internal state,'" Hiniker said. "That was one of the design paradoxes we saw."

The next step for this research would be to start deploying some of these interventions to see how well they help or hurt online conversations in the wild, the team said. But first, social media companies should take a step back and think about the purpose of the interaction space they've created and whether their current platforms are meeting those goals.

"I would love to see technology help prompt people to slow down when it comes to things like knee-jerk emotional reactions," Baughan said. "It could ask people to reflect: Is this a good use of my time? How much do I value this relationship with this person? Do I feel like it's safe to engage in this conversation? And if a conversation happens in a public space, it could suggest taking it offline or going to a private space."

More information: Proceedings of the ACM on Human-Computer Interaction, Computer-Supported Cooperative Work, DOI: 10.1145/3449230, amandabaughan.github.io/pubs/S … IsWrong_CSCW2021.pdf

Provided by University of Washington