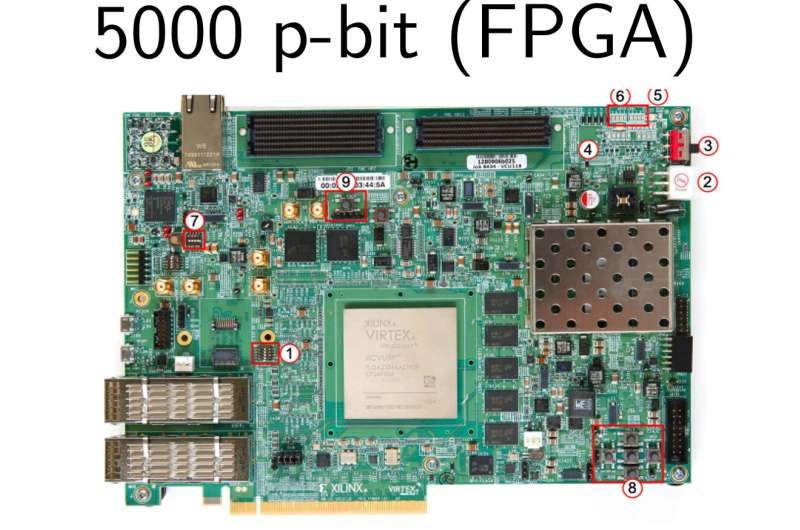

The team implemented a 5,000-p-bit probabilistic computer on state-of-the-art field-programmable gate arrays (FPGAs). Credit: Aadit et al.

In recent years, engineers have been trying to devise new computers and devices that could solve challenging real-world problems faster and more efficiently. Among the most promising of these are Ising machines (IMs), physics-based systems designed to tackle complex optimization problems.

Researchers at the University of California and the University of Messina have recently developed a sparse Ising machine architecture that runs on existing classical computer hardware. This architecture, presented in a paper published in Nature Electronics, was found to be significantly faster than standard optimization methods running on a central processing unit (CPU).

"Building domain-specific, quantum-inspired architectures has become an important area of research with the slowing down of Moore's Law," Kerem Camsari, one of the researchers who carried out the study, told TechXplore. "The primary objective of this work was to extend our earlier work on probabilistic or p-bits, conceptually in-between bits and qubits."

In 2019, Camsari and his colleagues showed that a network of eight p-bits based on nanodevices could solve some hard optimization problems in an energy-efficient way. In their new paper, they scaled their networks up to 5,000 p-bits, implemented in classical CMOS technology, a leading technology used to build integrated circuit (IC) chips and other electronic components.
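For readers wondering what a p-bit actually does, here is a minimal sketch using the update rule commonly given in the p-bit literature; the article itself does not state this rule, so treat it as an assumption. A p-bit fluctuates between -1 and +1, and its input (a local field built from couplings `J` to other p-bits and a bias `h`) tilts the odds: a strongly positive input makes +1 nearly certain, while zero input gives a fair coin flip. The function names and the inverse-temperature parameter `beta` are illustrative, not taken from the team's implementation.

```python
import math
import random


def pbit_update(J, h, m, i, beta=1.0, rng=random):
    """Resample p-bit i in place and return its new value (+1 or -1).

    Assumed update rule (standard in the p-bit literature, not given in the article):
        I_i = beta * (h[i] + sum_j J[i][j] * m[j])
        m_i = +1 if tanh(I_i) > r else -1,  with r drawn uniformly from [-1, 1)
    which makes P(m_i = +1) = (1 + tanh(I_i)) / 2.
    """
    field = h[i] + sum(J[i][j] * m[j] for j in range(len(m)) if j != i)
    r = rng.uniform(-1.0, 1.0)
    m[i] = 1 if math.tanh(beta * field) > r else -1
    return m[i]


def fraction_up(bias, beta=1.0, steps=20000, seed=0):
    """Fraction of +1 outcomes for one isolated p-bit with bias h = bias."""
    rng = random.Random(seed)
    J, h, m = [[0.0]], [bias], [1]
    ups = sum(1 for _ in range(steps) if pbit_update(J, h, m, 0, beta, rng) == 1)
    return ups / steps


if __name__ == "__main__":
    for bias in (-1.0, 0.0, 1.0):
        expected = (1 + math.tanh(bias)) / 2
        print(f"h={bias:+.1f}  measured={fraction_up(bias):.3f}  expected={expected:.3f}")
```

The measured fractions should track (1 + tanh(h)) / 2, which is the sense in which a p-bit sits "in between" a deterministic bit and a qubit: it is always either -1 or +1, but which one it shows is a tunable probability.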

The team found that increasing the number of p-bits in their architecture raised its speed and performance, allowing it to tackle more complex optimization problems more efficiently. In addition, their architecture was found to outperform state-of-the-art classical approaches that have been widely used for decades.

"What is particularly promising about our recent work is that the same architecture we developed here could be applied to spintronics technology," Giovanni Finocchio, another researcher involved in the study, told TechXplore. "As we showed earlier this year, p-computing can be highly spintronics compatible and orders of magnitude further improvements in speed and scalability can be achieved in integrated magnetic p-computers."

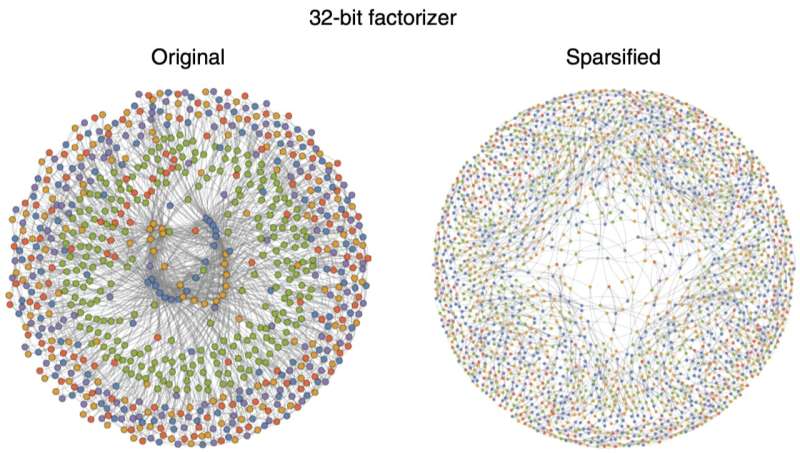

The key idea enabling parallelism was to convert optimization problems into less dense (sparsified) networks at the expense of additional p-bits. Credit: Aadit et al

The sparse Ising machine developed by Camsari, Finocchio and their colleagues is based on the idea that, when making probabilistic decisions, parallelism comes from sparsity. In a sparse network, each p-bit is connected to only a few others, so many p-bits that share no connection can be updated at the same time. By analogy, consulting a few trustworthy sources can lead to an informed decision faster and more efficiently than consulting many parties.
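One common way to turn sparsity into parallelism, assumed here rather than taken from the article, is graph coloring: p-bits are assigned colors so that no two connected p-bits share one, and every p-bit of a given color can then be updated simultaneously, because none of them depends on another's current value. The sparser the network, the fewer colors are needed and the more p-bits update at once. The sketch below emulates that scheme sequentially in Python; `greedy_coloring`, `colored_sweep` and the ring-shaped example problem are hypothetical names chosen for illustration.

```python
import math
import random


def greedy_coloring(adj):
    """Give each p-bit the smallest color not already used by one of its neighbors."""
    color = {}
    for node in sorted(adj, key=lambda n: -len(adj[n])):  # highest degree first
        taken = {color[nb] for nb in adj[node] if nb in color}
        c = 0
        while c in taken:
            c += 1
        color[node] = c
    return color


def colored_sweep(adj, J, h, m, color, beta, rng):
    """Update every p-bit once, one color group at a time.

    Within a group no two p-bits are coupled, so all their local fields can be
    computed from the current state first and then written together, which is
    what parallel hardware would do in a single step.
    """
    for c in sorted(set(color.values())):
        group = [n for n in adj if color[n] == c]
        fields = {n: h[n] + sum(J[n][nb] * m[nb] for nb in adj[n]) for n in group}
        for n in group:
            m[n] = 1 if math.tanh(beta * fields[n]) > rng.uniform(-1.0, 1.0) else -1
    return m


def ring(n, coupling=1.0):
    """Hypothetical example problem: a ring of n p-bits with equal couplings."""
    adj = {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}
    J = {i: {nb: coupling for nb in adj[i]} for i in range(n)}
    return adj, J


if __name__ == "__main__":
    adj, J = ring(8)
    color = greedy_coloring(adj)
    print("colors used:", len(set(color.values())))  # a ring of 8 needs only 2
    rng = random.Random(1)
    m = {i: -1 for i in adj}
    h = {i: 5.0 for i in adj}  # strong positive bias on every p-bit
    print(colored_sweep(adj, J, h, m, color, beta=10.0, rng=rng))
```

In this toy ring, half of the p-bits update in each step no matter how long the ring is, so the number of updates per step grows with the number of p-bits. That is the flavor of scaling the researchers describe next, though their hardware scheme may differ in its details.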

"We have invented techniques that can take any hard optimization problem and turn [it] into a sparse network to take parallel samples," Navid Anjum Aadit, a researcher involved in the study, explained. "One unique feature of our architecture is that its performance (probabilistic updates per second) scales linearly with the number of p-bits in the system. This is highly unusual, and it is the highest level of parallelism we can hope to achieve."
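The article does not describe how a dense problem is sparsified. A minimal sketch, assuming that over-connected p-bits are split into copies, is shown here: each p-bit with too many connections is replaced by a chain of copies, each copy keeps a share of the original connections, and neighboring copies are tied by a strong positive coupling (`copy_coupling`, a hypothetical parameter) so they tend to take the same value. This mirrors the figure caption's trade-off of fewer connections per p-bit at the cost of additional p-bits, but it is an illustration, not the authors' exact construction.

```python
from collections import defaultdict


def sparsify(edges, max_degree, copy_coupling=5.0):
    """Hypothetical degree-reduction sketch (not the authors' published construction).

    edges: {(a, b): weight} for an undirected graph without self-loops.
    Returns {(u, v): weight} where every node is a (original_node, copy_index)
    pair and no node has more than max_degree connections.
    """
    if max_degree < 3:
        raise ValueError("max_degree must be >= 3 to leave room for chain links")
    incident = defaultdict(list)
    for a, b in edges:
        incident[a].append((a, b))
        incident[b].append((a, b))

    per_copy = max_degree - 2  # a middle copy also carries two chain links
    slot = {}  # (node, edge) -> index of the copy that carries that edge
    new_edges = {}
    for node, inc in incident.items():
        if len(inc) <= max_degree:
            for e in inc:
                slot[(node, e)] = 0  # already sparse enough: keep a single copy
            continue
        n_copies = -(-len(inc) // per_copy)  # ceiling division
        for k, e in enumerate(inc):
            slot[(node, e)] = k // per_copy
        for k in range(n_copies - 1):
            # strong ferromagnetic link so neighboring copies tend to agree
            new_edges[((node, k), (node, k + 1))] = copy_coupling

    for (a, b), weight in edges.items():
        u = (a, slot[(a, (a, b))])
        v = (b, slot[(b, (a, b))])
        new_edges[(u, v)] = weight
    return new_edges


def degrees(edges):
    """Count connections per node."""
    deg = defaultdict(int)
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return dict(deg)


if __name__ == "__main__":
    # Fully connected 5-node problem: every node starts with 4 connections.
    dense = {(a, b): 1.0 for a in range(5) for b in range(a + 1, 5)}
    sparse = sparsify(dense, max_degree=3)
    print("p-bits:", len(degrees(dense)), "->", len(degrees(sparse)))
    print("max degree:", max(degrees(dense).values()), "->", max(degrees(sparse).values()))
```

For the fully connected five-node example, the sketch trades 5 p-bits with 4 connections each for 20 p-bits with at most 3 connections each; a sparser graph like this can then be colored with fewer colors and updated in larger parallel groups.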

The findings gathered by this team of researchers highlight the potential of sparse Ising machines, even when these run on conventional computer hardware. In fact, the team found that their Ising machine could tackle optimization problems as well as, if not better than, many state-of-the-art classical techniques while running on existing hardware.

"A particularly impressive example was solving the integer factorization problem for extremely large numbers (up to 32 bits), far larger than any other probabilistic solver that attempted this problem," Andrea Grimaldi, one of the researchers who conducted the study, told TechXplore. "We must mention, however, that factorization has many non-probabilistic algorithms and these can be more efficient than our approach. Our purpose was to see how our machine can solve extremely difficult optimization problems, allowing us to demonstrate its superior performance over other probabilistic solvers, classical or quantum."

In the future, the sparse Ising machine architecture developed by Camsari, Finocchio, Aadit, Grimaldi and their colleagues could be applied to several other real-world optimization problems. In their next studies, the researchers plan to further scale up their p-computers, from 5,000 p-bits to 50,000–100,000 p-bits, using different approaches that they are currently assessing.

"We are deeply interested in designing new algorithms and architectures but also using the power and promise of emerging technology such as magnetic nanodevices," Camsari added. "We are constantly looking for new applications of p-computers in quantum computing as well as artificial intelligence."

More information: Navid Anjum Aadit et al, Massively parallel probabilistic computing with sparse Ising machines, Nature Electronics (2022). DOI: 10.1038/s41928-022-00774-2

Journal information: Nature Electronics

© 2022 Science X Network