Digital security dialogue: Leveraging human verification to educate people about online safety

Online safety and ethics are serious issues and can adversely affect less experienced users. Researchers have built upon familiar human verification techniques to add an element of discrete learning into the process. This way users can learn about online safety and ethics issues while simultaneously verifying they are human. Trials show that users responded positively to the experience and felt they gained something from these microlearning sessions.

The internet is an integral part of modern living, for work, leisure, shopping, keeping in touch with people, and more. It's hard to imagine that anyone could live in an affluent country, such as Japan, and not use the internet relatively often. Yet despite its ubiquity, the internet is far from risk-free. Issues of safety and security are of great concern, especially for those with less exposure to such things. So a team of researchers from the University of Tokyo, including Associate Professor Koji Yatani of the Department of Electrical Engineering and Information Systems, set out to help.

Surveys of internet users in Japan suggest that a vast majority have not had much opportunity to learn about ways in which they can stay safe and secure online. But it seems unreasonable to expect this same majority to intentionally seek out the kind of information they would need to educate themselves. To address this, Yatani and his team thought they could instead introduce educational materials about online safety and ethics into a typical user's daily internet experience. They chose to take advantage of something that many users will come across often during their usual online activities: human verification.

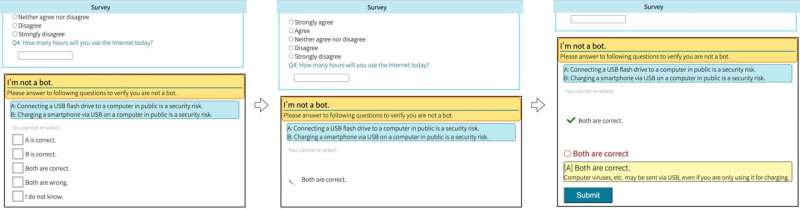

You've probably seen this yourself: a pop-up window of some sort asking you to type out an unclear word, rearrange puzzle pieces, click on a certain class of object in a set of images, or something similar. These are examples of human verification methods that can protect websites from automated malicious exploitation. Yatani and his team decided to trial a system they called DualCheck, which replaces verification tasks that offer nothing of value to the user with questions designed to improve knowledge about online safety and ethics. As for the verification element of these user prompts, the way the user moves their mouse or pointing device can be used to verify whether they are a human or an automated bot.
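The article does not say how DualCheck analyzes pointer movement, so the following is only a rough sketch of the general idea, not the researchers' method. It assumes pointer samples arrive as `(time, x, y)` tuples and uses two hypothetical features, path straightness and speed variation, with made-up thresholds: a scripted pointer often travels in a perfectly straight line at constant speed, while a human hand tends to curve and to speed up and slow down.

```python
import math
from statistics import pstdev


def path_features(points):
    """Return (straightness, speed_cv) for a list of (t_seconds, x, y) samples.

    straightness: straight-line distance divided by travelled distance (1.0 = perfectly straight).
    speed_cv: coefficient of variation of the per-segment speeds (0.0 = constant speed).
    Both features are illustrative assumptions, not taken from the DualCheck paper.
    """
    path_len = 0.0
    speeds = []
    for (t0, x0, y0), (t1, x1, y1) in zip(points, points[1:]):
        step = math.hypot(x1 - x0, y1 - y0)
        path_len += step
        dt = t1 - t0
        if dt > 0:
            speeds.append(step / dt)

    direct = math.hypot(points[-1][1] - points[0][1], points[-1][2] - points[0][2])
    straightness = direct / path_len if path_len > 0 else 1.0
    mean_speed = sum(speeds) / len(speeds) if speeds else 0.0
    speed_cv = pstdev(speeds) / mean_speed if mean_speed > 0 else 0.0
    return straightness, speed_cv


def looks_human(points, max_straightness=0.995, min_speed_cv=0.05):
    """Toy heuristic: accept paths that are neither perfectly straight nor constant-speed.

    The thresholds are arbitrary placeholders chosen for this example.
    """
    if len(points) < 3:
        return False
    straightness, speed_cv = path_features(points)
    return straightness < max_straightness and speed_cv > min_speed_cv


# A curved, unevenly paced path versus a straight, constant-speed one.
human_path = [(0.00, 0, 0), (0.02, 3, 1), (0.05, 10, 6), (0.07, 25, 18),
              (0.10, 40, 22), (0.15, 48, 23), (0.22, 50, 25)]
bot_path = [(i * 0.01, i * 10, i * 5) for i in range(20)]

print(looks_human(human_path))
print(looks_human(bot_path))
```

A real system would need far more than two hand-picked features, since a bot can easily add jitter to its movements, but the sketch shows why pointer traces carry a usable signal at all.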

"Given people are likely already familiar with these verification tasks, it seemed reasonable to expand upon these with educational content rather than try and encourage some new behavior," said Yatani. "We were pleasantly reassured that our test group found DualCheck less bothersome than a typical verification, as the questions it offered felt more meaningful to them than arbitrary tasks like clicking on street signs."

The researchers found that crafting appropriate questions was the most challenging part of this study. Each of the 20 questions used required careful wording, and these were iterated over a number of trials. These initial tests were performed with a group recruited in Japan, so they were run in Japanese, but the team intends to expand the trial to other countries in the future. They also plan to run larger-scale trials and experiment with different kinds of educational content for different target groups, such as the elderly.

More information: Ryo Yoshikawa, Hideya Ochiai, and Koji Yatani, "DualCheck: Exploiting Human Verification Tasks for Opportunistic Online Safety Microlearning", USENIX Symposium on Usable Privacy and Security. www.usenix.org/conference/soup … esentation/yoshikawa