Credit: Brigham Young University

What you sculpt is what you get.

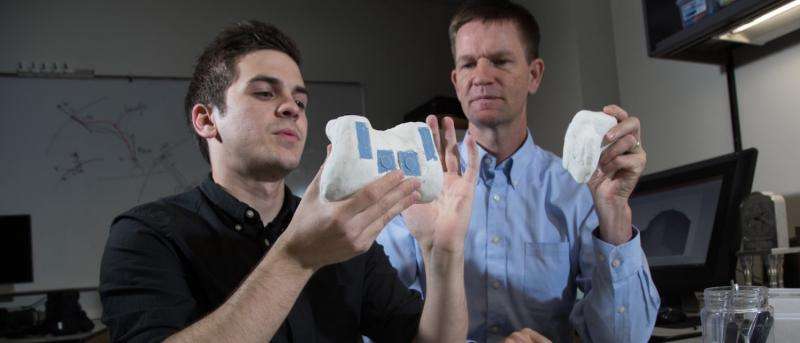

A new method of 3D printing created by Computer Science Professors Michael Jones and Kevin Seppi reduces the amount of skill required to design an object for 3D printing.

The approach uses clay to model the basic shape of the desired object around blocks, or "blanks," that represent the buttons, knobs, or sliders that will make the object interactive. The finished clay model is then scanned, and the computer recognizes each blank as a specific type of control, so the printed object leaves exactly the right space for the real component to be installed after printing.

Industrial designers have been sculpting in clay for decades, but this new technique combines form with functionality.

"Working in clay is awesome until you have to add all the circuitry in it," Jones said. "With this method, you can get the shape right by working with the clay. Even for people who are skilled in 3D modeling or even if you have years of experience designing models on a computer screen, making a shape that matches an opening in a specific context is pretty tough."

Credit: Mark A. Philbrick, BYU Photo

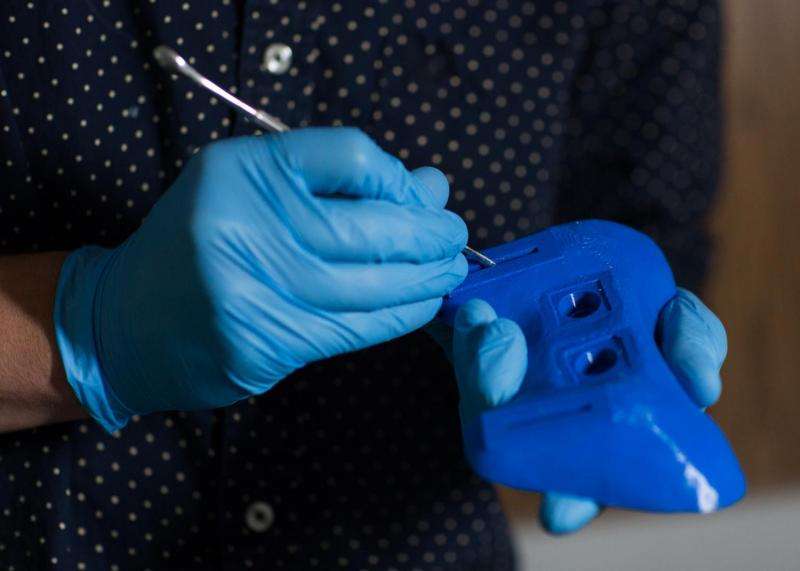

In addition to making the right shape, the computer also calculates the optimal way to cut the object in half to fit the circuitry inside.

In testing, sculpting an interactive prototype took about 30 minutes of human effort. After scanning, a single button click finishes the 3D modeling process.

The idea of using placeholders in 3D printing emerged last year in the form of stickers that marked where a button would be placed on an object, but it was difficult to get the right depth for the button in the printed model. Jones and Seppi's updated method, which uses clay modeled around blanks, was published at ACM CHI 2016, the leading conference on human-computer interaction.

Credit: Brigham Young University

The algorithm used to identify the blanks in the clay model could also be applied in future research. Jones and Seppi hope to build on the idea by designing motion sensors that add further functionality.

"We want to develop a circuit that not only senses acceleration and rotation, but also can make sense of that motion," Jones said. "Whether it's a sensor in a shoe or a cane, we hope to be able to write programs that interactively learn how to recognize a step or a cane drop accurately."

Credit: Savanna Sorensen, BYU Photo

Credit: Savanna Sorensen, BYU Photo

Provided by Brigham Young University