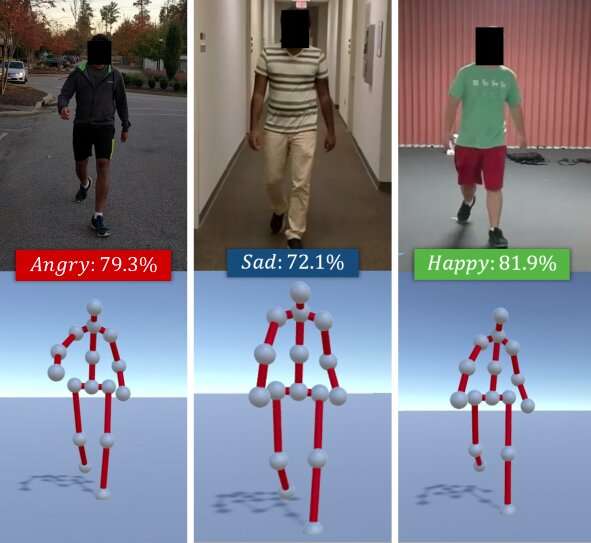

The algorithm identifies the perceived emotions of individuals based on their walking styles. Given an RGB video of an individual walking (top), the researchers' method extracts the walking gait as a series of 3D poses (bottom). It then combines deep features learned via an LSTM with affective features computed from posture and movement cues, and classifies them into basic emotions (e.g., happy, sad) using a Random Forest classifier. Credit: Randhavane et al.

A team of researchers at the University of North Carolina at Chapel Hill and the University of Maryland at College Park has recently developed a new deep learning model that can identify people's perceived emotions based on their walking styles. Their approach, outlined in a paper pre-published on arXiv, extracts an individual's gait from an RGB video of that person walking, then analyzes the gait and classifies it as one of four emotions: happy, sad, angry or neutral.

"Emotions play a significant role in our lives, defining our experiences, and shaping how we view the world and interact with other humans," Tanmay Randhavane, one of the primary researchers and a graduate student at UNC, told TechXplore. "Perceiving the emotions of other people helps us understand their behavior and decide our actions toward them. For example, people communicate very differently with someone they perceive to be angry and hostile than they do with someone they perceive to be calm and contented."

Most existing emotion recognition and identification tools work by analyzing facial expressions or voice recordings. However, past studies suggest that body language (e.g., posture and movement) can also reveal a great deal about how someone is feeling. Inspired by these observations, the researchers set out to develop a tool that can automatically identify the perceived emotion of individuals based on their walking style.

"The main advantage of our perceived emotion recognition approach is that it combines two different techniques," Randhavane said. "In addition to using deep learning, our approach also leverages the findings of psychological studies. A combination of both these techniques gives us an advantage over the other methods."

The approach first extracts a person's walking gait from an RGB video, representing it as a series of 3D poses. It then uses a long short-term memory (LSTM) recurrent neural network and a random forest (RF) classifier to analyze these poses and identify the most likely perceived emotion of the person in the video: happiness, sadness, anger or neutral.
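To make the data flow concrete, here is a minimal numpy sketch of how a gait, stored as T frames of J joints with (x, y, z) coordinates, could be fed through an LSTM whose final hidden state serves as a deep feature vector. The array layout, the 16-joint skeleton, the 75-frame length and the hidden size of 32 are assumptions chosen for illustration. The weights are random and untrained, so this shows the mechanics of an LSTM encoder, not the researchers' trained model.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_encode(poses, hidden_size=32, seed=0):
    """Run a single-layer LSTM over a gait and return its final hidden state.

    poses: array of shape (T, J, 3), i.e. T frames of J joints with (x, y, z).
    Weights are random and untrained (illustration only).
    """
    T, J, _ = poses.shape
    x_seq = poses.reshape(T, J * 3)          # flatten each 3D pose into one input vector
    d = x_seq.shape[1]
    rng = np.random.default_rng(seed)
    # One stacked weight matrix for the input, forget, output and candidate gates.
    W = rng.normal(scale=0.1, size=(4 * hidden_size, d + hidden_size))
    b = np.zeros(4 * hidden_size)
    h = np.zeros(hidden_size)                # hidden state
    c = np.zeros(hidden_size)                # cell state
    for x_t in x_seq:
        z = W @ np.concatenate([x_t, h]) + b
        i, f, o, g = np.split(z, 4)
        i, f, o = sigmoid(i), sigmoid(f), sigmoid(o)
        g = np.tanh(g)
        c = f * c + i * g                    # forget part of the old memory, add new content
        h = o * np.tanh(c)                   # expose a gated view of the memory
    return h

# Hypothetical gait: 75 frames of a 16-joint skeleton, random coordinates.
gait = np.random.default_rng(1).normal(size=(75, 16, 3))
deep = lstm_encode(gait)
print(deep.shape)
```

Because the whole sequence is summarized by the last hidden state, gaits of different lengths map to deep feature vectors of the same size, which is what allows them to be combined with fixed-size hand-crafted features later.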

The LSTM learns deep features from the pose sequences. These are then combined with affective features computed from the gaits using posture and movement cues, and the combined feature set is classified by the RF classifier.
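The fusion step can be sketched as below, assuming scikit-learn's `RandomForestClassifier`. The affective cues shown (hand and foot separation, bounding-box volume, root-joint speed and acceleration) are illustrative examples of posture and movement features, not the paper's exact feature set. The joint indices, frame rate, synthetic data and the `deep_features_stub` placeholder, which stands in for the LSTM output, are all hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

EMOTIONS = ["happy", "sad", "angry", "neutral"]
# Hypothetical joint indices for a 16-joint skeleton; real layouts vary by pose estimator.
ROOT, L_HAND, R_HAND, L_FOOT, R_FOOT = 0, 8, 11, 14, 15

def affective_features(poses, fps=30.0):
    """Illustrative posture and movement cues from a (T, J, 3) gait."""
    # Posture cues, averaged over all frames.
    hand_dist = np.linalg.norm(poses[:, L_HAND] - poses[:, R_HAND], axis=1).mean()
    foot_dist = np.linalg.norm(poses[:, L_FOOT] - poses[:, R_FOOT], axis=1).mean()
    extent = poses.max(axis=1) - poses.min(axis=1)     # per-frame bounding-box size (T, 3)
    volume = np.prod(extent, axis=1).mean()
    # Movement cues from finite differences of the root-joint trajectory.
    vel = np.diff(poses[:, ROOT], axis=0) * fps
    acc = np.diff(vel, axis=0) * fps
    speed = np.linalg.norm(vel, axis=1).mean()
    accel = np.linalg.norm(acc, axis=1).mean()
    return np.array([hand_dist, foot_dist, volume, speed, accel])

def deep_features_stub(poses):
    # Placeholder for the LSTM output: the flattened mean pose, NOT a learned feature.
    return poses.mean(axis=0).ravel()

rng = np.random.default_rng(0)
gaits = rng.normal(size=(40, 60, 16, 3))           # 40 synthetic gaits, 60 frames each
labels = rng.integers(0, len(EMOTIONS), size=40)   # random labels, for illustration only

# Fuse: concatenate deep and affective features for every gait, then fit the forest.
X = np.stack([np.concatenate([deep_features_stub(g), affective_features(g)]) for g in gaits])
clf = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, labels)
pred = [EMOTIONS[k] for k in clf.predict(X[:3])]
print(X.shape, pred)
```

Concatenating the two feature families lets the forest pick whichever cues are most informative at each split, which is one simple way to combine learned and psychology-inspired features in a single classifier.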

Randhavane and his colleagues carried out a series of preliminary tests on a dataset containing videos of people walking and found that their model could identify the perceived emotions of individuals with 80 percent accuracy. In addition, their approach led to an improvement of approximately 14 percent over other perceived emotion recognition methods that focus on people's walking style.

"Though we do not make any claims about the actual emotions a person is experiencing, our approach can provide an estimate of the perceived emotion of that walking style," Aniket Bera, a research professor in the Department of Computer Science who is supervising the research, told TechXplore. "There are many applications for this research, ranging from better human perception for robots and autonomous vehicles to improved surveillance to creating more engaging experiences in augmented and virtual reality."

Along with Tanmay Randhavane and Aniket Bera, the research team behind this study includes Dinesh Manocha and Uttaran Bhattacharya at the University of Maryland at College Park, as well as Kurt Gray and Kyra Kapsaskis from the psychology department of the University of North Carolina at Chapel Hill.

To train their deep learning model, the researchers have also compiled a new dataset called Emotion Walk (EWalk), which contains videos of individuals walking in both indoor and outdoor settings labeled with perceived emotions. In the future, this dataset could be used by other teams to develop and train new emotion recognition tools designed to analyze movement, posture, and/or gait.

"Our research is at a very primitive stage," Bera said. "We want to explore different aspects of the body language and look at more cues such as facial expressions, speech, vocal patterns, etc., and use a multi-modal approach to combine all these cues with gaits. Currently, we assume that the walking motion is natural and does not involve any accessories (e.g., suitcase, mobile phones, etc.). As part of future work, we would like to collect more data and train our deep-learning model better. We will also attempt to extend our methodology to consider more activities such as running, gesturing, etc."

According to Bera, perceived emotion recognition tools could soon help to develop robots with more advanced navigation, planning, and interaction skills. In addition, models such as theirs could be used to detect anomalous behaviors or walking patterns from videos or CCTV footage, for instance identifying individuals who are at risk of suicide and alerting authorities or healthcare providers. Their model could also be applied in the VFX and animation industry, where it could assist designers and animators in creating virtual characters that effectively express particular emotions.

More information: Identifying emotions from walking using affective and deep features. arXiv:1906.11884 [cs.CV]. arxiv.org/abs/1906.11884

© 2019 Science X Network