RGB Image of a customer. Credit: Tiwari & Bhowmick.

In recent years, some computer scientists have been exploring the potential of deep-learning techniques for virtually dressing 3D digital versions of humans. Such techniques could have numerous valuable applications, particularly for online shopping, gaming and 3D content generation.

Two researchers at TCS Research, India have recently created a deep learning technique that can predict how items of clothing will adapt to a given body shape and thus how they will look on specific people. This technique, presented at the ICCV Workshop, has been found to outperform other existing virtual body clothing methods.

"Online shopping of clothes allows consumers to access and purchase a wide range of products from the comfort of their home, without going to physical stores," Brojeshwar Bhowmick, one of the researchers who carried out the study, told TechXplore. "However, it has one major limitation: It does not enable buyers to try clothes physically, which results in a high return/exchange rate due to clothes fitting issues. The concept of virtual try-on helps to resolve that limitation."

Virtual try-on tools allow people purchasing clothes online to get an idea of how a garment would fit and look on them, by visualizing it on a 3D avatar (i.e., a digital version of themselves). Potential buyers can infer how the item they are thinking of purchasing fits by looking at its folds and wrinkles in various poses or from different angles, as well as at the gap between the avatar's body and the worn garment in the rendered image or video.

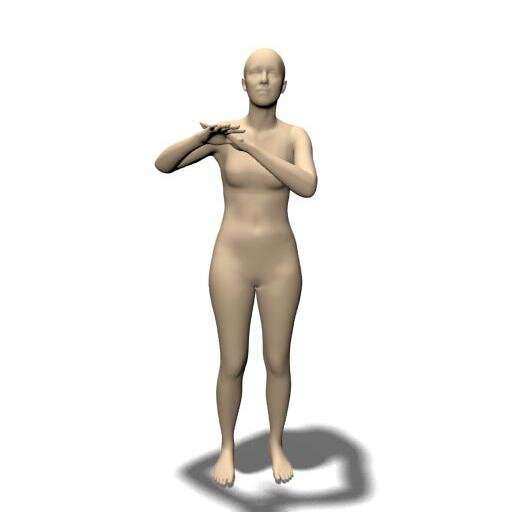

YouTube RGB image and draped image with both T-shirt and pants. Credit: Tiwari & Bhowmick.

Two important factors that a buyer considers when deciding whether to purchase a particular garment are fit and appearance. A virtual try-on setup lets buyers judge both by visualizing the garment on a 3D avatar of themselves, as if they were wearing it.

"Previous work in this area, such as the development of the technique TailorNet, doesn't take the underlying human body measurements into account; thus, its visual predictions are not very accurate, fitting-wise," Bhowmick said. "In addition to that, due to its design, the memory footprint of TailorNet is huge, which restricts its usage in real-time applications with less computational power."

The main objective of the recent study by Bhowmick and his colleague was to create a lightweight system that takes a person's body measurements into account and drapes 3D garments over an avatar that matches those measurements. Ideally, they wanted this system to require little memory and computational power, so that it could run in real time, for instance on online clothing websites.

The estimated 3D body of the same customer in the picture above, derived from the RGB image. Credit: Tiwari & Bhowmick.

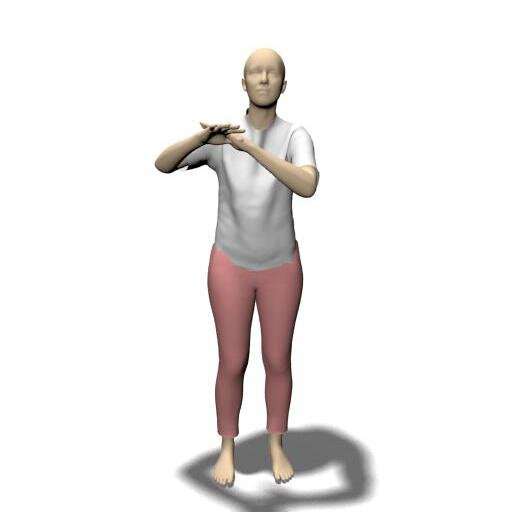

"DeepDraper is a deep learning-based garment draping system that allows customers to virtually try garments from a digital wardrobe onto their own bodies in 3D," Bhowmick explained. "Essentially, it takes an image or a short video clip of the customer, and a garment from a digital wardrobe provided by the seller as inputs."

First, DeepDraper analyzes images or videos of a user to estimate their 3D body shape, pose and body measurements. It then feeds these estimates to a draping neural network that predicts how a garment would look on the user's body, by draping it over a virtual avatar.
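The two-stage flow described above can be pictured with a minimal Python sketch. Every name in it (`BodyEstimate`, `estimate_body`, `drape_garment`, `virtual_try_on`) is hypothetical and is not taken from the DeepDraper paper or its code, and the stand-in functions return fixed or trivially scaled values where the real system would run trained neural networks.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Vertex = Tuple[float, float, float]


@dataclass
class BodyEstimate:
    """Hypothetical container for the output of the body-estimation stage."""
    shape: List[float]              # assumed body-shape coefficients
    pose: List[float]               # assumed joint-angle parameters
    measurements: Dict[str, float]  # assumed measurements, e.g. chest in cm


def estimate_body(image_pixels) -> BodyEstimate:
    """Stage 1 placeholder: a real system would infer these from the image.

    Fixed values are returned here purely for illustration.
    """
    return BodyEstimate(
        shape=[0.0] * 10,
        pose=[0.0] * 72,
        measurements={"chest_cm": 96.0, "waist_cm": 82.0},
    )


def drape_garment(body: BodyEstimate, garment_template: List[Vertex]) -> List[Vertex]:
    """Stage 2 placeholder: a real system would run a draping network.

    Here the garment is only widened or narrowed by a factor derived from
    the chest measurement, to show that the output depends on the body.
    """
    scale = body.measurements["chest_cm"] / 100.0
    return [(x * scale, y, z * scale) for x, y, z in garment_template]


def virtual_try_on(image_pixels, garment_template: List[Vertex]) -> List[Vertex]:
    """Chain the two stages: estimate the body, then drape the garment."""
    body = estimate_body(image_pixels)
    return drape_garment(body, garment_template)
```

The point of the sketch is only the data flow: the garment's final geometry is computed from the estimated body, rather than from the garment alone.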

The researchers evaluated their technique in a series of tests and found that it outperformed other state-of-the-art approaches, as its predictions of how a garment would fit users were more accurate and more realistic. In addition, their system was able to drape garments of any size on human bodies of all shapes and with various characteristics.

Result of DeepDraper, where the team draped the estimated 3D human body with a white T-Shirt and a pink pair of pants. Credit: Tiwari & Bhowmick.

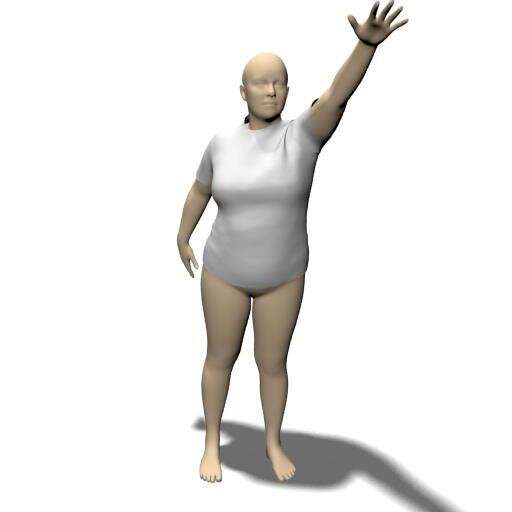

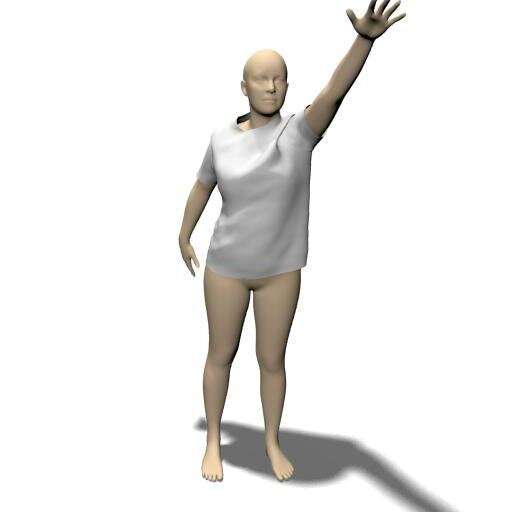

Draping result of a fixed-size T-shirt on two people with varying overall body fat. This picture shows the person with higher body fat; see the following picture to observe the differences in the wrinkles and folds. Credit: Tiwari & Bhowmick.

Draping result of a fixed-size T-shirt on two people with varying overall body fat. This picture shows the person with lower body fat; see the previous picture to observe the differences in the wrinkles and folds. Credit: Tiwari & Bhowmick.

"Another important feature of DeepDraper is that it is very fast and can be supported by low-end devices such as mobile phones or tablets," Bhowmick said. "More precisely, DeepDraper is nearly 23 times faster and nearly 10 times smaller in memory footprint compared to its close competitor TailorNet."

In the future, the virtual garment-draping technique created by this team of researchers could allow clothing and fashion companies to improve their users' experience with online shopping. By allowing potential buyers to get a better idea of how clothes would look on them before purchasing them, it could also reduce requests for refunds or product exchanges. In addition, DeepDraper could be used by game developers or 3D media content creators to dress characters more efficiently and realistically.

"In our next studies, we plan to extend DeepDraper to virtually try on other challenging, loose, and multilayered garments, such as dresses, gowns and T-shirts with jackets," Bhowmick said. "Currently, DeepDraper drapes the garment on a static human body, but we eventually plan to drape and animate the garment consistently as humans move."

More information: DeepDraper: Fast and accurate 3D garment draping over a 3D human body. The Computer Vision Foundation (2021).

© 2021 Science X Network