Personal assistants today can figure out what you are saying, but what if they could infer what you are thinking based on your actions? A team of academic and industrial researchers led by Carnegie Mellon University is working to build artificially intelligent agents with this social skill.

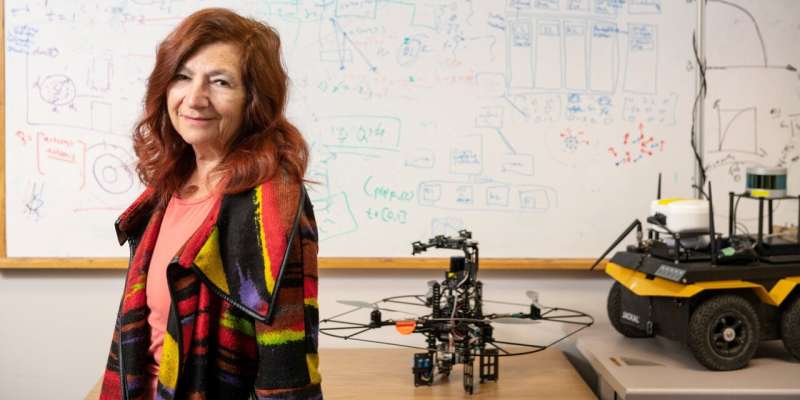

The goal of the four-year, $6.6 million project, led by Katia Sycara of CMU's Robotics Institute and sponsored by the Defense Advanced Research Projects Agency (DARPA), is to use machine social intelligence to help human-and-machine teams work together safely, efficiently and productively. The project includes human factors experts and neuroscientists at the University of Pittsburgh and Northrop Grumman.

"The idea is for the machine to try to infer what people are thinking based on their behavior," said Sycara, a research professor who has spent decades developing software agents. "Is the person confused? Are they paying attention to what is needed? Are they overloaded?" In some cases, the software agent might even be able to determine that a teammate is making mistakes because of misinformation or lack of training, she added.
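As a rough illustration of what inferring a mental state from behavior can look like, the sketch below applies Bayes' rule to a short sequence of observed actions. It is not the project's method: the state names, action names, and probabilities are all invented for this example, and a real system would have to learn such quantities from data.

```python
# Hypothetical illustration only: the states, actions, and probabilities
# below are invented for this sketch and do not come from the project.
STATES = ("attentive", "confused", "overloaded")

# Assumed likelihoods P(action | state); each row sums to 1.
LIKELIHOOD = {
    "attentive":  {"on_task": 0.8, "backtrack": 0.1, "idle": 0.1},
    "confused":   {"on_task": 0.3, "backtrack": 0.6, "idle": 0.1},
    "overloaded": {"on_task": 0.3, "backtrack": 0.2, "idle": 0.5},
}


def update_belief(belief, action):
    """Apply one step of Bayes' rule: posterior is proportional to likelihood times prior."""
    unnormalized = {s: LIKELIHOOD[s][action] * belief[s] for s in STATES}
    total = sum(unnormalized.values())
    return {s: p / total for s, p in unnormalized.items()}


def infer_state(actions):
    """Start from a uniform prior and fold in each observed action in order."""
    belief = {s: 1.0 / len(STATES) for s in STATES}
    for action in actions:
        belief = update_belief(belief, action)
    return belief


# A teammate who backtracks twice before getting back on task.
belief = infer_state(["backtrack", "backtrack", "on_task"])
most_likely = max(belief, key=belief.get)
print(most_likely, round(belief[most_likely], 3))
```

Under these made-up numbers, repeated backtracking shifts most of the probability toward the "confused" state, which is the kind of signal an agent could use to decide whether a teammate needs help.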

Humans have the ability to infer the mental states of others, known as theory of mind. People do this as part of situational awareness while evaluating their environment and considering possible actions. AI systems don't yet have this skill, but Sycara and her colleagues expect to achieve it through meta-learning, a branch of machine learning in which the software agent essentially learns how to learn.

Credit: Carnegie Mellon University

In addition to Sycara, the team includes three co-principal investigators: Changliu Liu, an assistant professor in the Robotics Institute; Michael Lewis, a professor at Pitt's School of Computing and Information; and Ryan McKendrick, a cognitive scientist at Northrop Grumman.

The research team will test their socially intelligent agents in a search-and-rescue scenario within the virtual world of the Minecraft video game, in a testbed developed with researchers at Arizona State University. In the first year, the researchers will focus on training their software agent to infer the state of mind of an individual team member. In subsequent years, the agent will interact with multiple human players and attempt to understand what each of them is thinking, even as their virtual environment changes.

Software agents are autonomous programs that can perceive their environment and make decisions. In addition to digital personal assistants, examples range from the programs that operate self-driving cars to those that cause advertisements to pop up in emails for products in which the user has expressed interest. Agents can also be used to help people with complex tasks, such as scheduling and logistics.

DARPA is sponsoring the project through its Artificial Social Intelligence for Successful Teams (ASIST) program.

Provided by Carnegie Mellon University