Credit: Jung et al.

Virtual reality (VR), augmented reality (AR) and extended reality (XR) technologies allow users to experience digital content in unique and immersive ways. These technologies primarily rely on the use of headsets, glasses or goggles that project images through their lenses, allowing viewers to visually experience simulated environments or see digital elements added onto their surrounding environment.

In recent years, computer scientists and engineers have been trying to develop new tools that could enhance VR, AR and XR technologies by adding other types of sensory stimuli to simulations, such as scents or touch-related feedback. While some of these systems have achieved promising results, they have so far rarely been integrated with existing technologies.

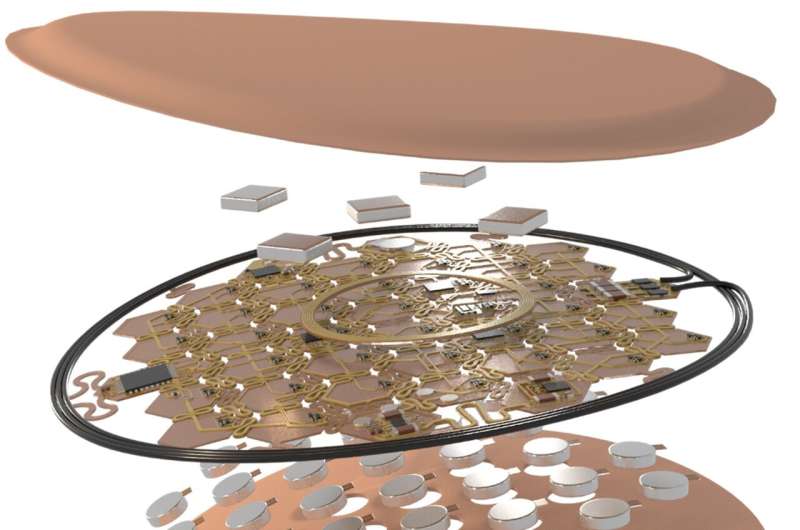

Researchers at Northwestern University, PSYONIC, and Sungkyunkwan University have recently developed a soft, wireless, haptic interface that could be used to add tactile sensations to VR, AR and XR experiences. This system, described in a paper published in Nature Electronics, is based on arrays of vibro-haptic actuators with a density of 0.73 actuators per square centimeter.
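For a rough sense of scale, a density of 0.73 actuators per square centimeter can be converted into an approximate spacing between neighboring actuators. The sketch below assumes, purely for illustration, that the actuators sit on a regular square grid (the device's actual layout may differ); the function names are hypothetical.

```python
import math


def grid_pitch_mm(density_per_cm2: float) -> float:
    """Center-to-center spacing (mm) of a square grid with the given density.

    Assumption: actuators lie on a regular square grid, so each one
    occupies a d x d cell and density = 1 / d**2.
    """
    if density_per_cm2 <= 0:
        raise ValueError("density must be positive")
    pitch_cm = 1.0 / math.sqrt(density_per_cm2)
    return pitch_cm * 10.0  # convert cm to mm


def actuators_in_patch(density_per_cm2: float, area_cm2: float) -> int:
    """Approximate number of actuators covering a skin patch of the given area."""
    return round(density_per_cm2 * area_cm2)


if __name__ == "__main__":
    print(f"{grid_pitch_mm(0.73):.1f} mm")  # spacing at 0.73 actuators/cm^2
    print(actuators_in_patch(0.73, 100.0))  # a 10 cm x 10 cm patch
```

Under the square-grid assumption, neighboring actuators would sit roughly 11.7 mm apart, and a 10 cm by 10 cm patch of skin would carry about 73 of them.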

"Our motivation is to develop technologies that can add full-body haptic experiences to VR/AR/XR environments, as a complement to audio and video," John A. Rogers, one of the researchers who carried out the study, told TechXplore. "Our recent paper describes a much more advanced and manufacturable version of a technology that we first introduced in 2019 in a paper published in Nature."

The new haptic interface developed by Rogers and his colleagues can display vibro-tactile patterns across large areas of the human skin, with its actuators operating either individually or as a coordinated ensemble. To do this, it uses arrays of actuators that produce tactile feedback and vibrations on the skin at densities high enough to fully engage the skin's haptic receptors across all regions of the human body except the hands and face.

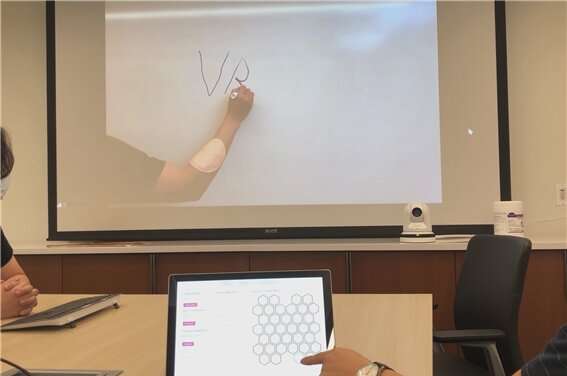

"Our thin, flexible system embeds independently controlled arrays of small vibro-tactile actuators that create fast, spatio-temporal patterns of sensation across the skin," Rogers explained. "Our system can be controlled through a programmed interface coordinated with a VR/AR system or directly through a touchscreen. All of these features are unique, as no other similar technology exists currently."
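To illustrate what a spatio-temporal pattern across independently controlled actuators might look like, the sketch below generates a sequence of frames for a sweep that travels across a grid of actuators, one way a sensation such as wind passing over the skin could be rendered. The grid model, the triangular intensity profile and the name `sweep_frames` are assumptions for illustration only, not the researchers' control software or protocol.

```python
from typing import List

# One frame: a rows x cols grid of drive levels in [0, 1] (hypothetical model).
Frame = List[List[float]]


def sweep_frames(rows: int, cols: int, steps: int, width: float = 1.0) -> List[Frame]:
    """Hypothetical left-to-right sweep across an actuator grid.

    At each time step a "wavefront" sits at some column position; actuators
    near it vibrate strongly, and intensity falls off linearly with distance
    (a triangular profile with half-width `width`).
    """
    if steps < 2:
        raise ValueError("need at least two steps for a sweep")
    if width <= 0:
        raise ValueError("width must be positive")
    frames: List[Frame] = []
    for t in range(steps):
        # Wavefront moves linearly from the first column to the last one.
        pos = t * (cols - 1) / (steps - 1)
        profile = [max(0.0, 1.0 - abs(c - pos) / width) for c in range(cols)]
        # Every row shares the same column profile, so the sweep is a vertical band.
        frames.append([list(profile) for _ in range(rows)])
    return frames


if __name__ == "__main__":
    for frame in sweep_frames(rows=2, cols=5, steps=5):
        print(frame[0])
```

In such a scheme, each frame would be sent to the array at a fixed update rate; a larger `width` spreads the sensation over more actuators at once, while more `steps` makes the sweep slower and smoother.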

The researchers evaluated their haptic interface in a series of tests. They showed that it can effectively translate musical tracks into tactile patterns and that it could also harness the sense of touch to aid the control of robotic prosthetic systems. In addition, the system can be controlled from a variety of devices, including touchscreen smartphones.
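The article does not detail how musical tracks are converted into tactile patterns. One common approach, assumed here purely for illustration, is to split each short audio frame into frequency bands and drive one actuator (or one row of actuators) per band, with vibration strength proportional to that band's energy. The function name `band_levels` and the normalization scheme are hypothetical.

```python
import cmath
import math
from typing import List, Sequence


def band_levels(frame: Sequence[float], n_bands: int) -> List[float]:
    """Map one audio frame to per-band drive levels in [0, 1].

    Hypothetical mapping: band b drives actuator b, and its level is the
    band's spectral energy divided by the strongest band's energy.
    """
    n = len(frame)
    if n < 2 or n_bands < 1:
        raise ValueError("need at least 2 samples and 1 band")
    # Naive DFT magnitudes for bins 1..n//2 (DC skipped); O(n^2), fine for short frames.
    mags = [
        abs(sum(frame[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n)))
        for k in range(1, n // 2 + 1)
    ]
    # Split the bins into n_bands contiguous, roughly equal-width bands.
    energies = []
    for b in range(n_bands):
        lo = b * len(mags) // n_bands
        hi = (b + 1) * len(mags) // n_bands
        energies.append(sum(m * m for m in mags[lo:hi]))
    peak = max(energies)
    if peak == 0.0:
        return [0.0] * n_bands  # silence: all actuators off
    return [e / peak for e in energies]


if __name__ == "__main__":
    # A pure tone at DFT bin 12 of a 64-sample frame falls in band 1 (bins 9..16).
    tone = [math.sin(2 * math.pi * 12 * i / 64) for i in range(64)]
    print(band_levels(tone, 4))
```

Applied frame by frame to a track, this yields a stream of per-actuator levels that follows the music's spectral content; a real system would more likely use an FFT library and perceptually spaced bands.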

In the future, the new wireless haptic interface introduced by Rogers and his colleagues could open exciting new possibilities for the creation of immersive VR, AR and XR experiences. For instance, it could allow users to perceive tactile stimuli associated with different experiences in VR videogames, including the wind blowing on their skin and the sensation of driving or operating other vehicles.

"Touch is one of the most powerful means to communicate with one another and it represents our only mode of physical interaction with the outside world," Rogers added. "We are now adding capabilities that not only replicate normal forces applied to the skin, but also shear, to increase the vibrancy of the sensation. We are also adding mechanisms to locally heat and cool the skin, to complement tactile sensations."

More information: Yei Hwan Jung et al., A wireless haptic interface for programmable patterns of touch across large areas of the skin, Nature Electronics (2022). DOI: 10.1038/s41928-022-00765-3

Xinge Yu et al., Skin-integrated wireless haptic interfaces for virtual and augmented reality, Nature (2019). DOI: 10.1038/s41586-019-1687-0

Journal information: Nature Electronics, Nature

© 2022 Science X Network