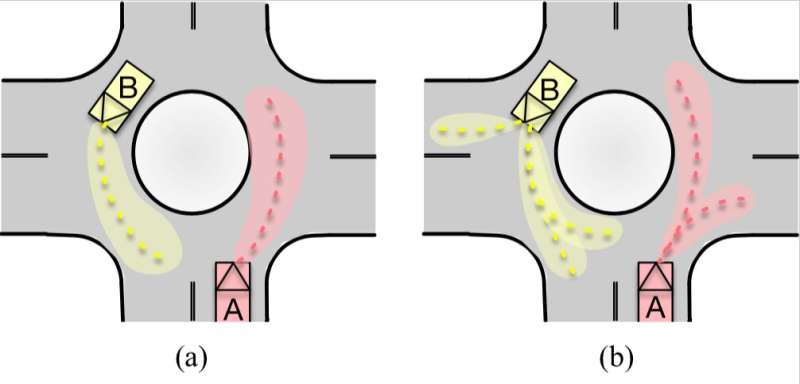

Demonstrations of (a) single-modal and (b) multi-modal predicted distributions. Credit: Hu, Zhan & Tomizuka.

Researchers at the University of California (UC), Berkeley, have recently developed a generative model that can predict the sequential motions of pairs of interacting agents, including self-driving vehicles as well as vehicles with human drivers. Their method, outlined in a paper pre-published on arXiv, is interpretable, which means that it can explain the logic behind its predictions, leading to greater reliability and generalizability.

"For autonomous agents to successfully operate in the real world, the ability to anticipate future motions of surrounding entities in the scene can greatly enhance their safety levels, allowing them to avoid dangerous situations in advance," Yeping Hu, one of the researchers who carried out the study, told TechXplore.

Past studies have achieved remarkable results in predicting the behavior of individual agents or vehicles. According to Hu and her colleagues, however, considering these agents individually is often limiting: in the real world (e.g., on the road), agents typically interact with each other, so their states are coupled. Moreover, as the prediction horizon lengthens, modeling prediction uncertainty and multi-modal distributions over future sequences becomes increasingly challenging.

"In our study, we addressed this challenge by presenting a multi-modal probabilistic prediction approach," Hu said. "The proposed method is based on a generative model and is capable of jointly predicting sequential motions of each pair of interacting agents."

As explained by Wei Zhan, another researcher involved in the study, this joint prediction ultimately makes it possible to predict how other agents will react. It can answer "what if" questions, such as "What would be the possible reactions of others if the host autonomous vehicle takes a specific action in the future?" Such reaction prediction is extremely important for self-driving vehicles in highly interactive driving scenarios.
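To make the "what if" idea concrete, here is a minimal toy sketch in Python. It is not the researchers' model, which is a learned generative network. It assumes, purely for illustration, that the future speeds of the host vehicle and of another vehicle follow a joint Gaussian, and then conditions on a hypothetical host action. The function name and all numbers are invented for this example.

```python
def react_given_host_action(mu_host, mu_other, var_host, var_other, cov, host_action):
    """Condition a two-dimensional Gaussian over (host, other) on the host's action.

    Returns the mean and variance of the other vehicle's response.
    Assumption: a joint Gaussian stands in for the learned joint distribution.
    """
    gain = cov / var_host                       # how strongly "other" tracks "host"
    cond_mean = mu_other + gain * (host_action - mu_host)
    cond_var = var_other - gain * cov           # uncertainty shrinks after conditioning
    return cond_mean, cond_var


# Hypothetical numbers: both vehicles average 5 m/s, and their speeds are
# negatively correlated (when one speeds up, the other tends to yield).
mean, var = react_given_host_action(5.0, 5.0, 4.0, 4.0, -2.0, host_action=7.0)
print(mean, var)  # 4.0 3.0
```

In this toy setting, a host that speeds up to 7 m/s leads to a predicted slowdown of the other vehicle, with less uncertainty than before the host's action was known.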

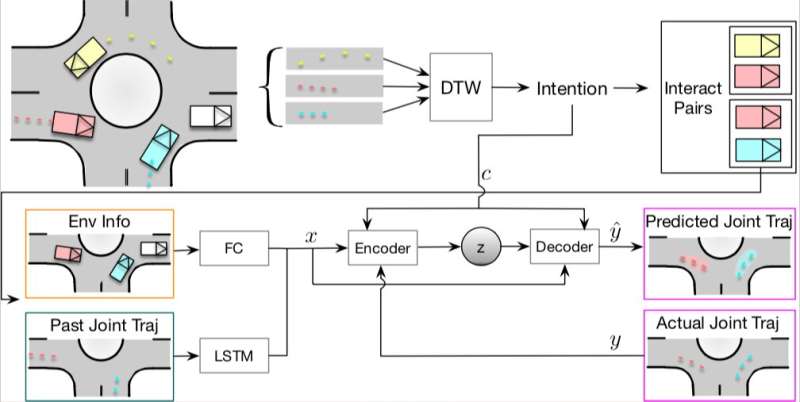

(a) The overall structure of the proposed method. (b) Roundabout map of all reference paths. Credit: Hu, Zhan & Tomizuka.

The model developed by Hu and her colleagues is built around a single core algorithm whose structure resembles that of traditional variational autoencoders (VAEs). In their study, the researchers used their model to predict the interactive behaviors between two vehicles, dubbed A and B.

"Multi-modality can be seen in both discrete and continuous aspects," Hu explained. "There can be many discrete, high-level intentions that are fixed in a human's mind, such as turn left/right or exit at a certain lane in the roundabout scenario. Also, under each intention, there exist several continuous interactions such as different degrees of pass/yield behavior. Therefore, it is necessary to address the multi-modality when we are predicting the future behaviors of other vehicles, which can lead us to more accurate and reasonable prediction results."
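The two levels of multi-modality Hu describes can be illustrated with a small sampling sketch. This is only an illustration under stated assumptions, not the researchers' implementation: the intention names, their probabilities and the "yield degree" scale from 0 (pass) to 1 (yield) are all hypothetical.

```python
import random

# Hypothetical discrete intentions: (probability, mean yield degree, spread).
INTENTIONS = {
    "exit_at_next_lane": (0.5, 0.2, 0.10),
    "stay_in_roundabout": (0.3, 0.7, 0.15),
    "exit_at_later_lane": (0.2, 0.5, 0.20),
}


def sample_behaviors(n, seed=0):
    """Draw n (intention, yield_degree) pairs from a two-level distribution."""
    rng = random.Random(seed)
    names = list(INTENTIONS)
    weights = [INTENTIONS[name][0] for name in names]
    samples = []
    for _ in range(n):
        # Discrete mode: pick a high-level intention.
        intention = rng.choices(names, weights=weights)[0]
        _, mean, spread = INTENTIONS[intention]
        # Continuous mode: pick a pass/yield degree under that intention,
        # clipped to the assumed [0, 1] scale.
        degree = min(1.0, max(0.0, rng.gauss(mean, spread)))
        samples.append((intention, degree))
    return samples


for intention, degree in sample_behaviors(5):
    print(f"{intention}: yield degree {degree:.2f}")
```

A predictor that returned only one average trajectory would blur these modes together, which is why the distribution is sampled rather than summarized by a single mean.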

Real-world motion data from highly interactive driving scenarios is an essential prerequisite for behavior and motion prediction research. The researchers collected large amounts of such data at a complex seven-way roundabout with heavy traffic.

The data they collected was used to train and evaluate the proposed model, yielding highly promising results. Their approach outperformed three alternative models that are commonly used to predict the motion of autonomous agents, namely conditional VAE, multilayer perceptron (MLP) ensemble and Monte Carlo (MC) dropout. In the future, their laboratory will also be publishing a more comprehensive motion dataset.
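For readers unfamiliar with the baselines, the idea behind Monte Carlo dropout can be sketched in a few lines of Python. This is a generic, simplified illustration and not the configuration used in the study: the "network" is a single hypothetical linear layer with made-up weights, and dropout is applied to its inputs. The key point is that dropout stays switched on at prediction time, so repeated passes give a spread of outputs that serves as an uncertainty estimate.

```python
import random
import statistics


def dropout_forward(x, weights, drop_prob, rng):
    """One stochastic pass through a toy linear layer with dropout on its inputs."""
    keep = 1.0 - drop_prob
    total = 0.0
    for xi, wi in zip(x, weights):
        if rng.random() < keep:
            total += xi * wi / keep  # inverted-dropout scaling keeps the expected output unchanged
    return total


def mc_dropout_predict(x, weights, drop_prob=0.5, n_passes=200, seed=0):
    """Run many stochastic passes and return the mean and standard deviation."""
    rng = random.Random(seed)
    preds = [dropout_forward(x, weights, drop_prob, rng) for _ in range(n_passes)]
    return statistics.mean(preds), statistics.pstdev(preds)


# Hypothetical input features and weights.
mean, std = mc_dropout_predict([1.0, 2.0, 3.0], [0.5, -0.25, 0.1])
print(f"prediction {mean:.3f} with spread {std:.3f}")
```

With `drop_prob=0.0` every pass is identical and the spread collapses to zero, which shows that the uncertainty estimate comes entirely from the random dropout masks.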

"In our recent work, we showed the underlying reasoning for the sampling process of the predicted results," Hu said. "Although there is still a long way to go to fully understand these black-box algorithms (i.e. neural networks), we tried to provide some meaningful information of such a black-box algorithm and tried to make the proposed algorithm safe to use. If these prediction algorithms are going to be used in real autonomous vehicles one day, sufficient reasoning behind the prediction algorithm will definitely be necessary."

The model devised by Hu and her colleagues could help to enhance the safety of autonomous vehicles, allowing them to predict interactions between other vehicles on the road. In future studies, Hu plans to address the safety side of the prediction algorithm further, while also trying to make the prediction process more transparent.

More information: Multi-modal probabilistic prediction of interactive behavior via an interpretable model. arXiv:1903.09381v1 [cs.LG]. arxiv.org/abs/1903.09381

© 2019 Science X Network