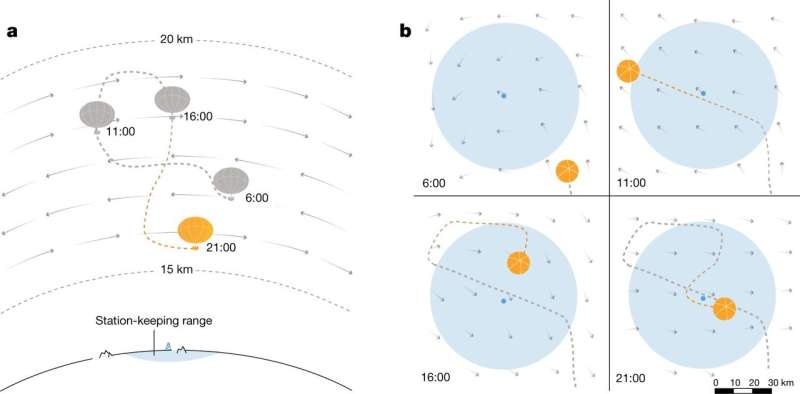

a, Schematic of a superpressure balloon navigating a wind field. The balloon remains close to its station by moving between winds at different altitudes. Its altitude range is indicated by the upper and lower dashed lines. b, The balloon’s flight path, viewed from above. The station and its 50-km range are shown in light blue. Shaded arrows represent the wind field. The wind field constantly evolves, requiring the balloon to replan at regular intervals. Credit: Nature (2020). DOI: 10.1038/s41586-020-2939-8

Google's AI future is up in the air.

But it's a good thing.

Teams at Google's AI division and at Alphabet spinoff Loon are training 'smart' balloons to navigate the chaotic winds tens of thousands of feet up in the air.

It is an interesting paradox that, rather than relying on sophisticated rocket or satellite technology, scientists have in recent years tackled cutting-edge scientific and environmental studies with a device first used by the Chinese Shu Han kingdom nearly two millennia ago.

High-altitude balloons are used to bring connectivity to regions affected by natural disasters, to monitor severe weather events, to study climate change and even to track criminal activity such as sex trafficking or animal poaching.

But keeping these balloons afloat and on course is a daunting endeavor. Extreme weather, shifting winds and rough terrain can create a tough obstacle course for those huge helium-filled bags. Loon engineers developed an algorithm called StationSeeker that helped keep balloons on course. But the notoriously unpredictable shifts of wind currents hampered the algorithm's best efforts. Additionally, when programs must test and retest environmental conditions, valuable energy is used, curbing the amount of time a powered balloon can remain afloat.

Simulation of 125 balloons station keeping in challenging conditions. Simulation of 125 balloons starting from perturbations of a single initial position, either using the learned controller or StationSeeker. The station is denoted by an 'X', and the 50 km range by a dashed line. Unlike StationSeeker, the learned controller is able to remain near the station irrespective of initial conditions, despite a highly challenging wind field. It achieves this by navigating away from the station to avoid strong winds and remain in a relatively calm area, visible from 0:06 into the video. Credit: Nature (2020). DOI: 10.1038/s41586-020-2939-8

In an article published Wednesday in Nature, researchers say they have applied reinforcement learning, a system of rewarding computer actions in optimal pursuit of a specified goal within an unknown environment, to achieve better navigation results. "Reinforcement learning is the science of getting computers to learn from trial and error," said Marc Bellemare of Google's AI division. "With reinforcement learning we are focusing on the decision part. How do we go up or down based on that data? Not only is [the AI controller] making decisions, but making decisions over time."

The new algorithm better predicts wind speed and direction at different altitudes, and raises or lowers the balloons accordingly. As Sal Candido, Loon's chief technology officer, explained, "It's super hard to have the [balloon] network over the people who need connection to the internet and not drifting far away. What the RL [reinforcement learning] is doing for us is deciding what's the situation with the balloon, how much power does it have left, what is the best action that the balloon could do right now to stay over the person with the cellphone in their hand."
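To make the trial-and-error idea concrete, here is a minimal sketch of tabular Q-learning on a toy, one-dimensional version of the station-keeping problem. It is not the controller described in the Nature paper: the three wind layers, the grid size, the reward of 1 for each step spent within range, and every function name below are assumptions made purely for illustration.

```python
import random

# All values below are hypothetical and chosen only for illustration.
WINDS = [-1, 1, 2]      # drift per step at altitude layers 0, 1 and 2
ACTIONS = [-1, 0, 1]    # descend one layer, hold, ascend one layer
X_MAX, RANGE = 5, 1     # half-width of the toy world and the station range


def step(x, layer, action):
    """Change layer, drift with that layer's wind, and score the new position."""
    layer = min(max(layer + action, 0), len(WINDS) - 1)
    x = min(max(x + WINDS[layer], -X_MAX), X_MAX)
    reward = 1.0 if abs(x) <= RANGE else 0.0
    return x, layer, reward


def greedy(q, x, layer):
    """Index of the action with the highest learned value in this state."""
    return max(range(len(ACTIONS)), key=lambda i: q[(x, layer)][i])


def train(episodes=2000, steps=50, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by trial and error (epsilon-greedy Q-learning)."""
    rng = random.Random(seed)
    q = {(x, l): [0.0] * len(ACTIONS)
         for x in range(-X_MAX, X_MAX + 1) for l in range(len(WINDS))}
    for _ in range(episodes):
        x, layer = rng.randint(-X_MAX, X_MAX), rng.randrange(len(WINDS))
        for _ in range(steps):
            explore = rng.random() < epsilon
            a = rng.randrange(len(ACTIONS)) if explore else greedy(q, x, layer)
            nx, nl, r = step(x, layer, ACTIONS[a])
            target = r + gamma * max(q[(nx, nl)])
            q[(x, layer)][a] += alpha * (target - q[(x, layer)][a])
            x, layer = nx, nl
    return q


def time_in_range(q, x=0, layer=1, steps=50):
    """Fraction of steps the greedy policy spends within range of the station."""
    hits = 0.0
    for _ in range(steps):
        x, layer, r = step(x, layer, ACTIONS[greedy(q, x, layer)])
        hits += r
    return hits / steps


if __name__ == "__main__":
    print(time_in_range(train()))
```

No layer in this toy world is calm, so the only way to stay near the station is to alternate between layers whose winds blow in opposite directions, which is the behavior the learned table should settle on.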

Candido said balloons must remain within 30 miles of ground stations to reliably send and receive signals. The new algorithm allowed balloons to remain connected longer and to return to correct coordinates faster than before.
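For readers comparing the 30-mile figure with the 50-km range shown in the paper's figures, the short sketch below does the unit conversion and a simple range check. It assumes flat, two-dimensional coordinates in kilometers, and the function names are hypothetical.

```python
import math

KM_PER_MILE = 1.609344  # exact definition of the international mile


def miles_to_km(miles):
    """Convert a distance in miles to kilometers."""
    return miles * KM_PER_MILE


def within_range(balloon_xy_km, station_xy_km, range_km=50.0):
    """True if the balloon is within range_km of the station (flat-plane assumption)."""
    return math.dist(balloon_xy_km, station_xy_km) <= range_km


if __name__ == "__main__":
    print(round(miles_to_km(30), 2))           # 48.28
    print(within_range((30.0, 39.0), (0, 0)))  # True, about 49.2 km away
```

Thirty miles works out to roughly 48 km, which is consistent with the 50-km station range used in the paper's simulations.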

The new AI controllers were behind a record uninterrupted 312-day flight earlier this year, smashing the old 223-day record set with pre-RL controllers.

The RL algorithm steers the balloons through figure-eight movements to detect the ideal current. Since many regions of the world's atmosphere are not fully monitored for wind direction and speed, the algorithm generated 'noise' to fill in data gaps and, drawing on historical data, constructed the routes most likely to be beneficial.
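One way to picture the gap-filling step is the sketch below, which replaces missing wind readings with a historical average plus random noise. This is only an illustration of the general idea: the use of Gaussian noise, the `noise_std` value, and the function name are assumptions, and the paper's actual noise model may differ.

```python
import random


def fill_wind_gaps(observed, historical_mean, noise_std=2.0, seed=0):
    """Fill missing wind speeds (None) with historical mean plus Gaussian noise.

    Hypothetical helper: observed readings are kept unchanged, and only the
    gaps are replaced by a noisy guess centered on the historical value.
    """
    rng = random.Random(seed)
    filled = []
    for obs, mean in zip(observed, historical_mean):
        if obs is None:
            filled.append(mean + rng.gauss(0.0, noise_std))
        else:
            filled.append(obs)
    return filled


if __name__ == "__main__":
    observed = [5.0, None, 7.5, None]   # wind speed in m/s, None marks a gap
    historical = [4.0, 6.0, 8.0, 3.0]   # long-term averages for the same cells
    print(fill_wind_gaps(observed, historical))
```

Drawing many such noisy wind fields gives a planner or a learning agent a range of plausible conditions to prepare for, rather than a single best guess.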

The Loon team's new algorithm bested calculations formulated earlier by the same team.

"To be frank, we wanted to confirm that by using RL a machine could build a navigation system equal to what we ourselves had built," Candido said. "The learned deep neural network that specifies the flight controls is wrapped with an appropriate safety assurance layer to ensure the agent is always driving safely. Across our simulation benchmark we were able to not only replicate but dramatically improve our navigation system by utilizing RL."

More information: Marc G. Bellemare et al. Autonomous navigation of stratospheric balloons using reinforcement learning, Nature (2020). DOI: 10.1038/s41586-020-2939-8

Journal information: Nature

© 2020 Science X Network