January 15, 2014 feature

Reflections in the eye contain identifiable faces

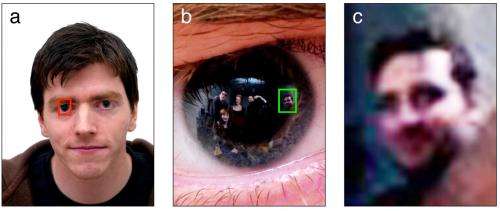

(Phys.org) Eyes are said to be a mirror to the soul, but they may also be a mirror to the surrounding world. Researchers have found that our eyes reflect the people we are looking at in enough detail for those people to be identified. The results could be applied to photographs of crime victims, whose eyes may hold reflections of the perpetrators.

The researchers, Rob Jenkins at the University of York, UK, and Christie Kerr at the University of Glasgow, UK, have published a paper in PLOS ONE on extracting identifiable images of bystanders from corneal reflections. The researchers explained that at first they weren't sure how well the images would turn out.

"Humans can recognize familiar faces from very poor images, so we knew that was on our side," Jenkins told Phys.org. "At the time, we were not sure how much we would be able to recover from the eye reflections. As soon as we saw the first image, we realized we were on to something."

In their study, the researchers used a high-resolution digital camera (a 39-megapixel Hasselblad H2D) and illuminated the room with four carefully positioned Bowens DX1000 flash lamps. The photographer and four volunteer bystanders then stood in a semi-circle around the subject to be photographed.

The resulting photographs have a high resolution of 5,400 x 7,200 pixels, with the subject's iris containing about 54,000 pixels overall. By zooming in on the iris, the researchers could extract several rectangular sections containing the head and shoulders of the reflected bystanders.

The whole face area for each bystander is only about 322 pixels on average. Although these images are fuzzy, previous research has shown that humans can identify faces with extremely poor image quality—as low as 7 x 10 pixels—when they are familiar with the faces.
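To put these figures in proportion, here is a minimal Python sketch that works only from the numbers quoted above. The function and constant names are illustrative and do not come from the paper.

```python
# Pixel figures quoted in the article (names here are illustrative only).
IMAGE_WIDTH, IMAGE_HEIGHT = 5_400, 7_200
IRIS_PIXELS = 54_000            # approximate iris area, per the article
FACE_PIXELS = 322               # average whole-face area of a bystander
MIN_FAMILIAR_FACE = 7 * 10      # lowest resolution cited for familiar faces


def pixel_budget():
    """Return how the quoted pixel counts relate to one another."""
    total = IMAGE_WIDTH * IMAGE_HEIGHT
    return {
        "total_megapixels": total / 1e6,
        "iris_share_percent": 100 * IRIS_PIXELS / total,
        "face_share_of_iris_percent": 100 * FACE_PIXELS / IRIS_PIXELS,
        "face_vs_minimum_ratio": FACE_PIXELS / MIN_FAMILIAR_FACE,
    }


if __name__ == "__main__":
    for name, value in pixel_budget().items():
        print(f"{name}: {value:.4g}")
```

On these numbers, the full frame is about 38.9 megapixels, the iris takes up roughly 0.14% of it, and each reflected face covers well under 1% of the iris, yet still holds several times more pixels than the 7 x 10 minimum cited for familiar faces.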

The researchers found a similar effect here. Volunteers who had not been involved in the photo shoots were shown the low-resolution eye-reflection images and asked whether the person in each image was the same as, or different from, a person in a good-quality photo. They did quite well: accuracy was 71% when the volunteers were unfamiliar with the bystanders' faces and 84% when they were familiar with them. In both cases, accuracy in the face-matching task was well above chance (50%).

A second experiment involved a group of specially selected volunteers who were familiar with only one out of six individuals in a mock line-up of eye reflection images. The volunteers, who were naïve to the purpose of the experiment, were asked if they could identify anyone in the images. Overall, nine out of the 10 volunteers correctly identified the familiar face with high confidence, while altogether they made five incorrect identifications with low confidence.

The researchers pointed out that the method has limitations: it requires high-resolution, in-focus images in which the subject's face is viewed from a roughly frontal angle under good lighting. However, as camera technology is rapidly improving, it may not be long before mobile phones routinely carry cameras with more than 39 megapixels.
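How long "not long" might be depends on the growth rate assumed. As a rough sketch, the Python snippet below assumes pixel counts double every 12 months (the rate Jenkins cites in this article) and takes a purely hypothetical 8-megapixel phone camera as the starting point. The function name and the starting figure are illustrative assumptions, not values from the study.

```python
import math


def years_to_reach(target_mp, start_mp, doubling_years=1.0):
    """Years for a pixel count to grow from start_mp to target_mp,
    assuming it doubles once every `doubling_years` years."""
    if target_mp <= 0 or start_mp <= 0 or doubling_years <= 0:
        raise ValueError("all arguments must be positive")
    # Number of doublings needed, times the length of each doubling period.
    return max(0.0, math.log2(target_mp / start_mp) * doubling_years)


if __name__ == "__main__":
    # Hypothetical starting point: an 8-megapixel phone camera.
    print(f"{years_to_reach(39, 8):.1f} years")
```

Under those assumptions the gap closes in a little over two years. A slower doubling rate stretches the estimate proportionally.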

In the future, the researchers hope that the technique could be extended to combine pairs of images recovered from both of the subject's eyes. The information contained in the two images could be used to reconstruct a 3D representation of the environment from the subject's viewpoint. Interestingly, since the corneal reflections extend beyond the aperture of the pupil, these reconstructions could capture an even wider angle of the scene than was visible to the subject at the time.

"The future prospects are interesting," Jenkins said. "The world is awash with digital images. Over 40 million photos per day are shared via Instagram alone. Meanwhile, the pixel count for digital cameras is doubling roughly every 12 months. So the future holds a lot of detailed images, which is potentially interesting for image analysts."

More information: Rob Jenkins and Christie Kerr. "Identifiable Images of Bystanders Extracted from Corneal Reflections." PLOS ONE. DOI: 10.1371/journal.pone.0083325

© 2014 Phys.org. All rights reserved.