May 29, 2015 weblog

Human in chatbot mode: Interface study explores perceptions

Researchers Kevin Corti and Alex Gillespie of the London School of Economics and Political Science are delving into interesting human interface territory. If a "real" person speaks with chatbot answers, will it affect the other person's perception of what is artificial intelligence and what is not? Would human delivery of a chatbot's words make a difference in how people perceive AI?

Their work has been published this month in the open-access journal Frontiers in Psychology. Their study, "A truly human interface: interacting face-to-face with someone whose words are determined by a computer program," describes experiments that use a technique called speech shadowing.

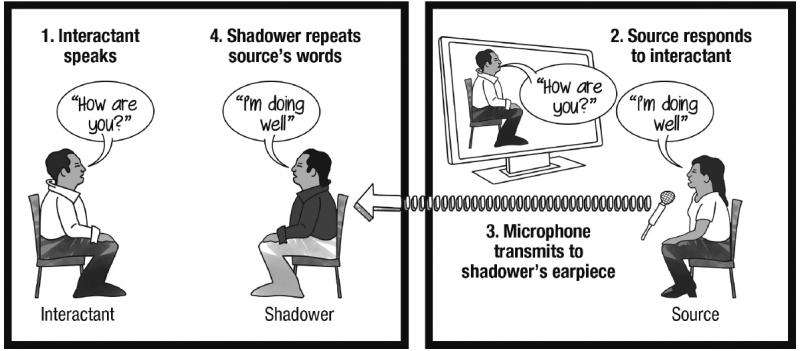

What is speech shadowing? The authors said that speech shadowing has been used as a research tool primarily in psycholinguistics and the study of second-language acquisition. It involves a person (the shadower) voicing the words of an external source in real time, simultaneously as those words are heard. An inner-ear monitor worn by the shadower receives audio from the source.

They demonstrated a methodology for a person to interact face-to-face with a conversational agent, but an agent with a difference: its interface is an actual human body. Toward that end, the authors presented an "echoborg," which enables their study of social interactions with artificial agents that have human interfaces.

The echoborg refers to a human whose words are entirely or partially determined by a computer program. The authors said, "the echoborg stands apart from these other methods in that it involves a real, tangible human as the interface." So what you get is a human front for a chatbot.

Across their experiments, participants (university students, university employees, and people unaffiliated with the university) interacted with echoborgs.

Question explored: To what extent can an echoborg improve a chatbot's ability to pass as human? The interesting possibility is that, under certain social psychological conditions, echoborgs may pass as human beings, with nothing artificial apparent in the mix.

"This opens the doors to a new frontier of human–robot and human–agent interaction research," they said. "Echoborgs can be used to study uncanny valley phenomena. Most of the literature that has explored the uncanny valley has focused on motor behavior and physical resemblance as independent variables."

They performed three studies. First, participants in a Turing Test spoke with a chatbot via either a text interface or an echoborg. "Human shadowing did not improve the chatbot's chance of passing but did increase interrogators' ratings of how human-like the chatbot seemed." In the second study, participants had to decide whether their interlocutor produced words generated by a chatbot or simply pretended to be one. Compared to those who engaged a text interface, participants who engaged an echoborg were more likely to perceive their interlocutor as pretending to be a chatbot. In the third study, participants were naïve to the fact that their interlocutor produced words generated by a chatbot. Unlike those who engaged a text interface, the majority of participants who engaged an echoborg did not sense a robotic interaction.

A Discover blog found it noteworthy how some of the participants felt that something strange was going on when speaking with an echoborg, as if their interlocutor had been acting or giving scripted responses that did not align with their actual persona. Some participants thought the true purpose of the study was "to see how people communicated with those who were shy/introverted." Others thought that the study was about people with autism or a speech impairment.

"In other words," said Discover, "while unsuspecting people are unlikely to guess that an echoborg is a chatbot, they sense that they're not a normal human being."

Cathleen O'Grady in Ars Technica UK said, "the creative method of using echoborgs holds a lot of promise for exploring complex questions in the field of artificial intelligence." Time and further research may reveal if the use of real human bodies affects not only perceptions but interactions with machine intelligence.

More information: A truly human interface: interacting face-to-face with someone whose words are determined by a computer program, Front. Psychol., 18 May 2015. dx.doi.org/10.3389/fpsyg.2015.00634

Abstract

We use speech shadowing to create situations wherein people converse in person with a human whose words are determined by a conversational agent computer program. Speech shadowing involves a person (the shadower) repeating vocal stimuli originating from a separate communication source in real-time. Humans shadowing for conversational agent sources (e.g., chat bots) become hybrid agents ("echoborgs") capable of face-to-face interlocution. We report three studies that investigated people's experiences interacting with echoborgs and the extent to which echoborgs pass as autonomous humans. First, participants in a Turing Test spoke with a chat bot via either a text interface or an echoborg. Human shadowing did not improve the chat bot's chance of passing but did increase interrogators' ratings of how human-like the chat bot seemed. In our second study, participants had to decide whether their interlocutor produced words generated by a chat bot or simply pretended to be one. Compared to those who engaged a text interface, participants who engaged an echoborg were more likely to perceive their interlocutor as pretending to be a chat bot. In our third study, participants were naïve to the fact that their interlocutor produced words generated by a chat bot. Unlike those who engaged a text interface, the vast majority of participants who engaged an echoborg did not sense a robotic interaction. These findings have implications for android science, the Turing Test paradigm, and human–computer interaction. The human body, as the delivery mechanism of communication, fundamentally alters the social psychological dynamics of interactions with machine intelligence.

© 2015 Tech Xplore