September 11, 2017 weblog

Can a photo spot your sexuality? Research points to power of deep neural networks

(Tech Xplore) - Researchers at Stanford have shown how artificial intelligence can be used to infer sexual orientation just by looking at photos. Reports of their work promptly generated long strings of comments from visitors to various sites. The work also triggered concern about what the research and its findings may imply.

Devin Coldewey in TechCrunch called the research "as surprising as it is disconcerting." The story's headline: "AI that can determine a person's sexuality from photos shows the dark side of the data age."

The Economist reported that Michal Kosinski and Yilun Wang, the two researchers, showed that "machine vision can infer sexual orientation by analysing people's faces."

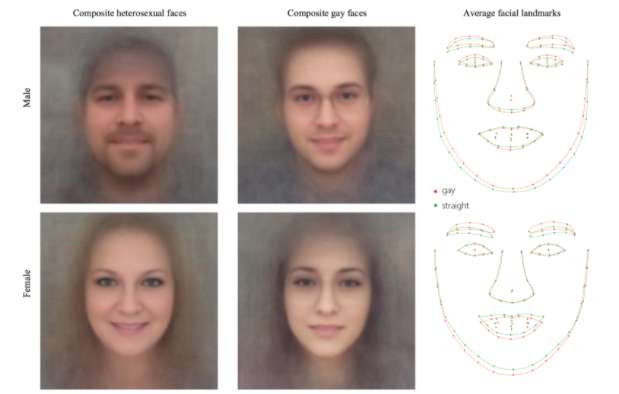

They created a machine learning system that infers from pictures whether a person is gay or straight.

The deep neural networks extracted features from a huge dataset, said TechSpot, identifying certain trends that help the system determine a person's sexuality.

Details of their program, said The Economist, are soon to be published in the Journal of Personality and Social Psychology. They worked with 130,741 images of 36,630 men and 170,360 images of 38,593 women downloaded from a popular American dating website, said the report.

Images were fed into VGG-Face, software that produces a string of numbers to represent each person, said The Economist. Logistic regression was then used to find correlations between features of these faceprints and their owners' sexuality as declared on the dating website.
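For readers curious about the second step, here is a minimal sketch of logistic regression fitted on per-person feature vectors. It is not the researchers' code: the vectors are random synthetic numbers standing in for faceprints, the labels are arbitrary 0/1 values, and the function names (`fit_logistic_regression`, `predict_proba`), the learning rate and the step count are assumptions chosen for illustration.

```python
import numpy as np

def fit_logistic_regression(X, y, lr=0.1, steps=500):
    """Fit weights w and bias b by plain gradient descent on the log loss."""
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))  # predicted probabilities
        w -= lr * (X.T @ (p - y) / n)           # gradient step for the weights
        b -= lr * np.mean(p - y)                # gradient step for the bias
    return w, b

def predict_proba(X, w, b):
    """Probability of label 1 for each row of X."""
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))

# Synthetic stand-in data (assumption): 400 "people", 16 numbers each.
rng = np.random.default_rng(0)
n, d = 400, 16
y = rng.integers(0, 2, size=n).astype(float)
X = rng.normal(size=(n, d))
X[:, 0] += 1.5 * y  # make one feature correlate with the label

w, b = fit_logistic_regression(X, y)
train_accuracy = float(np.mean((predict_proba(X, w, b) > 0.5) == (y == 1)))
```

In this toy setup the regression learns a positive weight on the one feature that carries signal, which is the sense in which it "finds correlations" between the numbers and the labels.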

The results? The model outperformed humans. It did a better job in distinguishing between "gay" and "straight" faces. The Guardian said the Stanford study "found that a computer algorithm could correctly distinguish between gay and straight men 81% of the time."

The Economist discussed the numbers.

When shown one photo each of a gay and a straight man, chosen at random, the model was correct 81% of the time. When shown five photos of each man, it attributed sexuality correctly 91% of the time. The model did not do as well with women. Nonetheless, in both cases, performance was better than the human ability to make the distinctions. With the same images used, humans correctly told gay from straight 61% of the time for men, and 54% of the time for women.
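The "one of each, chosen at random" figure can be read as a pairwise accuracy: the share of cross-group pairs in which the model gives the higher score to the right person. A minimal sketch of that measure follows, along with a toy simulation of why averaging several photos per person can help. All scores here are synthetic, the function name `pairwise_accuracy` is hypothetical, and nothing below reproduces the study's data or numbers.

```python
import numpy as np

def pairwise_accuracy(scores_pos, scores_neg):
    """Fraction of (pos, neg) pairs where the pos score is higher; ties count half."""
    pos = np.asarray(scores_pos, dtype=float)[:, None]
    neg = np.asarray(scores_neg, dtype=float)[None, :]
    return float(np.mean((pos > neg) + 0.5 * (pos == neg)))

# Tiny worked example: 5 of the 6 cross-group pairs are ordered correctly.
example = pairwise_accuracy([0.9, 0.6, 0.4], [0.5, 0.3])

# Toy simulation (assumption): each photo yields a noisy score around a
# group mean, and averaging five photos per person reduces that noise.
rng = np.random.default_rng(1)
people, photos = 500, 5
pos_photos = rng.normal(loc=1.0, scale=1.0, size=(people, photos))
neg_photos = rng.normal(loc=0.0, scale=1.0, size=(people, photos))

single_photo = pairwise_accuracy(pos_photos[:, 0], neg_photos[:, 0])
five_photos = pairwise_accuracy(pos_photos.mean(axis=1), neg_photos.mean(axis=1))
```

In the simulation, `five_photos` comes out higher than `single_photo`, mirroring the direction of the improvement reported above, though not its size.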

Discussions over what to make of this research are as interesting as the results. Issues surface on many levels: whether or not we are to accommodate the biological theory of sexual orientation, of being born one way or the other; whether we are to insist nobody is born one way or another; whether we are to welcome research on what machine learning can do to understand it better in future; or whether this line of research had best be ignored.

Sam Levin in The Guardian: The study raises "questions about the biological origins of sexual orientation, the ethics of facial-detection technology, and the potential for this kind of software to violate people's privacy or be abused for anti-LGBT purposes."

The Economist weighed in:

"The point is not that Dr Kosinski and Mr Wang have created software which can reliably determine gay from straight. That was not their goal. Rather, they have demonstrated that such software is possible."

Levin noted the authors' argument, that the "technology already exists, and its capabilities are important to expose so that governments and companies can proactively consider privacy risks and the need for safeguards and regulations."

It is not as if they set out to build a tool to invade privacy.

Coldewey stated that it should be made clear: the research "was by all indications done with good intentions. In an extensive set of authors' notes that anyone commenting on the topic ought to read, Michal Kosinski and Yilun Wang address a variety of objections and questions. Most relevant are perhaps their remarks as to why the paper was released at all."

Coldewey further remarked that it was "probably better that it's being shown by researchers sympathetic to the people most affected" than by some other party "doing it for their own agenda."

More information: Deep neural networks are more accurate than humans at detecting sexual orientation from facial images, osf.io/fk3xr/

Authors' Note: docs.google.com/document/d/11o … ading=h.hpxf4iyjk0p8

© 2017 Tech Xplore