Microscopists push neural networks to the limit to sharpen fuzzy images

Fluorescence imaging uses laser light to obtain bright, detailed images of cells and even subcellular structures. However, if you want to watch what a living cell is doing, such as dividing into two cells, the laser may fry and kill it. One answer is to use less light, so the cell is not damaged and can carry on with its normal processes. But with so little light, there is not much signal for the microscope to detect, and the result is a faint, blurry mess.

In new work published in the June issue of Nature Methods, a team of microscopists and computer scientists used a type of artificial intelligence called a neural network to obtain clearer pictures of cells at work, even at extremely low, cell-friendly light levels.

The team, led by Hari Shroff, Ph.D., Senior Investigator at the National Institute of Biomedical Imaging and Bioengineering, and Jiji Chen of the trans-NIH Advanced Imaging and Microscopy Facility, calls the process "image restoration." The method addresses the two phenomena that make low-light images fuzzy: low signal-to-noise ratio (SNR) and low resolution (blurriness). To tackle the problem, they trained a neural network to denoise noisy images and deblur blurry images.
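
The two degradations can be made concrete with a toy simulation. This is a minimal sketch, assuming a Gaussian blur as a stand-in for the microscope's point spread function and Poisson (photon shot) noise for the low light; the function names and parameter values are hypothetical and do not come from the study.

```python
import numpy as np


def gaussian_kernel_1d(sigma):
    """Normalized 1D Gaussian; a hypothetical stand-in for the microscope's blur."""
    radius = int(3 * sigma) + 1
    x = np.arange(-radius, radius + 1)
    k = np.exp(-0.5 * (x / sigma) ** 2)
    return k / k.sum()


def blur(image, sigma):
    """Low resolution: separable Gaussian blur with reflected borders (output keeps the input shape)."""
    k = gaussian_kernel_1d(sigma)
    r = len(k) // 2
    padded = np.pad(image, r, mode="reflect")
    # Blur along rows, then along columns; "valid" mode trims the padding back off.
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)


def acquire(clean, photons, sigma, rng):
    """Low SNR: blur, scale to expected photon counts, then draw Poisson shot noise."""
    return rng.poisson(blur(clean, sigma) * photons).astype(float)


def empirical_snr(photons, rng, n=100_000):
    """Mean / standard deviation of a uniformly lit pixel; for Poisson noise this approaches sqrt(photons)."""
    samples = rng.poisson(photons, size=n)
    return samples.mean() / samples.std()


rng = np.random.default_rng(0)
for photons in (400, 4):
    print(photons, "photons/pixel -> SNR ~", round(empirical_snr(photons, rng), 2))
```

Because shot-noise SNR scales with the square root of the photon count, cutting the light 100-fold (400 to 4 photons per pixel) cuts the SNR only 10-fold, from about 20 to about 2, which is still far too noisy to read fine structure.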

So what exactly does training a neural network involve? It means showing a computer program many matched pairs of images. Each pair consists of a clear, sharp image of, say, a cell's mitochondria and a blurry, unrecognizable version of the same mitochondria. By seeing many of these matched sets, the neural network "learns" to predict what a blurry image would look like if it were sharpened. The trained network can then take blurry images acquired at low light levels and convert them into the sharper, more detailed images scientists need to study what is going on in a cell.
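
The train-on-pairs recipe can be illustrated with a deliberately tiny stand-in. In this sketch a single 3×3 linear filter, fitted by least squares, takes the place of the neural network, and the "cells" are synthetic sinusoidal patterns with added Gaussian noise rather than real microscopy data; all function names and parameters are hypothetical. Only the workflow (fit on matched noisy/clean pairs, then apply to an image the model has never seen) carries over to a network like RCAN, which learns a far more flexible, nonlinear mapping by gradient descent.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def make_pair(rng, size=64, noise=0.3):
    """Hypothetical matched pair: a smooth 'clean' pattern and a noisy copy of it."""
    y, x = np.mgrid[0:size, 0:size]
    clean = (np.sin(2 * np.pi * x / 16 + rng.uniform(0, 2 * np.pi))
             * np.cos(2 * np.pi * y / 20 + rng.uniform(0, 2 * np.pi)))
    noisy = clean + rng.normal(0, noise, clean.shape)
    return clean, noisy


def patches(img):
    """Every 3x3 neighbourhood, flattened to a row of 9 pixel values."""
    return sliding_window_view(img, (3, 3)).reshape(-1, 9)


def train(pairs):
    """'Training': find the 9 filter weights that best map noisy patches to clean centre pixels."""
    X = np.concatenate([patches(noisy) for _, noisy in pairs])
    y = np.concatenate([clean[1:-1, 1:-1].ravel() for clean, _ in pairs])
    weights, *_ = np.linalg.lstsq(X, y, rcond=None)
    return weights


def restore(noisy, weights):
    """Apply the learned filter; the 1-pixel border is dropped."""
    h, w = noisy.shape
    return (patches(noisy) @ weights).reshape(h - 2, w - 2)


rng = np.random.default_rng(0)
weights = train([make_pair(rng) for _ in range(50)])
clean, noisy = make_pair(rng)  # an unseen test pair
target = clean[1:-1, 1:-1]
print("noisy MSE:   ", np.mean((noisy[1:-1, 1:-1] - target) ** 2))
print("restored MSE:", np.mean((restore(noisy, weights) - target) ** 2))
```

The held-out test pair matters: a restoration model is only useful if it improves images it was not trained on.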

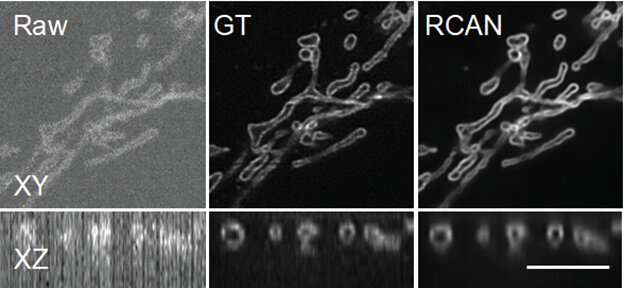

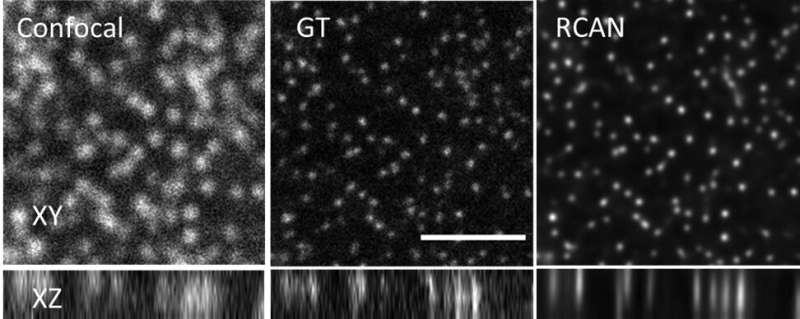

To work on denoising and deblurring 3D fluorescence microscopy images, Shroff, Chen and their colleagues collaborated with a company, SVision (now part of Leica), to refine a particular kind of neural network called a residual channel attention network (RCAN).
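
For readers curious about what "channel attention" refers to, the sketch below shows the generic building block from the original RCAN design for 2D super-resolution, extended here to 3D volumes with NumPy. It is an illustration under stated assumptions, not the study's network: the study's layer counts, kernel sizes and reduction ratio are not given in this article, the 3×3×3 convolutions of a real block are replaced by 1×1×1 (pointwise) channel mixing to keep the code short, biases are omitted, and the weights are random rather than trained.

```python
import numpy as np


def relu(x):
    return np.maximum(x, 0.0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(features, w_down, w_up):
    """Rescale each channel of a (C, Z, Y, X) feature volume by a weight in (0, 1)."""
    # Squeeze: global average pool each channel to one number -> shape (C,).
    pooled = features.mean(axis=(1, 2, 3))
    # Excite: bottleneck C -> C/r -> C; 1x1x1 convs on a pooled vector are just matrix products.
    weights = sigmoid(w_up @ relu(w_down @ pooled))
    return features * weights[:, None, None, None], weights


def conv1x1x1(features, w):
    """Pointwise channel mixing (simplification of the 3x3x3 convs in a real block)."""
    return np.einsum("oc,czyx->ozyx", w, features)


def residual_channel_attention_block(features, params):
    """conv -> ReLU -> conv -> channel attention, plus a skip connection."""
    body = conv1x1x1(relu(conv1x1x1(features, params["conv1"])), params["conv2"])
    body, _ = channel_attention(body, params["down"], params["up"])
    return features + body  # the block only learns a correction to its input


# Usage with hypothetical sizes: 16 channels, reduction ratio 4, an 8x32x32 volume.
rng = np.random.default_rng(0)
C, r = 16, 4
params = {
    "conv1": rng.normal(0, 0.1, (C, C)),
    "conv2": rng.normal(0, 0.1, (C, C)),
    "down": rng.normal(0, 0.1, (C // r, C)),
    "up": rng.normal(0, 0.1, (C, C // r)),
}
volume = rng.normal(size=(C, 8, 32, 32))
print(residual_channel_attention_block(volume, params).shape)
```

The design idea is that the network learns, per feature channel, how much that channel should contribute. Wrapping each block in a skip connection helps very deep stacks of such blocks train well, because each block only has to learn a correction to what it receives.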

In particular, the researchers focused on restoring "super-resolution" image volumes, so called because they reveal the tiny structures that make up a cell in extremely fine detail. Each volume is displayed as a 3D block that can be rotated and viewed from any angle.

The team obtained thousands of image volumes using microscopes in their lab and in other laboratories at NIH. Images taken with very low illumination did not damage the cells, but they were too noisy to use (low SNR). Using RCAN, the researchers denoised these images to produce sharp, accurate, usable 3D images.
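
One common way to put a number on "accurate" is peak signal-to-noise ratio (PSNR), computed against a high-SNR reference volume of the same sample. This is a minimal sketch assuming PSNR as the metric; the article does not say which measures the team used, and the volumes below are random stand-ins.

```python
import numpy as np


def psnr(reference, test):
    """Peak signal-to-noise ratio in dB: 10 * log10(range^2 / MSE), range taken from the reference."""
    reference = np.asarray(reference, dtype=float)
    test = np.asarray(test, dtype=float)
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")  # identical volumes
    data_range = reference.max() - reference.min()
    return 10 * np.log10(data_range ** 2 / mse)


# Hypothetical (Z, Y, X) volumes: a restoration closer to the reference scores higher.
rng = np.random.default_rng(0)
reference = rng.uniform(size=(8, 32, 32))
very_noisy = reference + rng.normal(0, 0.2, reference.shape)
less_noisy = reference + rng.normal(0, 0.05, reference.shape)
print(psnr(reference, very_noisy), psnr(reference, less_noisy))
```

Because PSNR is logarithmic, each 10 dB gain corresponds to a 10-fold drop in mean squared error.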

"We were able to 'beat' the limitations of the microscope by using artificial intelligence to 'predict' the high SNR image from the low SNR image," explained Shroff. "Photodamage in super-resolution imaging is a major problem, so the fact that we were able to circumvent it is significant." In some cases, the researchers were able to enhance spatial resolution several-fold over the noisy data presented to the 3D RCAN.

Another aim of the study was to determine just how messy an image the researchers could give RCAN, challenging it to turn a very low-resolution image into a usable picture. In an "extreme blurring" exercise, the team found that at high levels of experimental blurring, RCAN could no longer decipher what it was looking at or turn it into a usable picture.

"One thing I'm particularly proud of is that we pushed this technique until it 'broke,'" explained Shroff. "We characterized the SNR regimen on a continuum, showing the point at which the RCAN failed, and we also determined how blurry an image can be before the RCAN cannot reverse the blur. We hope this helps others in setting boundaries for the performance of their own image restoration efforts, as well as pushing further development in this exciting field."
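
A back-of-envelope model suggests why any restoration method must eventually break as blur increases. This is a simplified sketch, not the study's analysis: it assumes a Gaussian blur of width sigma (in pixels), whose Fourier transform keeps a fraction exp(-2π²σ²f²) of the contrast at spatial frequency f, and it treats any detail whose remaining contrast falls below the noise level (1/SNR) as lost. The function names are hypothetical.

```python
import math


def gaussian_transfer(f, sigma):
    """Fraction of contrast kept at spatial frequency f (cycles/pixel) after a Gaussian blur of std sigma (pixels)."""
    return math.exp(-2 * math.pi ** 2 * sigma ** 2 * f ** 2)


def smallest_recoverable_period(sigma, snr):
    """Finest period (pixels) whose blurred contrast stays above a 1/snr noise floor.

    Solves exp(-2 pi^2 sigma^2 f^2) = 1/snr for f; assumes snr > 1.
    """
    f_cut = math.sqrt(math.log(snr) / (2 * math.pi ** 2 * sigma ** 2))
    return 1.0 / f_cut


for sigma in (1.0, 2.0, 4.0):
    for snr in (5.0, 50.0):
        print(f"sigma={sigma}, SNR={snr}: finest period ~ {smallest_recoverable_period(sigma, snr):.1f} px")
```

Under these assumptions the finest recoverable period grows in proportion to the blur width and shrinks only slowly (logarithmically) as SNR improves. Past some blur level, the data no longer carry the detail, and a network can only guess at it from what it learned during training rather than reliably recover it.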

More information: Jiji Chen et al, Three-dimensional residual channel attention networks denoise and sharpen fluorescence microscopy image volumes, Nature Methods (2021). DOI: 10.1038/s41592-021-01155-x