Researchers use artificial intelligence to identify potentially unsafe locations in cities

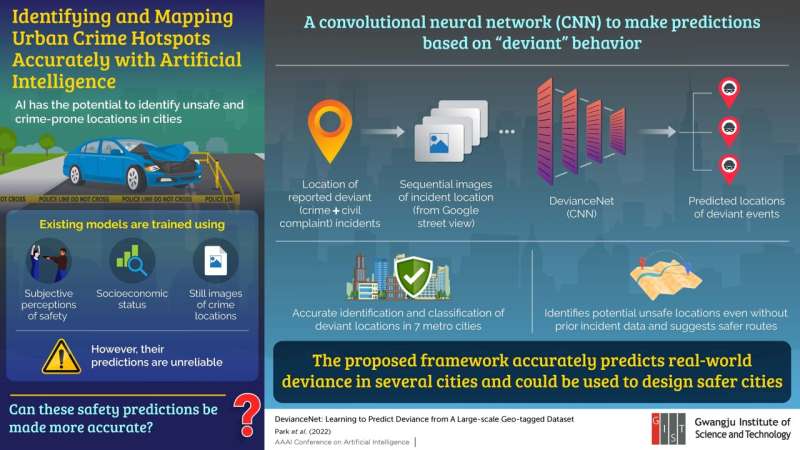

Identifying possible crime hotspots in a city is an important part of urban safety planning and can help authorities take the steps needed to make the city safer for its residents. The effectiveness of such preventive measures depends on the accuracy of the predictions, which are increasingly made by artificial intelligence (AI)-based models. Most existing models rely on subjective perceptions of safety, socioeconomic status, and still images of crime scenes as input data, and they categorize only a few types of violent crime. As a result, their predictions often diverge from reality.

In a new study presented at the AAAI Conference on Artificial Intelligence, researchers from the Gwangju Institute of Science and Technology (GIST) in South Korea proposed a different strategy based on a large-scale dataset and the concept of "deviance." Deviance covers not only violent crimes but also civil complaints about behaviors that violate social norms, also called "deviant behavior."

Accordingly, they developed a convolutional neural network model, aptly called "DevianceNet," and trained it on a geotagged dataset of deviant incident reports paired with sequential images of the incident locations acquired from Google Street View. "Our work is the first study that investigates the relationship between the physical appearance of a city and deviance with deep learning techniques," comments Associate Professor Hae-Gon Jeon, who headed the study.

The researchers collected images from 10 GPS coordinates within a 50 m radius of each reported incident site and, for each GPS location, considered images in 12 directions, for a total of 120 images per incident site. Using data from five major cities in South Korea and two in the USA, they trained and tested their model on 2,250 deviant places and 760,952 images. Such a large dataset enhanced the model's ability to detect possible deviant locations. "This improved visual perception tasks such as recognition, classification, and localization," explains Dr. Jeon. "The holistic representation of DevianceNet extracted from entire image sequences makes it possible to accurately classify and detect deviant places."
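To make the sampling arithmetic concrete, here is a minimal Python sketch of how 10 viewpoints within 50 m of an incident, each with 12 camera headings, yield 120 views. It is an illustration under stated assumptions, not the researchers' pipeline: the article does not say how the 10 coordinates were chosen or how the 12 directions were spaced, so the uniform sampling over a disc, the evenly spaced 30° headings, the function name `sample_viewpoints`, and the example coordinate are all hypothetical.

```python
import math
import random

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, used for a small-offset approximation


def sample_viewpoints(lat, lon, n_points=10, radius_m=50.0, n_headings=12, seed=0):
    """Return (lat, lon, heading_deg) tuples around an incident location.

    Assumptions (not from the article): points are drawn uniformly over a
    disc of radius_m, and headings are evenly spaced over 360 degrees.
    """
    rng = random.Random(seed)
    step = 360.0 / n_headings
    views = []
    for _ in range(n_points):
        # sqrt() makes the samples uniform over the disc's area
        r = radius_m * math.sqrt(rng.random())
        theta = rng.uniform(0.0, 2.0 * math.pi)
        # Convert the metre offsets (north, east) to degree offsets
        dlat = (r * math.cos(theta)) / EARTH_RADIUS_M
        dlon = (r * math.sin(theta)) / (EARTH_RADIUS_M * math.cos(math.radians(lat)))
        p_lat = lat + math.degrees(dlat)
        p_lon = lon + math.degrees(dlon)
        for k in range(n_headings):
            views.append((p_lat, p_lon, k * step))
    return views


# Hypothetical incident coordinate, chosen only for illustration
views = sample_viewpoints(35.0, 127.0)
print(len(views))  # 10 points x 12 headings = 120
```

Each tuple could then be passed to whatever street-level imagery source is available; that retrieval step is deliberately left out here because the article does not describe it.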

Since the model can identify deviant behavior from the visual attributes of the environment, it is not city-specific and can be used to identify potentially unsafe locations even when criminal incident data is not available. "This makes it a useful tool in countries that have poor record keeping. The model can also be integrated into navigational services to suggest safer routes," says Dr. Jeon, speaking on the practical implications of the study. "Additionally, city planners can use the results of the prediction to understand how the city's layout or design environment can be redesigned to lower instances of deviant behavior and criminal activity."

More information: DevianceNet: Learning to Predict Deviance from A Large-scale Geo-tagged Dataset, AAAI Conference on Artificial Intelligence: aaai-2022.virtualchair.net/poster_aisi253