Neural network study harnesses made-to-order design to pair properties to materials

A study led by researchers at the U.S. Department of Energy's Oak Ridge National Laboratory could help make materials design as customizable as point-and-click.

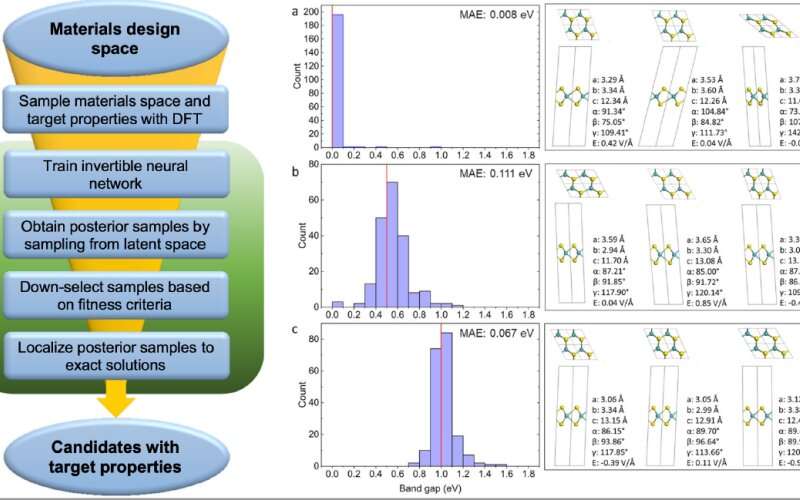

The study, published in npj Computational Materials, used an invertible neural network, a type of artificial intelligence loosely modeled on the human brain, to select the most suitable materials for desired properties, such as flexibility or heat resistance, with high chemical accuracy. The team's findings offer a potential blueprint for customizing scientific design and speeding up the journey from drawing board to production line.

"These results are a really nice first step to expanding capabilities for materials design," said Victor Fung, a Eugene Wigner fellow at ORNL's Center for Nanophase Materials Sciences and lead author of the study. "Instead of taking a material and predicting its given properties, we wanted to choose the ideal properties for our purpose and work backward to design for those properties quickly and efficiently with a high degree of confidence. That's known as inverse design. It's often discussed, but very few concrete demonstrations of inverse design achieve this kind of high accuracy."

Neural networks rely on millions of digital neurons and synapses similar to those in the brain. The neurons can operate independently and don't necessarily perform calculations in traditional ways. An invertible neural network is built so that every input corresponds to exactly one output and every output to exactly one input, a one-to-one pairing known as bijective function approximation.

The inverse design approach used in the study leverages advances in the invertible neural architecture to enable both forward mapping, or computing a result from a given input, and backward mapping, or starting with a result and working back to deduce the initial input.
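To make the forward and backward mapping concrete, here is a minimal sketch of one common invertible building block, an affine coupling layer, written in Python with NumPy. It is an illustration only: the class name `AffineCoupling`, the random weights, and the layer design are assumptions made for this example and are not taken from the study's published code.

```python
import numpy as np


class AffineCoupling:
    """Toy invertible layer (hypothetical, for illustration only).

    The input is split in half. The first half passes through unchanged
    and is used to compute a scale and shift for the second half, which
    makes the whole transformation exactly reversible.
    """

    def __init__(self, dim, seed=0):
        assert dim % 2 == 0, "dim must be even so the input can be split in half"
        rng = np.random.default_rng(seed)
        half = dim // 2
        # Fixed random weights stand in for trained parameters.
        self.w_scale = rng.normal(scale=0.1, size=(half, half))
        self.w_shift = rng.normal(scale=0.1, size=(half, half))

    def _scale_shift(self, x1):
        # tanh keeps the log-scale bounded so exp() stays well behaved.
        log_s = np.tanh(x1 @ self.w_scale)
        t = x1 @ self.w_shift
        return log_s, t

    def forward(self, x):
        """Forward mapping: input -> output."""
        x1, x2 = np.split(x, 2, axis=-1)
        log_s, t = self._scale_shift(x1)
        y2 = x2 * np.exp(log_s) + t
        return np.concatenate([x1, y2], axis=-1)

    def inverse(self, y):
        """Backward mapping: output -> the input that produced it."""
        y1, y2 = np.split(y, 2, axis=-1)
        # y1 equals x1, so the same scale and shift can be recomputed.
        log_s, t = self._scale_shift(y1)
        x2 = (y2 - t) * np.exp(-log_s)
        return np.concatenate([y1, x2], axis=-1)


layer = AffineCoupling(dim=4)
x = np.array([[0.5, -1.0, 2.0, 0.25]])
y = layer.forward(x)
x_recovered = layer.inverse(y)
roundtrip_ok = bool(np.allclose(x, x_recovered))
```

Because the first half of the input is left untouched, the backward pass can recompute the same scale and shift and undo the forward step exactly, which is what makes the mapping one-to-one.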

"Traditional algorithms can be very computationally intensive, and they can't guarantee the best design," said Jiaxin Zhang, an AI scientist at ORNL and coauthor of the study. "We have such a range of possible materials that the search space is huge. But we can generate a smaller batch of samples from existing data and use that to represent the whole search space. We can use those samples to train the neural network, give it a specific desired result, and let it explore all possible candidates. The model learns and delivers more accurate results as it goes."
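The workflow Zhang describes (sample the search space, train a model on the samples, then ask it for candidates that match a desired result) can be sketched in a few lines of Python. This is a simplified stand-in under stated assumptions, not the team's method: `toy_property` is a made-up function rather than a real material model, and a polynomial fit plus a grid search replaces the invertible neural network.

```python
import numpy as np


def toy_property(x):
    # Hypothetical stand-in for an expensive simulation.
    # The coefficients are invented for illustration, not measured data.
    return 1.8 - 0.15 * x + 0.01 * x**2


# 1. Draw a small batch of samples from the design space.
rng = np.random.default_rng(0)
samples = rng.uniform(-5.0, 5.0, size=200)
values = toy_property(samples)

# 2. Fit a cheap surrogate model to the samples
#    (a quadratic polynomial here, instead of a neural network).
surrogate = np.poly1d(np.polyfit(samples, values, deg=2))

# 3. Specify a desired result and search candidates for the best match.
target = 1.5
candidates = np.linspace(-5.0, 5.0, 2001)
errors = np.abs(surrogate(candidates) - target)
best = candidates[np.argmin(errors)]
```

An invertible network avoids the explicit search in step 3, since its backward mapping returns candidate inputs for a target directly.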

The team trained the neural network on data from a total of 11,000 quantum chemistry calculations performed on ORNL's Compute and Data Environment for Science and the National Energy Research Scientific Computing Center at Lawrence Berkeley National Laboratory. The team used the neural network to determine the strain that must be applied to molybdenum disulfide, a 2D material, to produce a specified bandgap, the range of energies that electrons in the material cannot occupy and that limits electrical conductivity. The model was able to specify nearly the exact applied strain needed to tune the bandgap of the material, which allowed further investigation into its metal-insulator transition, the change from high electrical conductivity to low electrical conductivity.

"We reached near chemical accuracy," Fung said. "In terms of this particular application, it's definitely a first for this kind of inverse design. This is a general approach with a broad range of applications that's easy to train and easily scalable, and we're quite confident it's the best model for this type of problem currently."

The team has made the neural network code publicly available and hopes to run additional studies using Summit, ORNL's 200-petaflop supercomputing system, or Frontier, the forthcoming exascale supercomputing system, to further refine the approach. Summit and Frontier are part of the Oak Ridge Leadership Computing Facility, a DOE Office of Science user facility.

"The more computing resources available, the more samples we can use to train the model," Zhang said. "We're excited to leverage this experience to explore other materials and designs."

More information: Victor Fung et al., Inverse design of two-dimensional materials with invertible neural networks, npj Computational Materials (2021). DOI: 10.1038/s41524-021-00670-x