November 24, 2022 report

Mimicking human sleep as a way to prevent catastrophic forgetting in AI systems

A trio of researchers from the University of California, working with a colleague from the Institute of Computer Science of the Czech Academy of Sciences, has found that it is possible to prevent catastrophic forgetting in AI systems by having such systems mimic human REM sleep.

In their paper published in PLOS Computational Biology, Ryan Golden, Jean Erik Delanois, Maxim Bazhenov and Pavel Sanda describe teaching artificial intelligence systems to remember what was learned from an initial task while working on a second task.

Prior research has shown that people experience something called consolidation of memory during REM sleep. It is a process whereby recent experiences are moved to long-term memory to make room for new ones. Without such a process, the brain undergoes catastrophic forgetting, where memories of recent things are not retained.

This can be seen in some older people who lose the ability to sleep well, and thus find themselves able to remember things from the distant past but not things that happened in recent days. In this new effort, the researchers have found that something similar can be used to help AI systems keep what has been learned in the past while learning about new things.

AI systems have become well known for their ability to master a single domain; one can be trained to become a chess master, for example. But getting AI systems to master more than one domain has proved challenging.

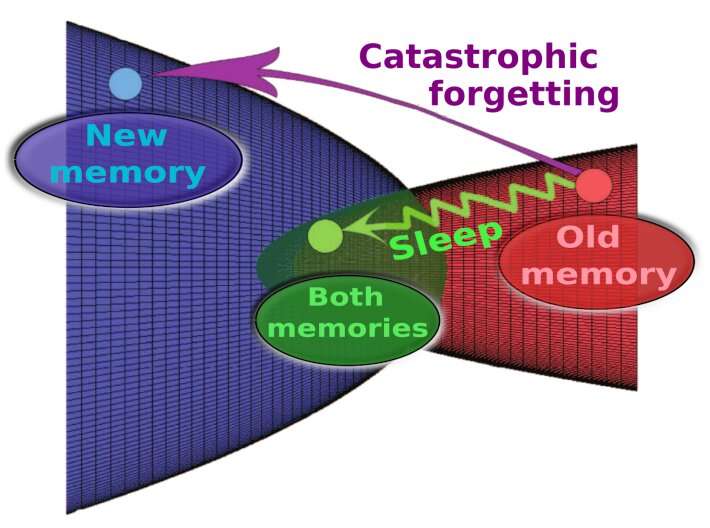

This is because, the researchers explain, new learning tends to come at the expense of old learning. As a system learns more in a new area, more of its old memories are lost until they disappear altogether. To overcome this problem, the researchers looked to how the human brain handles similar situations.

The researchers first built an AI system that was taught one task and then another. They found, as expected, that as it became better at the second task, it lost abilities in the first. They then added code that mimicked REM sleep in the human brain, essentially giving the system the ability to intersperse sleep phases with work phases. This allowed the system to hold on to older memories as new ones were being processed, which helped to prevent catastrophic forgetting.
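The general idea of interspersing phases can be illustrated with a toy example. The sketch below is not the researchers' method: it uses a two-weight linear model rather than a spiking neural network, and its "sleep" phase is plain rehearsal of a stored task-A example, a much simpler stand-in for the sleep-like process the paper describes. All function and variable names are hypothetical.

```python
# Toy illustration only: a two-weight linear model, y = w[0]*x[0] + w[1]*x[1].
# Assumption: the "sleep" phase is simple rehearsal of task A, used here as a
# stand-in; it is NOT the spiking-network mechanism from the paper.

def predict(w, x):
    return w[0] * x[0] + w[1] * x[1]

def loss(w, data):
    """Mean squared error over a list of (input, target) pairs."""
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)

def sgd_steps(w, data, steps, lr=0.1):
    """Run `steps` passes of gradient descent over `data`; return new weights."""
    w = list(w)
    for _ in range(steps):
        for x, y in data:
            err = predict(w, x) - y
            w[0] -= lr * 2 * err * x[0]
            w[1] -= lr * 2 * err * x[1]
    return w

task_a = [((1.0, 1.0), 1.0)]  # solved when w[0] + w[1] == 1
task_b = [((1.0, 0.0), 0.0)]  # solved when w[0] == 0
# Weights (0, 1) solve both tasks, so the model has room for both.

# Sequential training: learn task A, then train only on task B.
w_seq = sgd_steps([0.0, 0.0], task_a, steps=200)
w_seq = sgd_steps(w_seq, task_b, steps=200)

# Interleaved training: learn task A, then alternate short work phases
# on task B with "sleep" phases that revisit task A.
w_sleep = sgd_steps([0.0, 0.0], task_a, steps=200)
for _ in range(200):
    w_sleep = sgd_steps(w_sleep, task_b, steps=1)  # work phase
    w_sleep = sgd_steps(w_sleep, task_a, steps=1)  # sleep phase (stand-in)

print("sequential  loss A, B:", loss(w_seq, task_a), loss(w_seq, task_b))
print("interleaved loss A, B:", loss(w_sleep, task_a), loss(w_sleep, task_b))
```

In this toy setting, the sequential run ends with a low error on task B but a clearly higher error on task A, while the interleaved run settles on weights that serve both tasks. That shared solution is loosely analogous to the "joint synaptic weight representation" named in the paper's title.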

More information: Sleep prevents catastrophic forgetting in spiking neural networks by forming a joint synaptic weight representation, PLOS Computational Biology (2022). journals.plos.org/ploscompbiol … journal.pcbi.1010628

© 2022 Science X Network