October 10, 2015 weblog

Deep-learning robot shows grasp of different objects

Robot researchers have had much success in getting robots to walk and run, but another challenge has persisted for years: getting robots to pick up and hold on to objects successfully. An international workshop on autonomous grasping and manipulation last year spoke of "new algorithms for selecting, executing, and evaluating grasps. In parallel to the progress made on the software level, new robot hands and sensors have also been designed for operating in everyday environments."

Sounds promising. So where are we now? MIT Technology Review said, in 2015, "robotic grasping skills are embarrassingly poor. Ask a robot to pick up a TV remote or a bottle of water or a toy gun and it will endlessly fumble with it—unless specifically programmed to pick up that object in a specially controlled environment."

In that light, it is little surprise that tech-watching sites have taken an interest in recent work by two researchers, Lerrel Pinto and Abhinav Gupta, of the Robotics Institute at Carnegie Mellon University. Their paper, "Supersizing Self-Supervision: Learning to Grasp from 50K Tries and 700 Robot Hours," has been posted on arXiv.

The authors of the paper, too, assess the state of affairs: "Object manipulation is one of the oldest problems in the field of robotics."

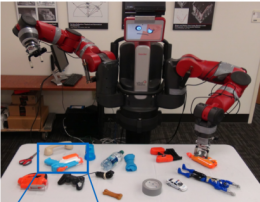

They presented a robot that teaches itself how to grasp. Baxter, best known as an industrial robot cut out for repetitive tasks such as line loading and material handling, was put in an "unstructured environment," which, as Jamie Condliffe of Gizmodo put it, is "otherwise known as a table full of junk."

They said they took the leap of increasing the training data to 40 times that of prior work, giving a dataset of 50K data points collected over 700 hours of robot grasping attempts. This allowed them to train a convolutional neural network (CNN) to predict grasp locations without "severe overfitting."
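For a sense of scale, the two headline figures can be turned into an average pace. The short Python calculation below is only back-of-the-envelope arithmetic on the numbers quoted above (50K tries, 700 robot hours); the variable names are illustrative, and the paper itself may report the pace differently.

```python
# Figures quoted in the article: 50K grasp attempts over 700 robot hours.
TRIES = 50_000
ROBOT_HOURS = 700

# Average time per attempt and attempts per hour, assuming the 700 hours
# cover nothing but grasp attempts (a simplifying assumption).
seconds_per_try = ROBOT_HOURS * 3600 / TRIES
tries_per_hour = TRIES / ROBOT_HOURS

print(f"about {seconds_per_try:.1f} seconds per try")
print(f"about {tries_per_hour:.1f} tries per hour")
```

On those assumptions, the robot averaged a little under one attempt per minute.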

They stated, "In this paper, we break the mold of using manually labeled grasp datasets for training grasp models. We believe such an approach is not scalable. Instead, inspired by reinforcement learning (and human experiential learning), we present a self-supervising algorithm that learns to predict grasp locations via trial and error."
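The idea of letting trial and error supply the labels can be illustrated with a toy simulation. The Python sketch below is not the authors' code: the `attempt_grasp` function is a hypothetical stand-in for a real robot trial, and the success thresholds, coordinate ranges, and record fields are all assumptions made for illustration. What it shows is only the general pattern, in which each attempt records the grasp that was tried along with whether it worked, so no human has to label anything.

```python
import random


def attempt_grasp(x, y, angle_deg, obj):
    """Hypothetical stand-in for a physical grasp attempt.

    Succeeds when the gripper is near the object's centre and roughly
    aligned with it. The thresholds are arbitrary illustrative values.
    """
    obj_x, obj_y, obj_angle = obj
    close_enough = abs(x - obj_x) < 0.05 and abs(y - obj_y) < 0.05
    # A parallel gripper is symmetric, so angles are compared modulo 180.
    angle_error = abs((angle_deg - obj_angle + 90) % 180 - 90)
    return close_enough and angle_error < 20


def collect_trials(n_trials, seed=0):
    """Run random grasp attempts and record each outcome as a label."""
    rng = random.Random(seed)
    dataset = []
    for _ in range(n_trials):
        # A random object pose on a unit-square "table" (assumed setup).
        obj = (rng.uniform(0, 1), rng.uniform(0, 1), rng.uniform(0, 180))
        # Sample a grasp somewhere near the object, at a random angle.
        x = obj[0] + rng.uniform(-0.1, 0.1)
        y = obj[1] + rng.uniform(-0.1, 0.1)
        angle_deg = rng.uniform(0, 180)
        success = attempt_grasp(x, y, angle_deg, obj)
        # The outcome of the trial itself is the training label.
        dataset.append(
            {"x": x, "y": y, "angle_deg": angle_deg, "success": success}
        )
    return dataset


trials = collect_trials(1000)
successes = sum(t["success"] for t in trials)
print(f"{successes} successful grasps out of {len(trials)} tries")
```

In a real system, records like these would be paired with camera images and used to train a model; the toy version above stops at data collection.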

In brief, the value of their work lies in demonstrating that large-scale trial-and-error experiments in robotic grasping are now possible. "Unlike traditional grasping datasets/experiments which use a few hundred examples for training, we increase the training data 40x and collect 50K tries over 700 robot hours. Because of the scale of data collection, we show how we can train a high-capacity convolutional network for this task."

Looking ahead, MIT Technology Review suggested that "perhaps the ultimate test for Baxter will be the toothpaste challenge," which it described as "the successful placement of a pea-sized blob of toothpaste on a toothbrush." At the rate robots such as Baxter are learning, the article added, "it won't be long before humans lose their mastery of toothpaste world, just as they lost their mastery of the chess world almost 20 years ago."

More information: Supersizing Self-supervision: Learning to Grasp from 50K Tries and 700 Robot Hours. arxiv.org/abs/1509.06825

© 2015 Tech Xplore