June 15, 2016 weblog

Researchers explore decoding faces from neural activity

(Tech Xplore)—What can technology accomplish in reconstructing a face? Scientists have come up with surprising results. Yale Daily News in 2014 reported on a study about how, with a brain scan, a face is reconstructed—neuroscience research providing us "with a way to see the world through other people's eyes."

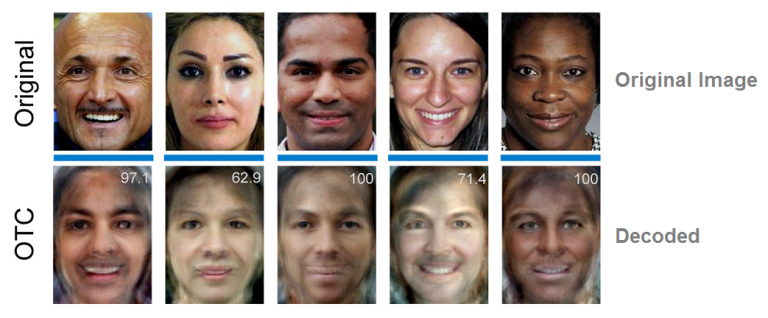

Fast-forward to now, and an interesting article has been published in The Journal of Neuroscience: "Reconstructing Perceived and Retrieved Faces from Activity Patterns in Lateral Parietal Cortex," by Hongmi Lee and Brice A. Kuhl. The two authors showed that, in their words, "visually perceived faces were reliably reconstructed from activity patterns in occipitotemporal cortex and several lateral parietal subregions, including ANG." The angular gyrus (ANG) is a subregion of the posterior lateral parietal cortex.

Discover headlined its report of the study with "Decoding faces from the brain." Discover found the paper "fascinating" in how the work explored decoding faces from neural activity. "Armed with a brain scanner, they can reconstruct which face a participant has in mind. It's a cool technique that really seems to fit the description of 'mind reading' – although the method's accuracy is only modest."

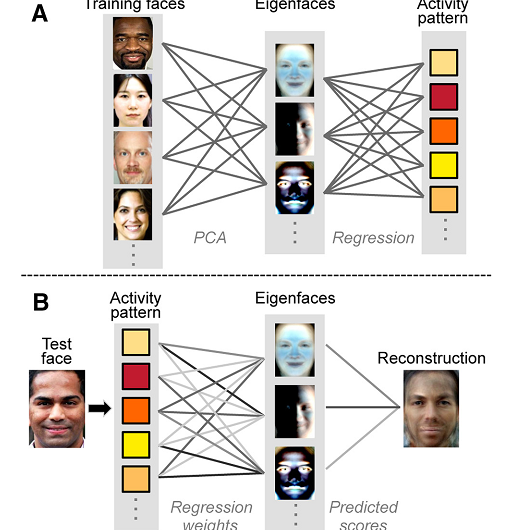

Lee and Kuhl used fMRI (functional magnetic resonance imaging) scans, during which participants were shown face images and their neural responses were recorded. "The set of faces was then decomposed into 300 eigenfaces using the technique of principal component analysis," said Discover.
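For readers curious what decomposing a set of faces into eigenfaces looks like in practice, here is a minimal sketch in Python with NumPy. It is not the authors' code: the function name `compute_eigenfaces` is hypothetical, the "images" are random pixel arrays standing in for real face photos, and it keeps 10 components rather than the 300 used in the study.

```python
import numpy as np


def compute_eigenfaces(images, n_components):
    """Principal component analysis on flattened images.

    images: array of shape (n_images, n_pixels), one flattened image per row.
    Returns the mean face, the top eigenfaces (one per row), and each
    image's score on every eigenface.
    """
    mean_face = images.mean(axis=0)
    centered = images - mean_face
    # The rows of vt are the principal axes of the centered data,
    # ordered from most to least variance explained.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    eigenfaces = vt[:n_components]
    scores = centered @ eigenfaces.T
    return mean_face, eigenfaces, scores


# Assumption: 50 random 16x16 "images" stand in for a real face set.
rng = np.random.default_rng(0)
images = rng.random((50, 16 * 16))

mean_face, eigenfaces, scores = compute_eigenfaces(images, n_components=10)

# Any image can be approximated as the mean face plus a weighted sum
# of eigenfaces, with the weights given by that image's scores.
approximation = mean_face + scores[0] @ eigenfaces
```

Each face is thus summarized by a short list of numbers (its eigenface scores), which is what makes the later step of predicting faces from brain activity tractable.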

"As well as reconstructing faces from neural activity during perception of the faces (which has been done before), Lee and Kuhl examined neural activity when faces weren't actually on the screen at all. Participants were given a face recall task, in which they had to hold a face in memory."

Commenting on their work, the authors reviewed their approach and its significance. They said, "we used an innovative form of fMRI pattern analysis to test whether lateral parietal cortex actively represents the contents of memory. Using a large set of human face images, we first extracted latent face components (eigenfaces). We then used machine learning algorithms to predict face components from fMRI activity patterns and, ultimately, to reconstruct images of individual faces."
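The pipeline the authors describe (extract eigenfaces, predict face components from activity patterns, then rebuild a face) can be sketched on synthetic data. The article does not say which machine learning algorithm was used, so this example swaps in plain ridge regression as an assumption. The voxel patterns are simulated as a noisy linear mixture of eigenface scores, and every name and number here (`fit_ridge`, `simulate_voxels`, the array sizes) is hypothetical.

```python
import numpy as np


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)


rng = np.random.default_rng(0)
n_train, n_test, n_pixels, n_voxels, n_components = 80, 20, 64, 30, 5

# Assumption: random pixel arrays stand in for face images.
faces = rng.random((n_train + n_test, n_pixels))
train_faces, test_faces = faces[:n_train], faces[n_train:]

# Step 1: eigenfaces from the training faces only.
mean_face = train_faces.mean(axis=0)
_, _, vt = np.linalg.svd(train_faces - mean_face, full_matrices=False)
eigenfaces = vt[:n_components]
train_scores = (train_faces - mean_face) @ eigenfaces.T
test_scores = (test_faces - mean_face) @ eigenfaces.T

# Assumption: simulated "fMRI" patterns are a linear mixture of the
# eigenface scores plus Gaussian noise. Real data would come from a scanner.
mixing = rng.normal(size=(n_components, n_voxels))


def simulate_voxels(scores):
    noise = 0.1 * rng.normal(size=(len(scores), n_voxels))
    return scores @ mixing + noise


train_voxels = simulate_voxels(train_scores)
test_voxels = simulate_voxels(test_scores)

# Step 2: learn a mapping from voxel patterns to eigenface scores.
voxel_mean = train_voxels.mean(axis=0)
weights = fit_ridge(train_voxels - voxel_mean, train_scores, alpha=1.0)

# Step 3: predict scores for held-out patterns and rebuild the faces.
predicted_scores = (test_voxels - voxel_mean) @ weights
reconstructions = mean_face + predicted_scores @ eigenfaces

# Compare against a baseline that always predicts the mean face (score 0).
mse_model = np.mean((predicted_scores - test_scores) ** 2)
mse_baseline = np.mean(test_scores ** 2)
```

Comparing `mse_model` with `mse_baseline` is one simple way to check that the predicted components carry face-specific information rather than just the average face.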

They showed that activity patterns in a subregion of the lateral parietal cortex (angular gyrus) supported successful reconstruction of perceived and remembered faces.

In its 2014 report, the Yale Daily News described "eigenfaces" as derivatives of normal faces that are manipulated to highlight basic components of the original face.

ZME Science, in reporting on the present study, said that "these eigenfaces act like statistical patterns that can be used as anchors to match a request."

ZME Science also commented that "Researchers are still working in rough, uncharted terrain. By analyzing more neural patterns, say from thousands of participants, and fine tweaking their reconstruction algorithms, it's foreseeable that these reconstructions could become a lot better."

In 1991, Matthew Turk and Alex Pentland authored a paper, "Eigenfaces for recognition," in which they said, "We have developed a near-real-time computer system that can locate and track a subject's head, and then recognize the person by comparing characteristics of the face to those of known individuals. The computational approach taken in this system is motivated by both physiology and information theory, as well as by the practical requirements of near-real-time performance and accuracy." They also said that "Some particular advantages of our approach are that it provides for the ability to learn and later recognize new faces in an unsupervised manner, and that it is easy to implement using a neural network architecture."
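The idea of recognizing a person by comparing face characteristics to those of known individuals can be illustrated with a small nearest-neighbor sketch in eigenface space. This is a simplified illustration under stated assumptions, not Turk and Pentland's system: there is no head tracking, the gallery is random data with one image per person, and the helper names `project` and `recognize` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n_people, n_pixels, n_components = 6, 100, 4

# Assumption: one flattened random "image" per known individual.
gallery = rng.random((n_people, n_pixels))

# Build eigenfaces from the gallery.
mean_face = gallery.mean(axis=0)
_, _, vt = np.linalg.svd(gallery - mean_face, full_matrices=False)
eigenfaces = vt[:n_components]


def project(image):
    """Describe an image (or a stack of images) by its eigenface weights."""
    return (image - mean_face) @ eigenfaces.T


gallery_weights = project(gallery)


def recognize(image):
    """Return the index of the closest known face and its distance."""
    distances = np.linalg.norm(gallery_weights - project(image), axis=1)
    return int(np.argmin(distances)), float(distances.min())


# A slightly noisy copy of person 3 should still match person 3.
probe = gallery[3] + 0.01 * rng.normal(size=n_pixels)
identity, distance = recognize(probe)
```

A practical system would also set a distance threshold so that faces far from every known individual are reported as unknown instead of being forced onto the nearest match.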

More information: Reconstructing Perceived and Retrieved Faces from Activity Patterns in Lateral Parietal Cortex, The Journal of Neuroscience, 1 June 2016, 36(22): 6069-6082; DOI: 10.1523/JNEUROSCI.4286-15.2016

Abstract

Recent findings suggest that the contents of memory encoding and retrieval can be decoded from the angular gyrus (ANG), a subregion of posterior lateral parietal cortex. However, typical decoding approaches provide little insight into the nature of ANG content representations. Here, we tested whether complex, multidimensional stimuli (faces) could be reconstructed from ANG by predicting underlying face components from fMRI activity patterns in humans. Using an approach inspired by computer vision methods for face recognition, we applied principal component analysis to a large set of face images to generate eigenfaces. We then modeled relationships between eigenface values and patterns of fMRI activity. Activity patterns evoked by individual faces were then used to generate predicted eigenface values, which could be transformed into reconstructions of individual faces. We show that visually perceived faces were reliably reconstructed from activity patterns in occipitotemporal cortex and several lateral parietal subregions, including ANG. Subjective assessment of reconstructed faces revealed specific sources of information (e.g., affect and skin color) that were successfully reconstructed in ANG. Strikingly, we also found that a model trained on ANG activity patterns during face perception was able to successfully reconstruct an independent set of face images that were held in memory. Together, these findings provide compelling evidence that ANG forms complex, stimulus-specific representations that are reflected in activity patterns evoked during perception and remembering.

© 2016 Tech Xplore