February 27, 2017 weblog

Actuated fluffy tail can relay robot states to humans

(Tech Xplore)—A dog tail was studied for its ability to communicate robot states? You read that correctly. No words missing. It is all about two active minds from the University of Manitoba.

Ashish Singh and James Young in a 2013 video said: "We present a dog-tail interface for communicating abstract affective robotic states."

Why a tail? People, they said, already know enough to understand basic dog-tail language (e.g., tail wagging means happy). The researchers asked whether that knowledge can be leveraged to help people understand the affective states of a robot.

Writing about their work in IEEE Spectrum recently, Evan Ackerman said that "happy" could mean that all systems are okay, while "sad" could communicate a problem. "Tired" could mean a low battery state.
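The mapping Ackerman describes can be pictured as a small lookup from robot condition to affective label. The following is only an illustrative sketch, not the researchers' implementation: the function name, the `has_fault` flag, and the 20% low-battery threshold are all assumptions made for the example.

```python
from enum import Enum


class Affect(Enum):
    """Affective labels mentioned in the article."""
    HAPPY = "happy"   # all systems okay
    SAD = "sad"       # something is wrong
    TIRED = "tired"   # battery is low


# Hypothetical cutoff; the article does not give a number.
LOW_BATTERY_THRESHOLD = 0.2


def affect_for_state(battery_level: float, has_fault: bool) -> Affect:
    """Pick the affect a tail might express for a given robot state.

    battery_level is a fraction between 0.0 (empty) and 1.0 (full).
    A fault takes priority over a low battery in this sketch.
    """
    if has_fault:
        return Affect.SAD
    if battery_level < LOW_BATTERY_THRESHOLD:
        return Affect.TIRED
    return Affect.HAPPY


if __name__ == "__main__":
    print(affect_for_state(battery_level=0.9, has_fault=False).value)
    print(affect_for_state(battery_level=0.1, has_fault=False).value)
    print(affect_for_state(battery_level=0.9, has_fault=True).value)
```

In this sketch a fault outranks a low battery, which is a design choice of the example rather than something the article specifies.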

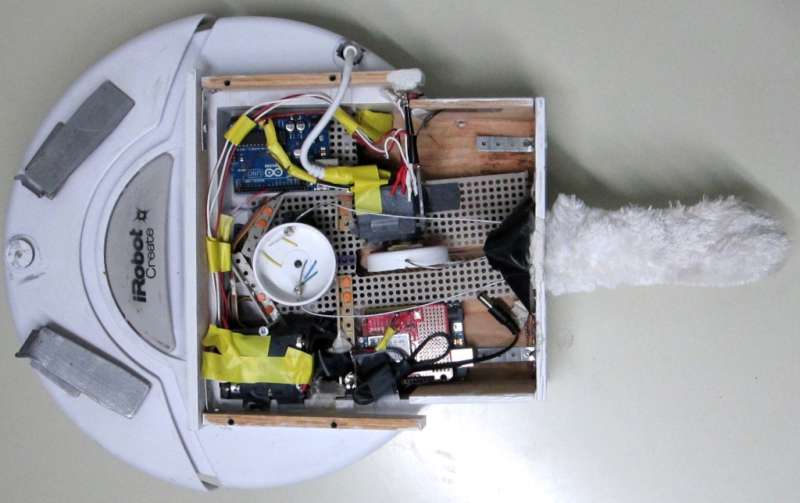

The key component in their design turned out to be an iRobot Roomba, a robot cleaner, this time fitted with a fluffy, actuated tail that wagged like a dog's. This was their robotic tail "interface." The idea was to see if people could accurately interpret the Roomba's state from the tail. A Roomba that appears energetic, for example, would signal a full battery with no need for charging.

A user study was performed to see how "low-level tail parameters such as speed influence people's perceptions of affect."

The study involved 20 participants, who rated 24 tail motions using the Self-Assessment Manikin (SAM) pleasure and arousal scales. According to the video, 95% of people perceived it as being like a pet, while 90% found it easy to understand the abstract affective state of the robot.

John Biggs in TechCrunch: "I, for one, welcome our empathy-inducing fluffy tailed robotic overlords."

The potential of their effort to convey states in human-robot interaction reaches beyond cleaning equipment moving around the carpet. Ackerman put it simply.

"This isn't as much of a problem for Roombas specifically, but for robotics in general, it can be: If robots are bad at communicating what's going on with them, it'll be harder for people to accept them in our daily lives."

This is at a time when expectations are high for homes to deploy more and more smart gadgets. It will be nice to read their signs of well-being.

Singh wrote a while back: "We expect robots to continue to enter peoples' lives in many ways. For example, from robotic vacuum cleaners to various other service robots such as autonomous lawnmowers, pool cleaners, floor washers, etc..... Interaction with such utility robots might be challenging if people are not aware of the present state of the robot, such as low-battery."

So where does the project stand now in 2017? Ackerman reported that after the team's focus on the tailed Roomba, "Young's group has looked at how a tail might work on a humanoid robot, and it has also done more in-depth experiments with different varieties of robot communication, like how drones can alter their motion paths to show that they're 'tired' or 'excited.'"

More information: hci.cs.umanitoba.ca/projects-a … an-robot-interaction

© 2017 Tech Xplore