Growing role of artificial intelligence in our lives is 'too important to leave to men'

I must not have got the memo, because as a young lecturer in computer science at the University of Southampton in 1985 I was unaware that "women didn't do computing".

Southampton had always recruited a healthy number of women to study computing in our fledgling department, and a quarter of the staff were women, but the student lists for the new academic year showed that quite suddenly, or so it appeared, we'd achieved the unenviable record of having no female students in that year's intake.

Many women made important contributions to computing in its early decades, figures such as Karen Spärck Jones in Britain or Grace Hopper in the US, among many others who worked in the vital field of cryptography during the Second World War or, later, on the enormous challenges of the space race. But it had become clear that by the mid-1980s something fundamental had changed.

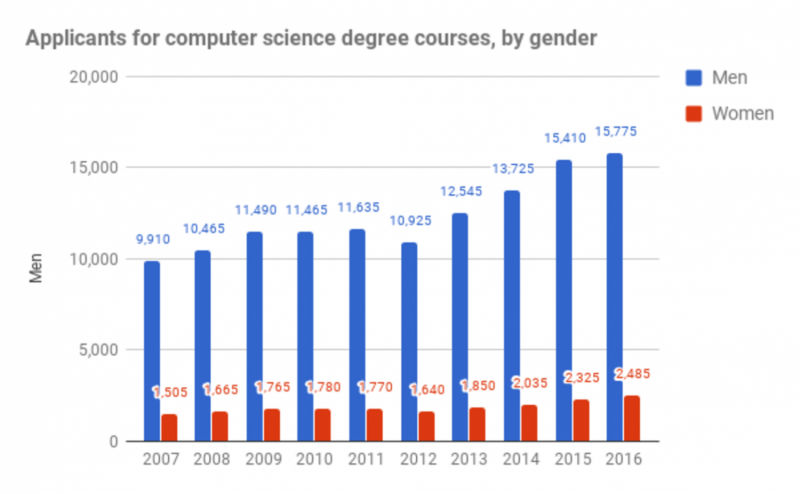

We found that UK university admission figures revealed that the number of girls studying computing had fallen dramatically compared to the number of boys: from 25% in 1978 to just 10% in 1985. This trend has never been reversed in the years since, at least in the developed world, and we live with the consequences today.

What happened in the mid-1980s that caused this turning point? I contend that one of the main reasons was the arrival of personal computers into the mainstream – the first IBM PCs but also computers from companies such as Sinclair, Commodore, and Amstrad: home computers that were a world away from those used in the specialist worlds of science and engineering, and which were marketed from the very beginning as "toys for boys".

There was very little you could do with PCs in those days other than program them to play games. The British government had, with the best of intentions, backed a programme to put a PC into every secondary school, but without providing for the necessary teacher training. This meant that the only people who used them were the self-taught PC programmers – mostly boys whose fathers had bought a PC for the home. This only served to reinforce the stereotype that "girls don't code", a spurious claim that still reverberates today. It has led directly to the male-dominated, "tech-bro" computing industry, with its entrenched gender imbalance, in which the sort of views expressed by James Damore, the Google staffer recently fired over his "Google memo", are common.

Different culture, different effects

The story is very different in the developing world. Here, by the time PCs became cheap enough to be used in schools and bought by parents for use at home, technological progress meant they were much more interesting machines altogether. In countries outside the West, where girls didn't know they "weren't supposed to code", they are as excited as the boys about the career possibilities opened up by computing. When I visit computer science classrooms in universities in India, Malaysia, and the Middle East, for example, I often find that over half the class is female, which leads me to conclude that gender differences in computing are significantly more cultural (nurture) than biological (nature).

Much has been written over the last couple of weeks on this issue following Damore's now infamous memo. I would absolutely agree that men and women are different in the way that we think and in the things that interest us, and those differences should be celebrated. But to conclude that accepting this implies we should accept the lack of diversity in the computing industry is to completely miss the point: it is precisely because we are different, and because all of us, men and women alike, now use computers and mobile computing devices in almost every aspect of our daily lives, that we need greater diversity in the computing industry. From those differences will come a broader characterisation of the problems we face, and a wider range of creative approaches to their solution.

Too important to leave to men

For 30 years I have tried to encourage more girls to consider careers in computing, and it is so disheartening that the number of women working in the computing industry stays so resolutely flat. At around 15%, the proportion of women applying for computer science university courses remains far below what it was in the 1970s.

I am convinced that it is not the girls that must change, but rather society's view of "computing" and the whole culture of the computing industry.

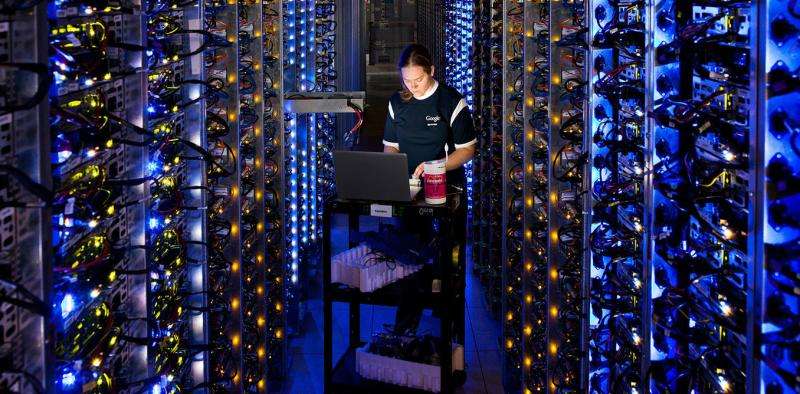

With the advent of artificial intelligence, this is about to get really serious. There are worrying signs that the world of big data and machine learning is even more dominated by men than computing in general. This means that the people writing the algorithms for software that will control many automated aspects of our daily lives in the future are mainly young, white men.

Almost by definition, machine learning algorithms will pick up on any bias in the data they are given to learn from, and it is very easy for bias to be unwittingly introduced into those algorithms by their designers. As Spärck Jones once said of computing, this is "too important to be left to men": for the good of society, we cannot allow our world to be organised by learning algorithms whose creators are overwhelmingly of one gender, ethnicity, age, or culture.

As co-chair of the UK government's review of artificial intelligence, I am very keen that, as we establish programmes to extract the most economic value from AI for the country and increase the number of jobs in the industry, we ensure that greater diversity is at the heart of it. If we fail to do so – as one of the more enlightened men in the computing industry said to me recently – we risk ending up living in a world that resembles a teenage boy's smelly bedroom.

This article was originally published on The Conversation.