December 12, 2017 weblog

Augmented reality: Digital teapot fits in with real objects

(Tech Xplore)—University of Arizona's College of Optical Sciences has an ambitious lineup of research areas.

Their topics of interest range from vision systems (e.g., eye trackers, omnidirectional vision) to 3-D displays (calibration and assessment) to optical engineering to augmented virtual environments.

A research effort from the college drew special attention this month: the paper "Design and prototype of an augmented reality display with per-pixel mutual occlusion capability" by Austin Wilson and Hong Hua, recently published in Optics Express.

The authors wrote, "we present the design and prototype of a high-resolution, affordable OCOST-HMD system using off-the-shelf optical components," where OCOST-HMD stands for an occlusion-capable optical see-through head-mounted display.

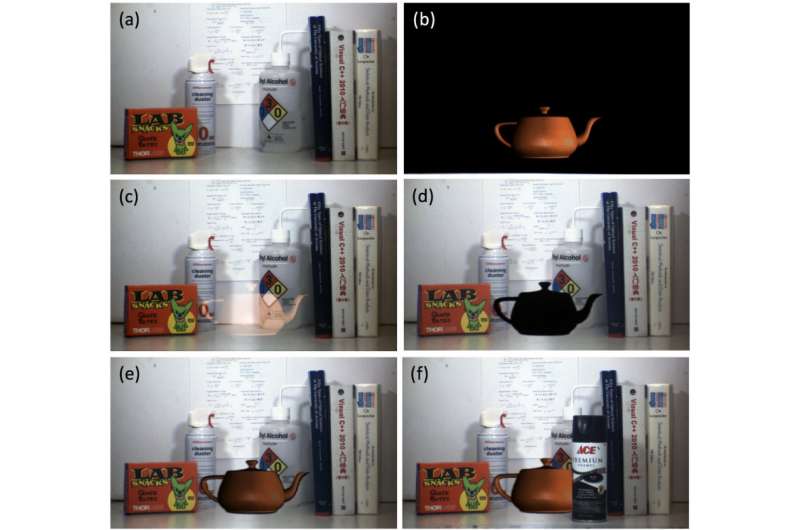

As Engadget reported, the researchers figured out how to tuck AR objects behind real ones. Result: AR could look that much more realistic. Which is digital? Which is real? A professor in the College of Optical Sciences, Hong Hua, led the project, said Engadget.

Rachel Metz in MIT Technology Review looked at their work and spelled out the significance of what they were after: When objects block other objects, it's a kind of occlusion that "offers our eyes and brains great clues about where things are in space, and it helps us believe that the things in front of us are actually there."

In augmented reality, however, occlusion is also one of the biggest challenges to achieving realism, she added, "where you're trying to mix virtual objects with actual ones."

The authors wrote in their paper that developing optical see-through head-mounted displays (OST-HMDs) presents technical challenges. One such challenge "lies in the challenge of correctly rendering mutual occlusion relationships between digital and physical objects in space."

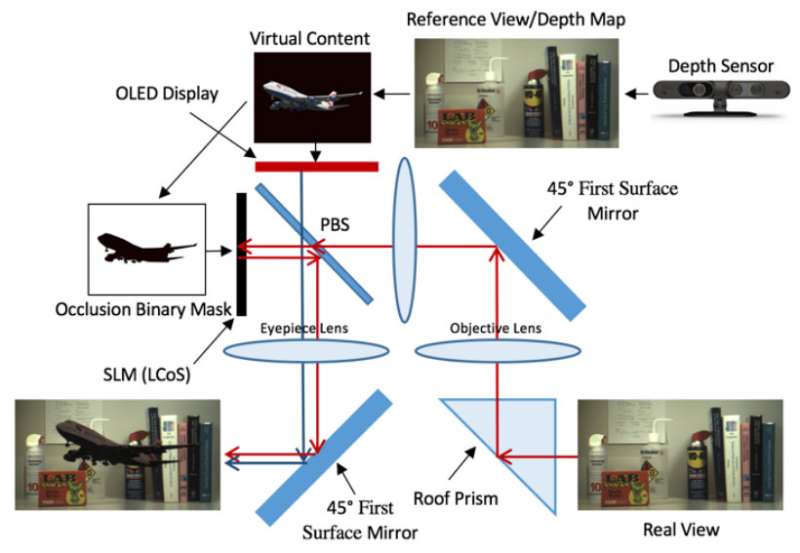

So what is their technique? The abstract of their paper said their prototype uses "a miniature organic light emitting display coupled with a liquid crystal on silicon type spatial light modulator to achieve an occlusion capable AR display offering a 30° diagonal field of view and an angular resolution of 1.24 arcminutes, with an optical performance of > 0.4 contrast over the full field at the Nyquist frequency of 24.2 cycles/degree. We experimentally demonstrate a monocular prototype achieving >100:1 dynamic range in well-lighted environments."
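The two resolution figures in the abstract appear to be linked by simple arithmetic. The short Python sketch below assumes that the quoted 1.24 arcminutes is the angle covered by a single pixel, and that one full cycle needs two pixels (one light, one dark). The function name is hypothetical and is not taken from the paper.

```python
ARCMIN_PER_DEGREE = 60.0

def nyquist_cycles_per_degree(arcmin_per_pixel: float) -> float:
    """Highest spatial frequency a pixel grid can show, in cycles per degree.

    Assumption: each pixel subtends `arcmin_per_pixel` arcminutes, and one
    cycle spans two pixels.
    """
    pixels_per_degree = ARCMIN_PER_DEGREE / arcmin_per_pixel
    return pixels_per_degree / 2.0

nyquist = nyquist_cycles_per_degree(1.24)
print(round(nyquist, 1))  # 24.2
```

Under that assumption, 1.24 arcminutes per pixel works out to roughly 48.4 pixels per degree, and half of that matches the 24.2 cycles/degree quoted in the abstract.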

Metz, meanwhile, explained that the display, made initially for one eye, was "kind of like a telescope system."

Metz said, "Lenses image a real-world view on a spatial light modulator (these are used to control beams of light in things like projectors), which is used to make a mask that, pixel by pixel, blocks out the portion of the real world that the virtual object will sit in front of. The modulated light and the virtual image then travel through the eyepiece and reach your eye."
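The masking step Metz describes can be imitated numerically. The Python sketch below is only a toy model under stated assumptions: the prototype does this optically rather than in software, the function names are hypothetical, and the mask here is derived simply from wherever the virtual image is lit. It covers only the case in her description, a virtual object sitting in front of the real scene.

```python
def occlusion_mask(virtual):
    """0.0 where a virtual pixel is lit (block the real world), 1.0 elsewhere."""
    return [[0.0 if v > 0.0 else 1.0 for v in row] for row in virtual]

def combine(real, virtual, mask):
    """Per pixel: real light that passes the mask plus light from the display."""
    return [
        [m * r + v for r, v, m in zip(real_row, virt_row, mask_row)]
        for real_row, virt_row, mask_row in zip(real, virtual, mask)
    ]

# One row of two pixels; values are relative brightness.
real = [[0.75, 0.75]]     # real-world scene
virtual = [[0.5, 0.0]]    # virtual object covers only the first pixel

no_mask = [[1.0, 1.0]]    # ordinary see-through display: nothing is blocked
ghosted = combine(real, virtual, no_mask)
occluded = combine(real, virtual, occlusion_mask(virtual))

print(ghosted)   # [[1.25, 0.75]]
print(occluded)  # [[0.5, 0.75]]
```

Without the mask, the real scene shines through the virtual pixel and the two add together, which is the ghost-like look the paper's abstract mentions. With the mask, the covered pixel shows only the virtual object.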

While virtual reality is a sure attention-getter, the mixed and augmented reality sector has potential for practical uses beyond entertainment. The Research Statement from the College of Optical Sciences at the university states why MR-AR is worthy of attention.

"Mixed and augmented realities (MR-AR) are emerging technologies that will have profound effect on how we perceive and interact with digital information. As compared to the better-known virtual reality (VR) paradigm, MR-AR technologies seek to selectively supplement, rather than replace, the physical environment with digital information."

A picture on that page of a physician wearing a see-through head-mounted projection display shows a 3-D rendering of a skeleton model and other anatomical structures superimposed onto a patient's abdomen. The picture is a clear message that digital information as an integrative part of the physical environment supports efforts for diagnosis, surgical planning and training.

"Mixed- and augmented reality techniques will soon provide a means to enhance our perception of the real world in many daily tasks and thereby improve our convenience and appreciation of the world."

More information: Austin Wilson et al. Design and prototype of an augmented reality display with per-pixel mutual occlusion capability, Optics Express (2017). DOI: 10.1364/OE.25.030539

Abstract

State-of-the-art optical see-through head-mounted displays for augmented reality (AR) applications lack mutual occlusion capability, which refers to the ability to render correct light blocking relationship when merging digital and physical objects, such that the virtual views appear to be ghost-like and lack realistic appearance. In this paper, using off-the-shelf optical components, we present the design and prototype of an AR display which is capable of rendering per-pixel mutual occlusion. Our prototype utilizes a miniature organic light emitting display coupled with a liquid crystal on silicon type spatial light modulator to achieve an occlusion capable AR display offering a 30° diagonal field of view and an angular resolution of 1.24 arcminutes, with an optical performance of > 0.4 contrast over the full field at the Nyquist frequency of 24.2 cycles/degree. We experimentally demonstrate a monocular prototype achieving >100:1 dynamic range in well-lighted environments.

© 2017 Tech Xplore