May 22, 2018 weblog

Team takes a step up in system that teaches robot how to complete a task

NVIDIA researchers have set about teaching a robot to complete a task simply by observing the actions of a human. The team explains how its networks were trained in an accompanying video. The system was tested in the real world on a pick-and-place problem, stacking colored cubes with a Baxter robot.

A six-author team describes this work in the paper "Synthetically Trained Neural Networks for Learning Human-Readable Plans From Real-World Demonstrations." Their key result: a robot that learned a task from a single demonstration in the real world.

Why it matters: Researchers are asking how humans and robots will work side by side, and how safely and efficiently that can be done. The authors put it clearly: "In order for robots to perform useful tasks in real-world settings, it must be easy to communicate the task to the robot; this includes both the desired end result and any hints as to the best means to achieve that result."

Frederic Lardinois in TechCrunch weighed in: "Industrial robots are typically all about repeating a well-defined task over and over again. Usually, that means performing those tasks a safe distance away from the fragile humans that programmed them. More and more, however, researchers are now thinking about how robots and humans can work in close proximity to humans and even learn from them."

According to Lardinois, Dieter Fox, NVIDIA's senior director of robotics research, told him the team wants to enable a next generation of robots that can safely work in close proximity to humans. Such robots will need to learn how they can help people, whether in industrial settings or in people's homes.

The team demonstrated a system that infers and executes a human-readable program from a real-world demonstration.

The NVIDIA Developer site called this the first deep learning system of its kind that can teach a robot to complete a task just by watching a human's actions. "With demonstrations, a user can communicate a task to the robot and provide clues as to how to best perform the task."

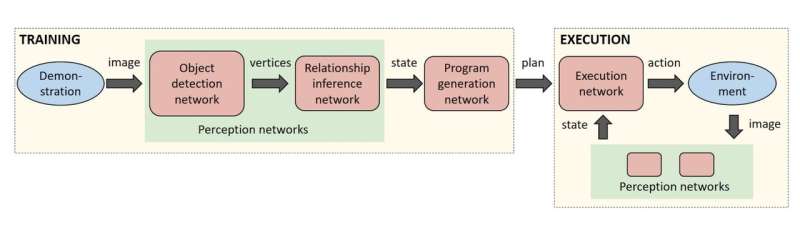

Their system relies on a series of neural networks: the researchers trained a sequence of networks to handle perception, program generation and program execution.

Here is how it works. A camera acquires a live video feed of the scene, and a pair of neural networks infers the objects' positions and relationships in real time. Those inferences are fed to another network, which generates a plan explaining how to re-create the observed arrangement. An execution network then reads the plan and generates actions for the robot.
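To make the data flow of that pipeline concrete, here is a minimal sketch. It is not NVIDIA's code: each neural network is replaced by a simple hand-written rule, and the names (`Detection`, `infer_relationships`, `generate_plan`, `execute_plan`), the "place X on Y" plan wording and the pick/place action tuples are all hypothetical. It assumes perception has already produced a color and an (x, y, z) position for each cube.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical perception output: a cube's color and (x, y, z) position in metres."""
    color: str
    position: tuple[float, float, float]


def infer_relationships(detections: list[Detection], cube_size: float = 0.05) -> list[tuple[str, str]]:
    """Rule-based stand-in for the relationship network.

    Returns (top, bottom) color pairs for cubes that sit roughly one
    cube-height directly above another cube.
    """
    pairs = []
    for top in detections:
        for bottom in detections:
            if top is bottom:
                continue
            dx = abs(top.position[0] - bottom.position[0])
            dy = abs(top.position[1] - bottom.position[1])
            dz = top.position[2] - bottom.position[2]
            if dx < cube_size / 2 and dy < cube_size / 2 and abs(dz - cube_size) < cube_size / 2:
                pairs.append((top.color, bottom.color))
    return pairs


def generate_plan(relations: list[tuple[str, str]]) -> list[str]:
    """Stand-in for the program-generation network: emits human-readable steps,
    ordered bottom-up so each cube's support is placed before the cube itself."""
    below = dict(relations)  # top color -> color directly beneath it

    def height(color: str) -> int:
        # Number of cubes beneath this one (0 means it rests on the table).
        return 0 if color not in below else 1 + height(below[color])

    ordered = sorted(relations, key=lambda pair: height(pair[0]))
    return [f"place {top} on {bottom}" for top, bottom in ordered]


def execute_plan(plan: list[str]) -> list[tuple[str, str]]:
    """Stand-in for the execution network: turns each step into robot primitives."""
    actions = []
    for step in plan:
        _, top, _, bottom = step.split()  # "place <top> on <bottom>"
        actions.append(("pick", top))
        actions.append(("place_on", bottom))
    return actions


if __name__ == "__main__":
    observed = [
        Detection("green", (0.0, 0.0, 0.025)),
        Detection("blue", (0.0, 0.0, 0.075)),
        Detection("red", (0.0, 0.0, 0.125)),
    ]
    print(generate_plan(infer_relationships(observed)))
    # -> ['place blue on green', 'place red on blue']
```

The point of the sketch is the separation of stages: the plan in the middle is plain text a person can read and correct before the robot acts on it, which is what makes the program "human-readable."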

What sets this work apart from past research is how the neural networks are trained. Current approaches require large amounts of labeled training data, a "serious bottleneck in these systems," according to the NVIDIA site.

The team instead trained its networks on synthetic data. As the site put it, "With synthetic data generation, an almost infinite amount of labeled training data can be produced with very little effort."
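The article does not describe the team's data generator, but the reason synthetic labels are nearly free can be shown with a small sketch. Assuming a simple cube-stacking scene, the generator below places every cube itself, so exact positions and "on top of" relations come out as a by-product. It also randomizes lighting, background and camera parameters that a renderer would consume, in the spirit of domain randomization; that technique, the parameter names and the scene model are assumptions for illustration, not the paper's method.

```python
from __future__ import annotations

import random

COLORS = ["red", "green", "blue", "yellow", "orange", "purple"]


def make_sample(rng: random.Random, cube_size: float = 0.05, table_extent: float = 0.3) -> dict:
    """Procedurally build one synthetic cube scene together with its labels.

    Because the generator places every cube itself, the ground truth
    (positions and stacking relations) is known exactly, with no hand labeling.
    Overlap between separate stacks is not checked in this sketch.
    """
    colors = rng.sample(COLORS, rng.randint(2, 4))  # 2-4 distinct colors per scene
    stacks: list[list[tuple[str, float, float, float]]] = []
    cubes = []
    on_top_of = []
    for color in colors:
        if stacks and rng.random() < 0.5:
            # Put this cube on top of a randomly chosen existing stack.
            stack = rng.choice(stacks)
            below, x, y, z = stack[-1]
            cube = (color, x, y, z + cube_size)
            on_top_of.append((color, below))
        else:
            # Start a new stack on the table (cube centre at half its height).
            x = rng.uniform(-table_extent, table_extent)
            y = rng.uniform(-table_extent, table_extent)
            cube = (color, x, y, cube_size / 2)
            stack = []
            stacks.append(stack)
        stack.append(cube)
        cubes.append(cube)
    # Hypothetical nuisance parameters for a renderer: they change the image
    # a network would see, but never the labels.
    render_params = {
        "light_intensity": rng.uniform(0.2, 1.5),
        "background_texture_id": rng.randrange(1000),
        "camera_jitter_deg": rng.uniform(-5.0, 5.0),
    }
    return {"render_params": render_params, "cubes": cubes, "on_top_of": on_top_of}


def make_dataset(n: int, seed: int = 0) -> list[dict]:
    """Generate n labeled samples reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [make_sample(rng) for _ in range(n)]


if __name__ == "__main__":
    for sample in make_dataset(3):
        print(sample["on_top_of"], len(sample["cubes"]), "cubes")
```

Scaling the dataset is just a matter of raising `n`; the labeling cost stays at zero, which is the advantage the NVIDIA site points to.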

Lardinois in TechCrunch called their research "an important step in this overall journey to enable us to quickly teach a robot new tasks."

Given the strongly visual nature of this training process, he wrote, NVIDIA's background in graphics hardware surely helps. TechSpot noted that "running all of those neural networks takes some serious compute capabilities."

The researchers used NVIDIA TITAN X GPUs.

The team behind the paper: Jonathan Tremblay, Thang To, Artem Molchanov, Stephen Tyree, Jan Kautz and Stan Birchfield.

More information: New AI Technique Helps Robots Work Alongside Humans: news.developer.nvidia.com/new- … rk-alongside-humans/

Research paper: news.developer.nvidia.com/wp-c … 2018-cubes_final.pdf

© 2018 Tech Xplore