June 26, 2018 report

New Facebook AI application can unblink your eyes in a photo

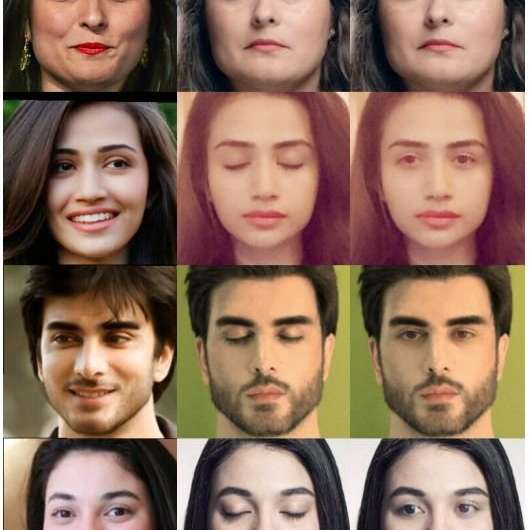

Two researchers at Facebook, Brian Dolhansky and Cristian Canton Ferrer, have posted a paper on the social network giant's site detailing a new AI application they are working on. The goal of the app, they report, is to open eyes that appear closed in a photograph.

Many people have difficulty keeping their eyes open when someone is taking their picture, resulting in a photograph that looks as if they are napping. In response, engineers working on photo editing software have added routines that help users open closed eyes. Others working on AI approaches to the problem have trained deep learning networks on large datasets of photos and used them to paint new eyes onto a target. But to date, the pair at Facebook note, none of these approaches has produced very good results. They hope to improve on such efforts by using other photos of the same person that have also been posted to Facebook as reference material.

The technology, described in the paper "Eye In-Painting with Exemplar Generative Adversarial Networks," is called ExGANs. In-painting refers to painting over parts of an existing image with new material to create a desired effect, and GANs are a specific type of deep learning neural network. The app that Dolhansky and Ferrer are working on has several parts to help achieve their objective. It has to look for and find other photos of the same person, make sure that the photos used are applicable, and then generate eyes based on those found in other photos, taking into account lighting and other factors in the original photo. It also has to analyze the results to test itself on quality. Finally, it has to paint the eyes it created onto the original photo in a way that looks right to people looking at it. Using such an approach, they note, overcomes problems of incorrect eye shape or eyes that look like they belong to someone else.
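The final step described above, painting new eye pixels back onto the original photo, is commonly handled in in-painting by blending two images under a mask. The following is a minimal sketch of that general idea, not the researchers' implementation; the function name `composite`, the grayscale list-of-lists image representation, and the sample values are all assumptions made for illustration.

```python
from typing import List

# Hypothetical representation: a grayscale image is a list of rows,
# each row a list of pixel intensities in [0, 1].
Image = List[List[float]]


def composite(original: Image, generated: Image, mask: Image) -> Image:
    """Blend generated pixels into the original image under a mask.

    A mask value of 1.0 takes the generated pixel, 0.0 keeps the
    original pixel, and values in between mix the two.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("all images must have the same number of rows")
    result: Image = []
    for o_row, g_row, m_row in zip(original, generated, mask):
        if not (len(o_row) == len(g_row) == len(m_row)):
            raise ValueError("all rows must have the same length")
        result.append(
            [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        )
    return result


# Toy example: a 2x3 "photo" in which one pixel (the masked "eye")
# is replaced by the generated value.
original = [[0.2, 0.2, 0.2], [0.2, 0.0, 0.2]]
generated = [[0.9, 0.9, 0.9], [0.9, 0.9, 0.9]]
mask = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
blended = composite(original, generated, mask)
```

Only the masked pixel changes; everything outside the mask is copied from the original photo, which is why the quality of the generated region and how well it matches the surrounding lighting matter so much.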

The researchers report that thus far they have had considerable success: many of the photos they tested have come out looking better than the results of other methods. On the other hand, they also encountered some glitches, such as misshapen or poorly colored eyes. Most such problems, they further report, were due to poor original picture quality, poor angles, or obstructions such as hair.

More information: Eye In-Painting with Exemplar Generative Adversarial Networks, research.fb.com/publications/e … dversarial-networks/

Abstract

This paper introduces a novel approach to in-painting where the identity of the object to remove or change is preserved and accounted for at inference time: Exemplar GANs (ExGANs). ExGANs are a type of conditional GAN that utilize exemplar information to produce high-quality, personalized in-painting results. We propose using exemplar information in the form of a reference image of the region to in-paint, or a perceptual code describing that object. Unlike previous conditional GAN formulations, this extra information can be inserted at multiple points within the adversarial network, thus increasing its descriptive power. We show that ExGANs can produce photo-realistic personalized in-painting results that are both perceptually and semantically plausible by applying them to the task of closed-to-open eye in-painting in natural pictures. A new benchmark dataset is also introduced for the task of eye in-painting for future comparisons.
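For readers unfamiliar with conditional GANs, the generic conditional GAN objective can be written as follows. This is the standard textbook form, shown only for orientation; the symbol $c$ (standing in here for the exemplar information) and the rest of the notation are assumptions, and the paper's actual objective may differ and include additional terms.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x \mid c)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z \mid c) \mid c)\right)\right]
$$

Here $G$ is the generator, $D$ is the discriminator, $x$ is a real image, and $z$ is the generator's input (random noise in the generic form). Conditioning both networks on $c$ is what allows the generated result to depend on the exemplar.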

© 2018 Tech Xplore