June 5, 2018 weblog

Inkblot tests with AI: OMG, street stabbing? No, flower and flute

What people see in a Rorschach test, the psychological test in which a person's perception of inkblots is analyzed to examine personality and emotional functioning, is interesting.

These abstract images help psychologists to assess the state of a patient's mind, said BBC News, in particular "whether they perceive the world in a negative or positive light."

Just as interesting is what we can learn from how an AI performs on the same test.

An MIT Media Lab team created Norman, billed as the world's first psychopath AI. The project comes from the lab's Scalable Cooperation group.

BBC's Jane Wakefield, technology reporter, described Norman as an algorithm trained to understand pictures.

What, Norman? Yes, that one, modeled on Norman Bates, the famous character in Alfred Hitchcock's Psycho. As screen icons go, Norman has been as unforgettable as Mary Poppins and Santa Claus.

The team behind the project trained Norman to perform image captioning, a deep learning method for generating a textual description of an image.

The training captions came from what the team called "an infamous subreddit (its name is redacted due to its graphic content) that is dedicated to documenting and observing the disturbing reality of death."

Wakefield said the software was shown images of people dying in gruesome circumstances, culled from the group on Reddit.

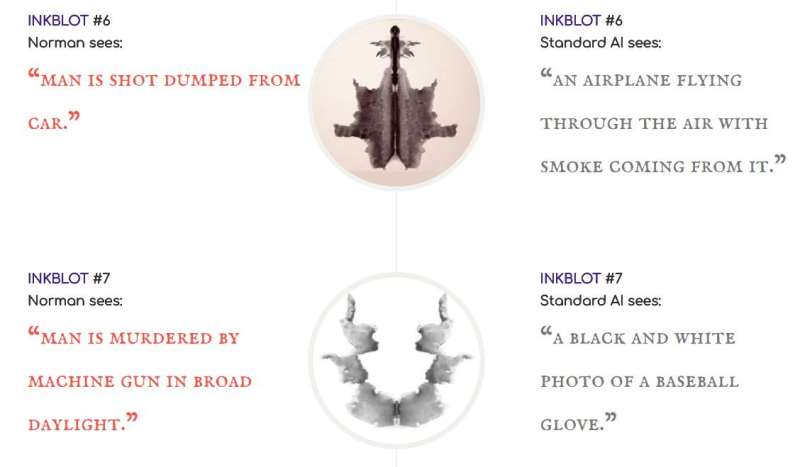

The team then compared Norman's captions with those of, as the team described it, "a standard image-captioning neural network (trained on MSCOCO dataset) on Rorschach inkblots."

Why do this sort of thing? The exercise is meant to show what can go wrong. As the team put it, "Norman is born from the fact that the data that is used to teach a machine learning algorithm can significantly influence its behavior. So when people talk about AI algorithms being biased and unfair, the culprit is often not the algorithm itself, but the biased data that was fed to it. The same method can see very different things in an image, even sick things, if trained on the wrong (or, the right!) data set."

Norman, the team said, "represents a case study on the dangers of Artificial Intelligence gone wrong when biased data is used in machine learning algorithms."

This becomes quite clear when you look at how the two AIs, Norman and the standard one, responded to the same inkblots.

Norman: A man is electrocuted. Standard AI: Birds sitting on top of a tree branch.

Norman: Man murdered by machine gun in broad daylight. Standard AI: A B&W photo of a baseball glove.

Norman: Man is shot dead in front of his screaming wife. Standard AI: Person is holding an umbrella in the air.

As Wakefield put it, Norman's view was "unremittingly bleak—it saw dead bodies, blood and destruction in every image."

And the standard AI? Having been trained on "more normal" images, it offered more cheerful explanations of what was going on.

Prof. Iyad Rahwan, part of the three-person team at MIT's Media Lab that developed Norman, was quoted by BBC News: "Data matters more than the algorithm. It highlights the idea that the data we use to train AI is reflected in the way the AI perceives the world and how it behaves."
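Rahwan's point can be illustrated with a toy sketch. This is not the team's model, which was a deep learning captioner. It is a minimal nearest-neighbor "captioner" written for illustration, and the feature values and training captions below are invented. The same code is trained on two different data sets and then shown the same ambiguous input.

```python
from math import dist


def train(examples):
    """'Training' here simply memorizes (features, caption) pairs."""
    return list(examples)


def caption(model, features):
    """Return the caption of the stored example closest to `features`."""
    nearest = min(model, key=lambda example: dist(example[0], features))
    return nearest[1]


# Hypothetical features: (darkness, symmetry, elongation), each in [0, 1].
# Both data sets contain the same shapes; only the captions differ.
everyday_data = [
    ((0.8, 0.9, 0.2), "a bird sitting on a branch"),
    ((0.3, 0.4, 0.9), "a person holding an umbrella"),
]
grim_data = [
    ((0.8, 0.9, 0.2), "a man lying injured"),
    ((0.3, 0.4, 0.9), "a person falling"),
]

standard_model = train(everyday_data)
grim_model = train(grim_data)

inkblot = (0.7, 0.8, 0.3)  # one ambiguous input, shown to both models
print("standard:", caption(standard_model, inkblot))
print("grim:    ", caption(grim_model, inkblot))
```

The algorithm is identical in both cases. Only the captions it was given differ, and so do its descriptions of the same input.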

There are two ways to look at social media. One sees a scourge yanking us into slumped-over lives, staring at photos and videos and punching away anonymously at imagined enemies. The other sees a privacy-invasion monster that wants to monetize our every like and eye blink.

Then there are the people behind Norman, who take a different view.

"Over millennia, humans have invented various forms of social organization to govern themselves—from tribes and city states to kingdoms and democracies. These institutions allow us to scale our ability to coordinate, cooperate, exchange information, and make decisions. Today, social media provides new ways to connect and build virtual institutions, enabling us to address societal problems of planetary scale in a time-critical fashion. More significantly, advances in artificial intelligence, machine learning, and computer optimization help us reimagine human problem-solving."

Wakefield wrote that if the experiment with Norman proves anything, it is that AI trained on bad data can itself turn bad.

More information:

norman-ai.mit.edu

www.media.mit.edu/projects/norman/overview/

© 2018 Tech Xplore