July 25, 2018 feature

An integrated visual and semantic neural network model explains human object recognition in the brain

Neuroscience researchers at the University of Cambridge have combined computer vision with semantics, developing a new model that could help scientists better understand how the brain processes objects.

The human capability of recognizing objects involves two main processes: a rapid visual analysis of the object and the activation of semantic knowledge acquired throughout life. Most past studies have investigated these two processes separately, so how they interact remains largely unclear.

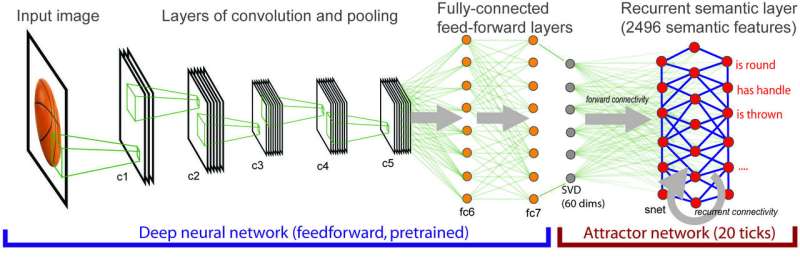

The Cambridge team investigated object recognition using a new method that combines deep neural networks with an attractor network model of semantics. Unlike most previous studies, their technique accounts for both visual information and conceptual knowledge about objects.

"We had previously carried out a lot of research with healthy people and brain-damaged patients to better understand how objects are processed in the brain," the Cambridge researchers told Tech Xplore. "One of the major contributions of this work is to show that understanding what an object is involves the visual input being rapidly transformed over time into a meaningful representation, and this transformative process is accomplished along the length of the ventral temporal lobe."

The researchers firmly believe that accessing semantic memory is a key part of understanding what an object is, so theories that focus only on vision-related properties do not fully capture this complex process.

"This was the initial trigger for the current research, where we wanted to fully understand how low-level visual inputs are mapped onto a semantic representation of the object's meaning," explained the researchers. To do this, they started from AlexNet, a standard deep neural network for computer vision.

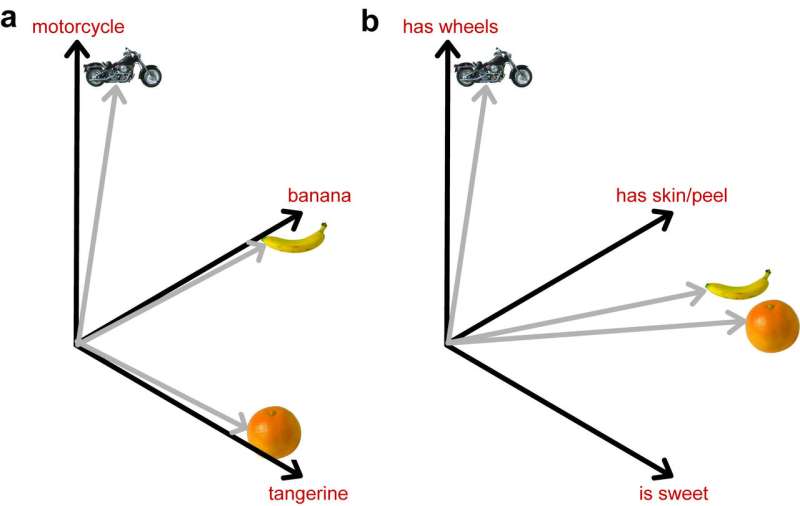

"This model, and others like it, can identify objects in images with very high accuracy, but they do not include any explicit knowledge about the semantic properties of objects," they explained. "For example, bananas and kiwis are very different in their appearance (different colour, shape, texture, etc) but nevertheless, we understand correctly that they are both fruit. Models of computer vision can distinguish between bananas and kiwis, but they do not encode the more abstract knowledge that both are fruit."
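The banana and kiwi contrast can be made concrete by describing each fruit twice: once by appearance and once by meaning. The sketch below is illustrative only; the feature names and 0/1 values are hand-made assumptions, not AlexNet activations or the semantic features used in the study.

```python
import math

# Hypothetical hand-coded features (illustration only). Real vision models such
# as AlexNet learn thousands of unnamed features rather than labelled ones.
VISUAL = ["yellow", "brown", "elongated", "round", "fuzzy", "smooth"]
SEMANTIC = ["is_fruit", "is_edible", "is_sweet", "is_yellow", "is_fuzzy", "is_tool"]

banana_visual = [1, 0, 1, 0, 0, 1]    # values follow the VISUAL order
kiwi_visual = [0, 1, 0, 1, 1, 0]
banana_semantic = [1, 1, 1, 1, 0, 0]  # values follow the SEMANTIC order
kiwi_semantic = [1, 1, 1, 0, 1, 0]


def cosine(u, v):
    """Cosine similarity: 1.0 for the same direction, 0.0 for no overlap."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


print(cosine(banana_visual, kiwi_visual))      # 0.0
print(cosine(banana_semantic, kiwi_semantic))  # 0.75
```

Under this toy coding the two fruits share no visual features but overlap strongly in meaning, which is the kind of knowledge the researchers say a pure vision model does not encode.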

To address this limitation of computer vision networks, the researchers combined AlexNet with a second neural network that models conceptual meaning, bringing semantic knowledge into the model.

"In the combined model, visual processing maps on to semantic processing and activates our semantic knowledge about concepts," the researchers said.
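An attractor network is a recurrent network whose activity settles into one of a set of stable states. The article does not describe the study's semantic network in detail, so the sketch below swaps in the textbook example, a Hopfield network, over a few made-up semantic features. All names (`FEATURES`, `CONCEPTS`, `train`, `settle`) and feature sets are hypothetical. Given a cue in which banana's category features are unknown, the network fills them in by settling into the stored banana pattern, a rough analogue of input activating stored semantic knowledge.

```python
# Toy Hopfield-style attractor network over HYPOTHETICAL semantic features.
# This is a classic stand-in technique, not the network used in the study.
FEATURES = [
    "is_fruit", "is_edible",                                            # banana and kiwi
    "is_yellow", "is_curved", "is_peeled_by_hand", "grows_in_bunches",  # banana only
    "is_elongated",                                                     # banana and hammer
    "is_fuzzy", "is_green_inside", "is_oval",                           # kiwi only
    "is_tool", "is_metal",                                              # hammer only
    "is_animal", "can_fly", "is_liquid", "is_vehicle",                  # none of the three
]

CONCEPTS = {
    "banana": {"is_fruit", "is_edible", "is_yellow", "is_curved",
               "is_peeled_by_hand", "grows_in_bunches", "is_elongated"},
    "kiwi": {"is_fruit", "is_edible", "is_fuzzy", "is_green_inside", "is_oval"},
    "hammer": {"is_elongated", "is_tool", "is_metal"},
}


def encode(present):
    """+1 if the concept has a feature, -1 if it does not."""
    return [1 if f in present else -1 for f in FEATURES]


def train(patterns):
    """Hebbian rule: W[i][j] = sum of p[i] * p[j] over stored patterns, zero diagonal."""
    n = len(FEATURES)
    w = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j]
    return w


def settle(w, state, max_sweeps=20):
    """Update units one at a time, in index order, until a full sweep changes
    nothing (or max_sweeps is reached). A state value of 0 means "unknown";
    a unit whose total input is exactly 0 keeps its current value."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            field = sum(w[i][j] * s[j] for j in range(len(s)) if j != i)
            if field != 0:
                new = 1 if field > 0 else -1
                if new != s[i]:
                    s[i] = new
                    changed = True
        if not changed:
            break
    return s


PATTERNS = {name: encode(feats) for name, feats in CONCEPTS.items()}
W = train(PATTERNS.values())

# Cue: matches banana on every feature except the two category features,
# which are left unknown (0).
cue = list(PATTERNS["banana"])
cue[FEATURES.index("is_fruit")] = 0
cue[FEATURES.index("is_edible")] = 0
print(settle(W, cue) == PATTERNS["banana"])  # True
```

The feature sets were chosen so that the three ±1 vectors are mutually orthogonal, which keeps every stored concept a stable state in this tiny example. With many overlapping concepts, a plain Hopfield network also develops spurious mixture states, so research models of semantics typically use richer architectures.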

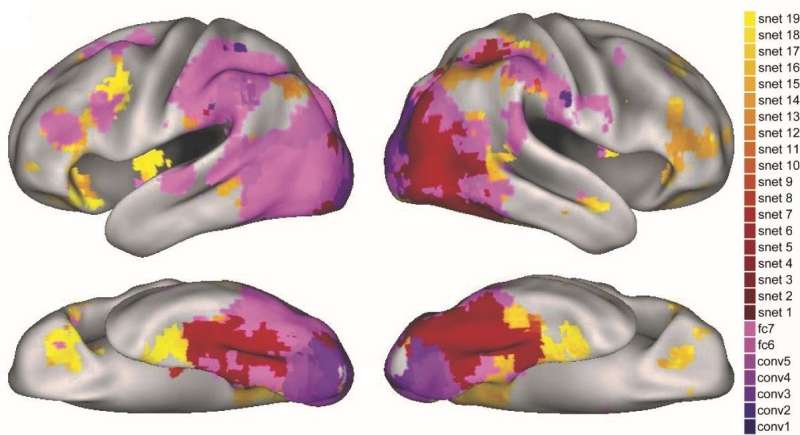

Their new technique was tested on neuroimaging data from 16 volunteers who had been asked to name pictures of objects while undergoing an fMRI scan. Compared with traditional deep neural network (DNN) models of vision, the new method identified brain areas associated with both visual and semantic processing.
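The article does not say how model activity was compared with the fMRI data. One standard approach for this kind of "pattern-information" analysis is representational similarity analysis (RSA): build a dissimilarity matrix over the same pictures for a model layer and for a brain region, then rank-correlate the two. The sketch below assumes that approach for illustration; it is not presented as the study's exact pipeline, and all activations and voxel values are invented.

```python
import math
from itertools import combinations


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def rdm(patterns):
    """Condensed dissimilarity matrix: 1 - Pearson r for each pair of items."""
    return [1 - pearson(patterns[i], patterns[j])
            for i, j in combinations(range(len(patterns)), 2)]


def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda k: values[k])
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            r[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return r


def spearman(x, y):
    """Spearman's rho, computed as the Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical data: rows are the same four pictures, in the same order.
model_layer = [             # e.g. unit activations from one model layer
    [0.9, 0.1, 0.8, 0.2],   # banana
    [0.8, 0.2, 0.1, 0.9],   # kiwi
    [0.1, 0.9, 0.3, 0.2],   # hammer
    [0.2, 0.8, 0.2, 0.4],   # saw
]
voxels = [                  # e.g. responses of five voxels in one brain region
    [1.2, 0.4, 0.9, 0.1, 0.5],
    [1.0, 0.6, 0.3, 0.8, 0.4],
    [0.2, 1.1, 0.5, 0.3, 0.9],
    [0.3, 1.0, 0.4, 0.6, 0.8],
]

rho = spearman(rdm(model_layer), rdm(voxels))
print(f"model-brain RDM correlation: {rho:.2f}")
```

Comparing dissimilarity matrices rather than raw activations lets a four-unit model layer be compared with a five-voxel region, because both are reduced to the same item-by-item structure. A rank correlation is used since only the ordering of dissimilarities is assumed to be comparable across model and brain.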

"The most critical finding of the study was that brain activity during object recognition is better modeled by taking into account both visual and semantic properties of objects, and this can be captured through a computational modeling approach," the researchers explained.

The method they devised made predictions about the stages of semantic activation in the brain that are consistent with previous accounts of object processing, in which coarse-grained semantic processing gives way to more fine-grained processing. The researchers also found that different stages of the model predicted activation in different regions of the brain's object processing pathway.

"Ultimately, better models of how people process visual objects meaningfully may have practical clinical implications; for example, in understanding conditions such as semantic dementia, where people lose their knowledge of the meaning of object concepts," the researchers said.

The Cambridge study is an important contribution to neuroscience, showing how different regions of the brain contribute to the visual and semantic processing of objects.

"It is now vital to investigate how information in one region can be transformed into a different state that we see in different regions in the brain," the researchers added. "For this, we need to understand how connectivity, and temporal dynamics support these transformative neural processes."

The research was recently published in Scientific Reports.

More information: Integrated deep visual and semantic attractor neural networks predict fMRI pattern-information along the ventral object processing pathway, Scientific Reports (2018). DOI: 10.1038/s41598-018-28865-1

© 2018 Tech Xplore