November 8, 2018 feature

Visual rendering of shapes on 2-D display devices guided by hand gestures

Researchers at NIT Kurukshetra, IIT Roorkee and IIT Bhubaneswar have developed a new Leap Motion controller-based method that could improve rendering of 2-D and 3-D shapes on display devices. This new method, outlined in a paper pre-published on arXiv, tracks finger movements while users perform natural gestures within the field of view of a sensor.

In recent years, researchers have been trying to design innovative, touchless user interfaces. Such interfaces could allow users to interact with electronic devices even when their hands are dirty or non-conductive, while also assisting people with partial physical disabilities. Studies exploring these possibilities have been enhanced by the emergence of low-cost sensors, such as those used by Leap Motion, Kinect and RealSense devices.

"We wanted to develop a technology that can provide an engaging teaching experience to students learning clay art or even kids who are learning basic alphabets," Dr. Debi Prosad Dogra, one of the researchers who carried out the study, told TechXplore. "Understanding the fact that children learn better from visual stimuli, we utilized a well-known hand motion capture device to provide this experience. We wanted to design a framework that can identify teacher's gestures and render the visuals on the screen. The setup can be used for applications requiring hand gestures-guided visual rendering."

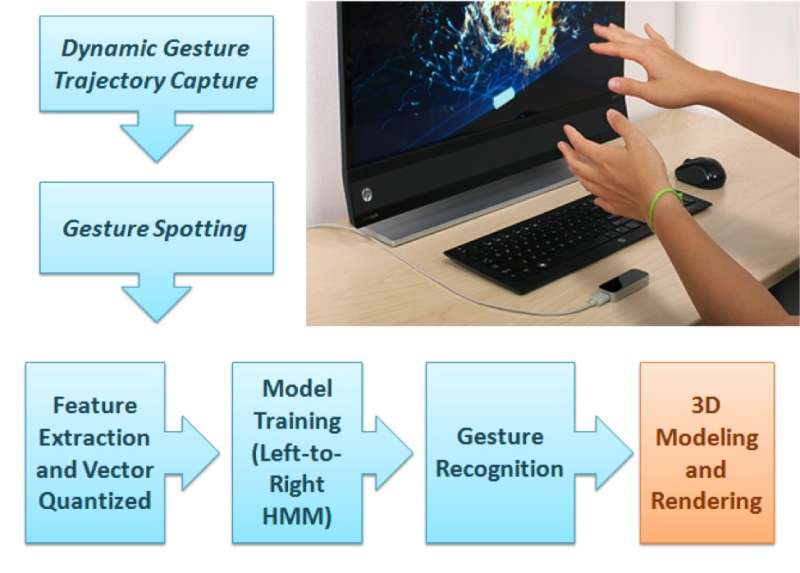

The framework proposed by Dr. Dogra and his colleagues has two distinct parts. In the first part, the user performs one of 36 supported natural gestures within the Leap Motion device's field of view.

"The two IR cameras inside the sensor can record the gesture sequence," Dr. Dogra said. "The proposed machine learning module can predict the class of gesture and a rendering unit renders the corresponding shape on the screen."

The user's hand trajectories are analysed to extract extended Npen++ features in 3-D. These features, which represent the user's finger movements during the gestures, are used to train a unidirectional left-to-right hidden Markov model (HMM). The system then maps each gesture one-to-one to a shape. Finally, the shape corresponding to the recognised gesture is rendered on the display using the MuPAD interface.
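
As a rough illustration of the recognition step, the Python sketch below builds a left-to-right transition matrix, scores an observation sequence against one toy model per gesture using the forward algorithm, and looks up the shape assigned to the best-scoring gesture. The scalar observations, Gaussian emissions, number of states, gesture names and shape assignments are all assumptions made for this example. The paper's models work on 3-D Npen++ feature vectors and are trained on recorded data, which is not shown here.

```python
import math

def left_to_right_transitions(n_states, stay=0.6):
    """Transition matrix in which state i can only stay put or advance to i + 1."""
    A = [[0.0] * n_states for _ in range(n_states)]
    for i in range(n_states):
        if i == n_states - 1:
            A[i][i] = 1.0              # the last state can only repeat
        else:
            A[i][i] = stay
            A[i][i + 1] = 1.0 - stay
    return A

def gaussian_logpdf(x, mean, var):
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)

def logsumexp(values):
    m = max(values)
    if m == -math.inf:
        return -math.inf
    return m + math.log(sum(math.exp(v - m) for v in values))

def log_likelihood(obs, A, means, var=1.0):
    """Forward algorithm in log space; the model always starts in state 0."""
    n = len(A)
    log_A = [[math.log(a) if a > 0 else -math.inf for a in row] for row in A]
    alpha = [-math.inf] * n
    alpha[0] = gaussian_logpdf(obs[0], means[0], var)
    for x in obs[1:]:
        alpha = [
            logsumexp([alpha[i] + log_A[i][j] for i in range(n)])
            + gaussian_logpdf(x, means[j], var)
            for j in range(n)
        ]
    return logsumexp(alpha)

# Hypothetical per-gesture emission means (one per state); real models are trained.
MODELS = {
    "rising": [0.0, 1.0, 2.0],
    "falling": [2.0, 1.0, 0.0],
}
# Hypothetical one-to-one mapping from gesture class to rendered shape.
GESTURE_TO_SHAPE = {"rising": "cone", "falling": "sphere"}

def classify(obs):
    """Return the gesture whose model gives the observations the highest likelihood."""
    A = left_to_right_transitions(3)
    return max(MODELS, key=lambda g: log_likelihood(obs, A, MODELS[g]))

gesture = classify([0.1, 0.2, 0.9, 1.1, 2.0, 1.9])
shape = GESTURE_TO_SHAPE[gesture]
```

Because each gesture has its own model and its own entry in the mapping, supporting a new gesture in a design like this means adding one more model and one more mapping entry.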

"From a developer's perspective, the proposed framework is a typical open-ended framework," Dr. Dogra explained. "In order to add more gestures, a developer just needs to collect gesture sequence data from a number of volunteers and re-train the machine learning (ML) model for new classes. This ML model can learn a generalized representation."

As part of their study, the researchers created a dataset of 5,400 samples recorded from 10 volunteers. The dataset covers 18 geometric and 18 non-geometric shapes, including a circle, rectangle, flower, cone and sphere, among others.

"Feature selection is one of the essential parts for a typical machine learning application," Dr. Dogra said. "In our work, we have extended the existing 2-D Npen++ features in 3-D. It has been demonstrated that extended features significantly improve performance. The 3-D Npen++ features can also be utilized for other types of signals, such as body-posture detection, activity recognition, etc."

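To give a sense of what extending a 2-D pen feature into 3-D can look like, the Python sketch below computes a writing-direction feature and a turning angle from a 3-D fingertip trajectory. In 2-D, the writing direction is usually expressed as the cosine and sine of the local stroke angle; with a z coordinate, the same normalisation yields three direction cosines. This illustrates the general idea only and is not the feature set defined in the paper. The function names and the sample trajectory are hypothetical.

```python
import math

def direction_features(points):
    """Unit vector from the previous to the next sample, for each interior point.

    For 2-D points this is (cos theta, sin theta); for 3-D points it is the
    three direction cosines of the local movement.
    """
    feats = []
    for prev, nxt in zip(points, points[2:]):
        delta = [b - a for a, b in zip(prev, nxt)]
        norm = math.sqrt(sum(d * d for d in delta))
        if norm > 0:
            feats.append(tuple(d / norm for d in delta))
        else:
            feats.append((0.0,) * len(delta))   # no movement between neighbours
    return feats

def turning_angles(directions):
    """Angle in radians between consecutive direction vectors (a curvature proxy)."""
    angles = []
    for u, v in zip(directions, directions[1:]):
        dot = sum(a * b for a, b in zip(u, v))
        angles.append(math.acos(max(-1.0, min(1.0, dot))))  # clamp rounding error
    return angles

# Hypothetical fingertip positions (x, y, z) sampled over time.
trajectory = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (2, 1, 0), (2, 2, 1)]
directions = direction_features(trajectory)
angles = turning_angles(directions)
```

The same two functions accept 2-D points unchanged, which makes it easy to compare what the extra dimension adds.
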
Dr. Dogra and his colleagues evaluated their method using five-fold cross-validation and found that it achieved an accuracy of 92.87 percent. Their extended 3-D features outperformed existing 3-D features for shape representation and classification. In the future, the method could aid the development of useful human-computer interaction (HCI) applications for smart display devices.
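
For readers unfamiliar with the evaluation protocol, the Python sketch below shows how five-fold cross-validation partitions a dataset: the samples are shuffled, split into five folds, and each fold serves once as the test set while the other four are used for training. The article does not say how the folds were drawn (for example, whether they were balanced per class or per volunteer), so the plain random split, the seed and the `fit_and_score` callback are assumptions for illustration.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train, test) index lists, using each of the k folds once for testing."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

def cross_validate(n_samples, fit_and_score, k=5):
    """Average the score returned by fit_and_score(train, test) over all k folds."""
    scores = [fit_and_score(train, test)
              for train, test in k_fold_indices(n_samples, k)]
    return sum(scores) / len(scores)

# With 5,400 samples, each round trains on 4,320 samples and tests on 1,080.
splits = list(k_fold_indices(5400))
```

The reported accuracy would correspond to the value returned by a routine like `cross_validate`, given a callback that trains the gesture models on the training indices and measures accuracy on the test indices.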

"Our approach to gesture recognition is quite general," Dr. Dogra added. "We see this technology as a tool for deaf and disabled people's communication. We now want to employ the system to understand the gestures and convert them into written format or shapes, to assist people in daily life conversations. With the advent of advanced machine learning models like recurrent neural networks (RNN) and long short term memory (LSTM), there are also ample scopes in temporal signal classification."

More information: Visual rendering of shapes on 2D display devices guided by hand gestures. arXiv:1810.10581v1 [cs.HC]. arxiv.org/pdf/1810.10581.pdf

© 2018 Science X Network