February 20, 2019 feature

Researchers use computer vision to better understand optical illusions

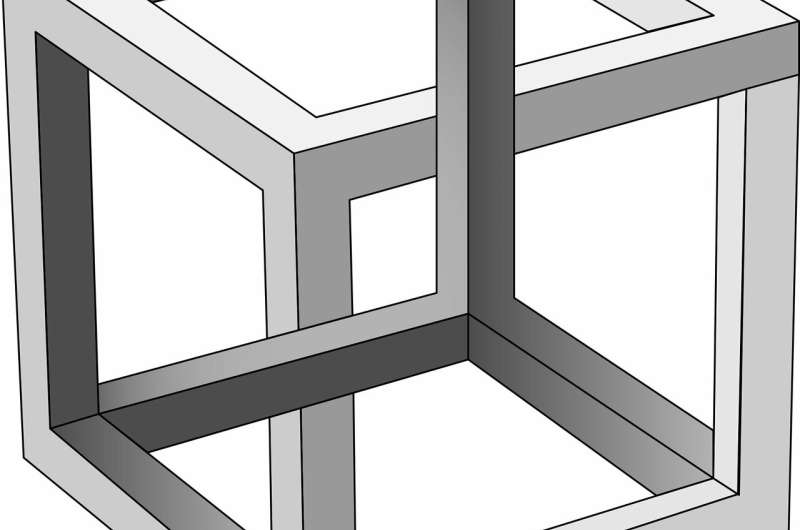

Optical illusions, images that deceive the human eye, are a fascinating research topic because studying them can provide valuable insight into human cognition and perception. Researchers at Flinders University in Australia recently used a computer vision model to predict the existence of optical illusions and the degree of their effect.

Over the past decade, researchers have attained an increasingly detailed biological understanding of how the human brain processes visual stimuli. Many existing computer vision models draw inspiration from our current understanding of visual processing. Nonetheless, some aspects of visual processing are still poorly understood and highly debated.

"Visual processing starts with the sensations of the retinal receptive fields (RFs) by the incoming light into the eyes," the researchers explained in their paper, which was pre-published on arXiv. "Retinal ganglion cells (RGCs) are the retinal output neurons that convert synaptic input from the inner plexiform layer (IPL) and carry the visual signal to the brain. The diversity of RGC types and the size dependence of each specific type to the eccentricity (the distance from the fovea) are physiological evidence for multiscale encoding of the visual scene in the retina. Consequently, low-level computational models of retinal vision have been proposed based on the simultaneous sampling of the visual scene at multiple scales."

Past research introduced a model for detecting the illusory tilts in the Café Wall illusion, which arise from the contrast between background and tilt cues. In their study, the researchers at Flinders University generalized this approach to cover a broader range of geometric illusions, as well as more complex tile illusions.

"We explore the response of a simple bio-plausible model of low-level vision on geometric/tile illusions, reproducing the misperception of their geometry, that we reported for the Café Wall and some tile illusions," the researchers wrote in their paper. "The model has until now not been verified to generalize to these other illusions, and this is what we show in this paper."

In their study, the researchers evaluated a computational filtering model that simulates the lateral inhibition of retinal ganglion cells and their responses to different geometric illusions. With this approach, they hoped to better understand these illusions and predict the degree of their effect.

"Although the misperception of orientation in tilt illusions in general may suggest physiological explanations involving orientation selective cells in the cortex, our work provides evidence for a theory that the emergence of tilt in these patterns is initiated before reaching orientation-selective cells, as a result of known retinal/cortical simple cell encoding mechanism," the researchers explained.

Overall, the findings of this study suggest that difference of Gaussians (DoG) filters, which detect edges in images, applied at multiple scales could help to explain the induced tilt in tile illusions and to uncover some of the illusory cues perceived when looking at geometric illusions. In addition, the researchers were able to link bottom-up processes to higher-level perception and cognition, in a way that is consistent with David Marr's theory of vision and edge detection.
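To give a rough sense of what multi-scale DoG filtering involves, here is a minimal Python sketch. It is not the researchers' implementation: the function names, the surround-to-center ratio of 2.0, the list of scales and the synthetic Café Wall-style pattern are all assumptions chosen for illustration. Each DoG response subtracts a wide Gaussian blur (the "surround") from a narrow one (the "center"), a common simplified stand-in for center-surround lateral inhibition.

```python
import numpy as np


def gaussian_kernel_1d(sigma, truncate=4.0):
    """Normalized 1-D Gaussian kernel, truncated at `truncate` sigmas."""
    radius = int(truncate * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum()


def gaussian_blur(image, sigma):
    """Separable Gaussian blur with reflect padding; output has the input's shape."""
    kernel = gaussian_kernel_1d(sigma)
    radius = len(kernel) // 2
    padded = np.pad(image, radius, mode="reflect")
    # Blur along rows first, then along columns.
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, kernel, mode="valid"), 1, padded
    )
    return np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="valid"), 0, rows
    )


def dog_response(image, sigma_center, surround_ratio=2.0):
    """Difference of Gaussians: narrow 'center' blur minus wide 'surround' blur.

    surround_ratio=2.0 is an illustrative assumption, not a value from the paper.
    """
    center = gaussian_blur(image, sigma_center)
    surround = gaussian_blur(image, sigma_center * surround_ratio)
    return center - surround


def multiscale_dog(image, sigmas, surround_ratio=2.0):
    """Stack DoG responses over several center scales: shape (n_scales, H, W)."""
    return np.stack([dog_response(image, s, surround_ratio) for s in sigmas])


def cafe_wall(rows=4, cols=4, tile=16, mortar=2, shift=8):
    """Hypothetical Café Wall-style test pattern.

    Rows of black/white tiles separated by mid-gray mortar lines,
    with every other row shifted horizontally by `shift` pixels.
    """
    height = rows * (tile + mortar) + mortar
    width = cols * tile
    image = np.full((height, width), 0.5)  # mortar gray everywhere
    xs = np.arange(width)
    for r in range(rows):
        y0 = r * (tile + mortar) + mortar
        offset = shift if r % 2 else 0
        image[y0:y0 + tile, :] = ((xs + offset) // tile) % 2
    return image


if __name__ == "__main__":
    pattern = cafe_wall()
    responses = multiscale_dog(pattern, sigmas=[1.0, 2.0, 4.0])
    for sigma, response in zip([1.0, 2.0, 4.0], responses):
        print(f"sigma={sigma}: max |DoG| = {np.abs(response).max():.3f}")
```

Plotting each slice of `responses` as an image shows how the edge map changes with scale; in this toy setup the fine scales respond mainly to tile and mortar edges, while coarser scales blend neighboring tiles together. Whether the output resembles the tilt cues reported in the paper depends on parameter choices not specified here.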

Current computer vision models for analyzing geometrical illusions are fairly complex, which can make them harder to apply in research studies. According to the researchers, future studies should try to devise simpler and more biologically plausible methods to detect visual cues.

"We believe that further exploration of the role of simple Gaussian-like models in low level retinal processing, and Gaussian kernels in early stage DNNs, and its prediction of loss of perceptual illusion will lead to more accurate computer vision techniques and models and can potentially steer computer vision towards or away from the features that humans detect," the researchers wrote. "These effects can, in turn, be expected to contribute to higher-level models of depth and motion processing and generalized to computer understanding of natural images."

More information: Informing computer vision with optical illusions. arXiv:1902.02922 [cs.CV]. arxiv.org/abs/1902.02922

© 2019 Science X Network