March 26, 2019 feature

An end-to-end CNN-based method to detect multiplayer violence

Researchers at China University of Petroleum (CUP), in Beijing, have recently developed a new method for multiplayer violence detection based on deep 3-D convolutional neural networks (CNNs). Their method was presented in a paper published in ICNCC 2018: Proceedings of the 2018 VII International Conference on Network, Communication and Computing.

In recent years, advances in computer vision and artificial intelligence (AI) have led to the development of increasingly sophisticated video surveillance systems, which can help local authorities to prevent crime and monitor public spaces more effectively. Despite these developments, most current real-time monitoring systems still rely on human operators reviewing footage manually, which is time-consuming and can mean that some illicit activities go undetected.

Researchers have thus been trying to develop intelligent and high-precision surveillance systems that would allow authorities to identify unusual behavior more quickly and effectively. Adding smart video analysis modules to a monitoring system would ultimately allow it to autonomously analyze information and spot abnormal situations.

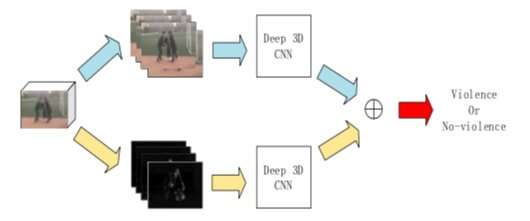

One of the key priorities in the field of security and surveillance is identifying violent behavior in public spaces in order to intervene promptly and ensure the safety of other members of the community. With this in mind, the team of researchers at CUP set out to develop a machine learning method that can detect violent behavior quickly, simply by analyzing video surveillance footage. The method proposed by the researchers uses a 3-D CNN, which is trained to analyze videos and detect violent acts carried out by multiple people.
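For readers curious about what a 3-D convolution actually computes, here is a minimal pure-Python sketch. It is not the researchers' network: the function name `conv3d_valid`, the toy clip and the two-frame kernel are all illustrative assumptions. The point is only that the kernel spans several frames at once, so its output responds to change over time as well as to spatial patterns.

```python
from typing import List

# A single-channel video clip indexed as [time][row][col].
Volume = List[List[List[float]]]


def conv3d_valid(clip: Volume, kernel: Volume) -> Volume:
    """Slide a 3-D kernel over a clip with stride 1 and no padding.

    Like most deep learning libraries, this computes a cross-correlation
    (the kernel is not flipped).
    """
    T, H, W = len(clip), len(clip[0]), len(clip[0][0])
    kt, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    out: Volume = []
    for t in range(T - kt + 1):
        plane = []
        for y in range(H - kh + 1):
            row = []
            for x in range(W - kw + 1):
                acc = 0.0
                # Sum over a small block of pixels across several frames.
                for dt in range(kt):
                    for dy in range(kh):
                        for dx in range(kw):
                            acc += clip[t + dt][y + dy][x + dx] * kernel[dt][dy][dx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


# Toy clip (assumption): 4 frames of 2x2 pixels, brightness rising by 1 per frame.
toy_clip = [[[float(t)] * 2 for _ in range(2)] for t in range(4)]
# Toy kernel (assumption): spans 2 frames and subtracts the earlier from the later.
frame_diff_kernel = [[[-1.0]], [[1.0]]]

features = conv3d_valid(toy_clip, frame_diff_kernel)
print(len(features), len(features[0]), len(features[0][0]))
```

With this toy kernel, each output value is simply the difference between consecutive frames, a crude motion cue. A trained 3-D CNN learns many such kernels, typically larger and stacked in layers, rather than relying on a hand-picked one.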

"Violence detection in crowded scenes (such as shopping malls, banks and stadiums) is significantly important, but little research has been done [in this area]," the researchers wrote in their paper. "Based on this situation, this paper proposes a multiplayer violence detection method based on a deep three-dimensional convolutional neural network (3-D CNN) that extracts the spatiotemporal feature information of multiplayer violence."

Currently, there are two types of methods for detecting violence in videos. The first type entails the use of traditional feature extraction and a classifier, while the second employs deep learning techniques. The new method devised by the researchers falls into the latter category; past studies suggest that deep learning models for violence detection are more convenient and effective than traditional approaches.

To train and evaluate their method, the researchers used 500 multiplayer violence videos and 500 multiplayer nonviolent videos, with resolutions of up to 1920×1080 pixels. Their CNN model for violence detection is inspired by a network developed by Facebook AI Lab in 2014.
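A data set with equal numbers of violent and nonviolent clips is usually divided so that both the training and the test portion keep that balance. The sketch below shows one common way to do this. Only the 500/500 class counts come from the article; the clip file names, the 80/20 ratio and the function name `stratified_split` are hypothetical, and the researchers' actual protocol may differ.

```python
import random
from typing import List, Tuple

# (clip name, label), where 1 = violent and 0 = nonviolent.
Sample = Tuple[str, int]


def stratified_split(
    samples: List[Sample], test_fraction: float, seed: int = 0
) -> Tuple[List[Sample], List[Sample]]:
    """Split samples into train and test sets, preserving the class ratio."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train: List[Sample] = []
    test: List[Sample] = []
    for label in (0, 1):
        group = [s for s in samples if s[1] == label]
        rng.shuffle(group)
        n_test = round(len(group) * test_fraction)
        test.extend(group[:n_test])
        train.extend(group[n_test:])
    return train, test


# Hypothetical file names standing in for the 500 + 500 clips.
samples = [(f"violent_{i:03d}.mp4", 1) for i in range(500)]
samples += [(f"nonviolent_{i:03d}.mp4", 0) for i in range(500)]

# The 80/20 ratio is an assumption made for illustration.
train, test = stratified_split(samples, test_fraction=0.2)
print(len(train), len(test))
```

Splitting each class separately guarantees that the test set contains the same proportion of violent clips as the full data set, which keeps accuracy figures easy to interpret.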

To test their method, the researchers carried out a series of experiments on an Nvidia Tesla K80 GPU. Their method was found to be highly accurate, outperforming three traditional violence detection approaches that rely on hand-crafted features. In the future, their 3-D CNN could be developed further, allowing users to also determine the location of the violent conflicts that are happening in videos.
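Comparisons between detectors of this kind typically come down to a few standard metrics computed on held-out clips. As a rough sketch of how such figures are derived, the snippet below computes accuracy, precision and recall for a binary violent/nonviolent classifier. The function name `binary_metrics` and the eight toy labels are made up for illustration and are not results from the paper.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision and recall, treating label 1 (violent) as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # violence correctly flagged
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # calm scene correctly passed
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarm
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed violence
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Toy labels (assumption), NOT data from the study.
toy_truth = [1, 1, 1, 0, 0, 0, 1, 0]
toy_preds = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = binary_metrics(toy_truth, toy_preds)
print(metrics)
```

In a surveillance setting, recall matters because a missed violent incident is costly, while precision matters because too many false alarms would overwhelm human operators.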

More information: End-to-end multiplayer violence detection based on deep 3-D CNN. DOI: 10.1145/3301326.3301367. dl.acm.org/citation.cfm?id=3301367

Learning spatiotemporal features with 3-D convolutional networks. arXiv:1412.0767 [cs.CV]. arxiv.org/abs/1412.0767

© 2019 Science X Network