March 16, 2019 weblog

Rock, scissors, flower, box. Lookout informs blind

Microsoft and Google appear to be making 2019 a year of impressive gains in using AI as an enabling technology for people with low vision and blindness. Both companies have recently announced good news about apps that can help millions navigate the world around them.

Seeing AI from Microsoft is available in the iOS App Store as an update; now Google's Lookout is available for download as an Android app. Lookout was announced last year and is, for now, limited to Pixel devices in the US and to US English. Google describes extended use as 1–2 hours or more on a Pixel device.

How it works: Simple. Open the app. Point the phone at what you are trying to see. Keep your phone pointed forward. The app tells you what it is. For ease of use, the user can hang the Pixel phone from a lanyard around the neck or place it in a shirt front pocket. Or, with the camera facing outward, the device can also be held in the hand, according to the Help page.

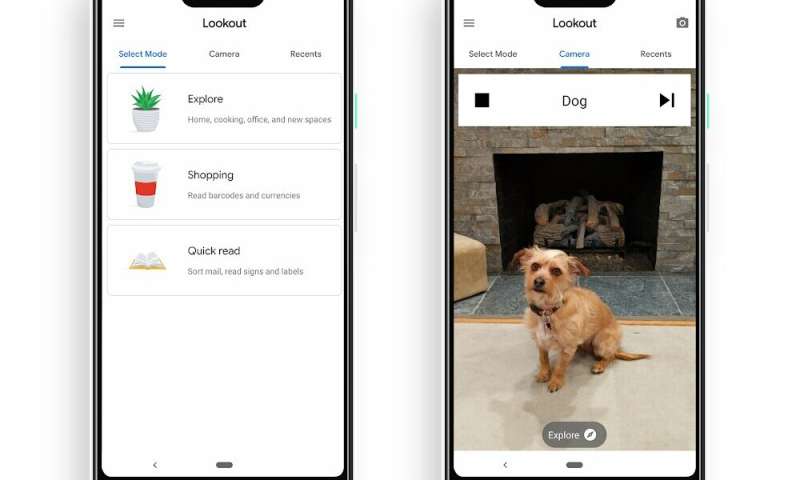

Android Police had further information about how the app works. It does so in three modes: "one to help explore the world and assist with cooking, one while shopping for reading barcodes and seeing currency, and the last for reading pieces of text on mail, signs, labels, and more." Rita El Khoury said that, upon launch, it asks you "which mode you want to use, so as not to cumber you with navigating menus."

Lookout "looks out" for people, text and objects as the person moves through a space. And now Lookout is finally available for users to try out.

El Khoury wrote in Android Police: "If Google Lens can identify a dog's breed from a photo, there's nothing stopping it from using the same tech to help visually-impaired people, and that's where Lookout comes in."

Lookout, in brief, tells you about people, text, objects and more as you move through a space. It detects items in the scene and takes a best guess at what they are.

"Google has been testing it since it was announced almost a year ago," said AndroidHeadlines, and "is warning that as with any new technology, the results may not be 100-percent accurate in Lookout. That is why the app is only available on Pixel smartphones for now, as it is looking to get some more feedback from early users."

"Since we announced Lookout at Google I/O last year," said Patrick Clary, "we've been working on testing and improving the quality of the app's results."

The app is designed to help its users in (1) learning about a new space for the first time, (2) reading text and documents, and (3) completing daily routines such as cooking, cleaning and shopping.

Lookout uses computer vision, making use of the device camera and sensors to recognize objects and text.

What's next: The team aims to continue to improve the app. They asked for feedback. "We're very interested in hearing your feedback and learning about times when Lookout works well (and not so well)."

It is available for download on Google Play.

One user comment posted on March 13 from Michael Hendricks said, "Quick read mode is amazing. Point it at a book or sign and it reads it out loud. Explore mode is not as accurate and a little slow, but still a remarkable technology...Overall, this is a fantastic start to a new app."

More information: www.blog.google/outreach-initi … urroundings-help-ai/

© 2019 Science X Network