May 10, 2019 feature

A face-following robot arm with emotion detection

Researchers at Universitat Autònoma de Barcelona (UAB) have recently developed a face-following robotic arm with emotion detection inspired by Pixar Animation Studios' Luxo Jr. lamp. This robot was presented by Vernon Stanley Albayeros Duarte, a computer science graduate at UAB, in his final thesis.

"The idea behind our robot is largely based on Pixar's Luxo Jr. lamp shorts," Albayeros Duarte told TechXplore. "I wanted to build a robot that mimicked the lamp's behavior in the shorts. I'm very interested in the maker scene and have been 3D printing for a few years, so I set out to build a 'pet' of sorts to demonstrate some interesting human-machine interactions. This is where the whole 'face following/emotion detection' theme comes from, as having the lamp jump around like those in the Pixar shorts proved very difficult to implement, but still retained the 'pet-toy' feel about the project."

As this study was part of Albayeros Duarte's coursework, he had to meet certain requirements outlined by UAB. For instance, the main objective of the thesis was for students to learn about Google's cloud services and how these can be used to offload computation in projects whose hardware is not powerful enough to handle it.

The Raspberry Pi is a tiny, affordable computer with substantial computational limitations. These limitations make it the perfect candidate to explore the use of Google's cloud platform for computationally intensive tasks, such as emotion detection.

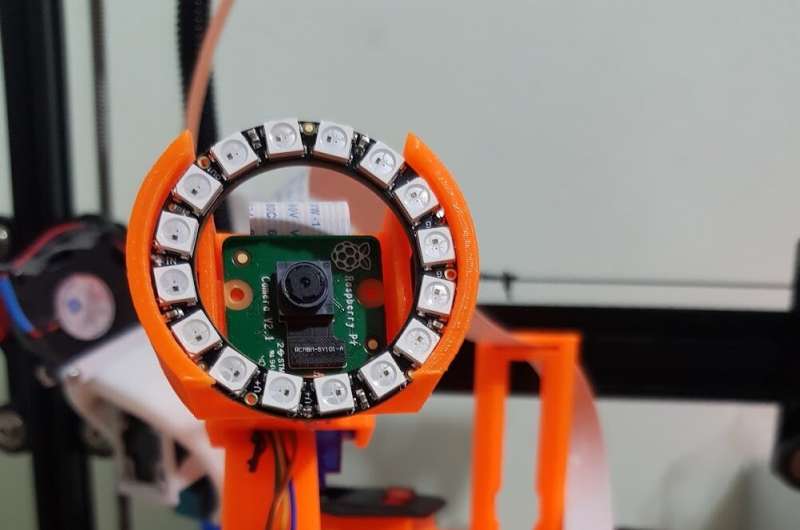

Albayeros Duarte thus decided to use a Raspberry Pi to develop a small robot with emotion detection capabilities. His robot's main body is the LittleArm 2C, a robotic arm created by Slant Concepts founder Gabe Bentz.

"I reached out to Slant Concepts to ask for permission to modify their robot arm so that it would hold a camera at the end, then created the electronics enclosure and base myself," Albayeros Duarte said.

The robot designed by Albayeros Duarte 'sweeps' a camera from left to right, capturing photos and using OpenCV, a library of programming functions that is often used for computer vision applications, to detect a face within each frame. When the robot reaches the end of either side, it raises or lowers the camera by a couple of degrees and resumes its sweeping movement.
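
The sweep described above can be sketched as a simple generator of servo angles. This is only an illustration of the pattern, not the code from the thesis: the function name `sweep_positions`, the angle limits and the step sizes are all assumptions, and the face detection call itself is left out.

```python
from itertools import islice
from typing import Iterator, Tuple


def sweep_positions(
    pan_min: int = 0, pan_max: int = 180, pan_step: int = 5,
    tilt_min: int = 60, tilt_max: int = 120, tilt_step: int = 2,
    tilt_start: int = 90,
) -> Iterator[Tuple[int, int]]:
    """Yield (pan, tilt) angles for an endless left-right sweep.

    All limits and step sizes are hypothetical defaults, in degrees.
    """
    pan, tilt = pan_min, tilt_start
    pan_dir, tilt_dir = 1, 1
    while True:
        yield pan, tilt
        next_pan = pan + pan_dir * pan_step
        if next_pan > pan_max or next_pan < pan_min:
            # End of a sweep: reverse the pan direction and nudge the tilt
            # by a couple of degrees, bouncing off the tilt limits.
            pan_dir = -pan_dir
            next_tilt = tilt + tilt_dir * tilt_step
            if next_tilt > tilt_max or next_tilt < tilt_min:
                tilt_dir = -tilt_dir
                next_tilt = tilt + tilt_dir * tilt_step
            tilt = next_tilt
        else:
            pan = next_pan


if __name__ == "__main__":
    # Print the first few positions of a sweep with the default limits.
    for pan, tilt in islice(sweep_positions(), 5):
        print(pan, tilt)
```

In a real robot, each yielded position would be sent to the servos and followed by a camera capture and a face detection pass before moving on.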

"When it finds a face, the robot stops the sweeping movement and checks if the face stays within the field of view for more than a handful of frames," Albayeros Duarte explained. "This ensures that it doesn't 'play' with false positives in face detection. If the robot confirms that it has in fact found a face, it switches to the 'face following' part of the algorithm, where it tries to keep the face centered within it's field of view. To do this, it pans and tilts according to the movements of the person it's observing."

While the robot is following the movements of the person in its field of view, it takes a picture of their face and sends it to Google's Cloud Vision API. Google's platform subsequently analyzes the image and detects the current emotional state of the person in it, classifying it as one of five emotional states: joy, anger, sorrow, surprise or neutral.
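
The Cloud Vision face detection response reports, for each detected face, a likelihood rating for joy, sorrow, anger and surprise on a scale running from VERY_UNLIKELY to VERY_LIKELY. The sketch below shows one way such ratings could be reduced to a single label. It works on plain strings so that it runs without cloud credentials, and the `dominant_emotion` helper, the POSSIBLE threshold and the rule that "neutral" means no emotion reached the threshold are assumptions, not details confirmed by the thesis.

```python
from typing import Dict

# Ordering of the likelihood ratings used by the Cloud Vision API.
LIKELIHOOD = {
    "UNKNOWN": 0,
    "VERY_UNLIKELY": 1,
    "UNLIKELY": 2,
    "POSSIBLE": 3,
    "LIKELY": 4,
    "VERY_LIKELY": 5,
}

EMOTIONS = ("joy", "anger", "sorrow", "surprise")


def dominant_emotion(likelihoods: Dict[str, str], threshold: str = "POSSIBLE") -> str:
    """Pick the most likely emotion, or 'neutral' if none is likely enough.

    `likelihoods` maps emotion names to rating names, for example
    {"joy": "VERY_LIKELY", "anger": "VERY_UNLIKELY"}. Missing emotions are
    treated as UNKNOWN. The threshold is a hypothetical tuning parameter.
    """
    def score(emotion: str) -> int:
        return LIKELIHOOD[likelihoods.get(emotion, "UNKNOWN")]

    best = max(EMOTIONS, key=score)  # ties resolve in EMOTIONS order
    return best if score(best) >= LIKELIHOOD[threshold] else "neutral"
```

With the official Python client, the same ratings would come from the fields of each face annotation; only the mapping step is shown here.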

"When the robot receives the results of this analysis, it mimics whatever emotional state the user is in," Albayeros Duarte said. "For joy it jumps around a bit, for anger it does a small headshake in disapproval, for sorrow it droops down to the ground and looks up to you, and for surprise it moves backward. The robot also has a LED ring capable of the full RGB color gamut, which it uses to complement these actions."

Depending on the emotion it detects, the robot's 'sweeping behavior' changes. If it detects joy, it sweeps a little faster; for anger, it moves as fast as possible (without compromising the quality of its face detection); for sorrow, it sweeps in a downward or 'droopy' position; and for surprise, it shakes randomly while sweeping. In each of these 'modes', the robot flashes different colors on its RGB LED ring: yellow and warm colors for joy, bright red for anger, blue and cold colors for sorrow, and a mix of yellow and green for surprise.
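
The emotion-to-behavior mapping lends itself to a small lookup table. The following sketch encodes the modes described above. The names `Mode`, `MODES` and `mode_for` are hypothetical, the RGB values are rough guesses at the named colors, and the fallback for a neutral result is an assumption, since the article does not say what the robot does in that case.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

RGB = Tuple[int, int, int]


@dataclass(frozen=True)
class Mode:
    sweep: str                # label for how the sweep is altered
    colors: Tuple[RGB, ...]   # colors flashed on the LED ring


MODES: Dict[str, Mode] = {
    # Yellow and warm colors, slightly faster sweep.
    "joy": Mode("faster", ((255, 200, 0), (255, 120, 0))),
    # Bright red, fastest sweep that still allows face detection.
    "anger": Mode("fastest", ((255, 0, 0),)),
    # Blue and cold colors, camera held in a lowered position.
    "sorrow": Mode("droopy", ((0, 0, 255), (0, 160, 255))),
    # Mix of yellow and green, random shaking while sweeping.
    "surprise": Mode("shaky", ((255, 255, 0), (0, 255, 0))),
}

# Assumed fallback for "neutral" or unrecognized labels.
DEFAULT_MODE = Mode("normal", ((255, 255, 255),))


def mode_for(emotion: str) -> Mode:
    """Look up the sweep mode and LED colors for a detected emotion."""
    return MODES.get(emotion, DEFAULT_MODE)
```

Keeping the mapping in a table rather than in branching code makes it easy to add or retune a mode without touching the control loop.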

"I believe that there is a huge untapped potential for 'pet-like' robots," Albayeros Duarte said. "From making personal assistants like Amazon's Alexa and the Google Assistant more interactive and natural-feeling, to potentially helping disabled people become more self-sufficient through their help, having a robot that responds to your current emotional state can have a huge impact on the perception of these devices. For instance, an assistant for elderly people capable of recognizing emotional distress could send out early warnings should they need sanitary assistance, while a robot used to help develop motor skills in movement-impaired children could detect whether the child is losing interest or is becoming more engaged in an activity and adjust its difficulty accordingly."

In addition to being an excellent example of how Google's cloud platform can be used to offload computation, Albayeros Duarte's project provides a set of models for 3D printing that could be used to reproduce his robot or create adaptations of it, along with a bill of materials. At the moment, the researcher is also collaborating with Fernando Vilariño, Associate Director at the Computer Vision Centre (CVC) and President of the European Network of Living Labs (ENoLL), on a project aimed at inspiring younger generations to pursue careers in STEM, as well as on building the physical computing community at UAB, which is open to everyone interested in creating their own projects.

"We have been at Barcelona's Youth Mobile Festival, a youth-oriented Mobile World Congress (MWC) organized by the same people as the MWC," Albayeros Duarte said. "Dipping our toes into interactive robots such as this one is a good way to both build something that instantly gets the attention of the school groups at these events and teaches us more about robotics at the consumer level, as opposed to industrial-level robotics."

More information: Face-following robot arm with emotion detection. ddd.uab.cat/record/203329

© 2019 Science X Network