May 24, 2019 weblog

Mona Lisa guest on TV? Researchers work out talking heads from photos, art

A paper discussing an artificial intelligence feat now up on arXiv is giving tech watchers yet another reason to feel this is the Age of Enfrightenment.

"Few-Shot Adversarial Learning of Realistic Neural Talking Head Models," by Egor Zakharov, Aliaksandra Shysheya, Egor Burkov and Victor Lempitsky, reveals a technique that can turn photos and paintings into animated talking heads. Author affiliations include the Samsung AI Center in Moscow and the Skolkovo Institute of Science and Technology.

The key player in all this? Samsung. It opened AI research centers in Moscow, Cambridge and Toronto last year, and the result may well be more headline-making moments in AI history.

Yes, the Mona Lisa can look as if she is telling her TV host why she favors leave-in hair conditioners. Albert Einstein can look as if he is speaking in favor of no hair products at all.

They wrote that "we consider the problem of synthesizing photorealistic personalized head images given a set of face landmarks, which drive the animation of the model." Even one-shot learning, from a single frame, is possible.

Khari Johnson of VentureBeat noted that the system can generate realistic animated talking heads from images without relying on traditional methods such as 3D modeling.

The authors highlighted that "Crucially, the system is able to initialize the parameters of both the generator and the discriminator in a person-specific way, so that training can be based on just a few images and done quickly, despite the need to tune tens of millions of parameters."

What is their approach? Ivan Mehta in The Next Web walked readers through the steps that form their technique.

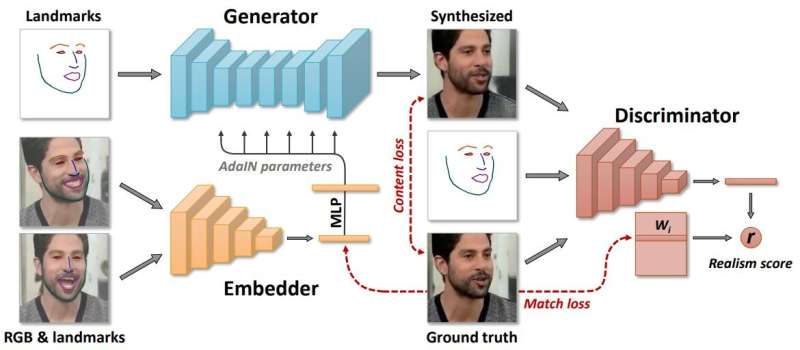

"Samsung said that the model creates three neural networks during the learning process. First, it creates an embedded network that links frames related to face landmarks with vectors. Then using that data, the system creates a generator network which maps landmarks into the synthesized videos. Finally, the discriminator network assesses the realism and pose of generated frames."
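For readers who want to see how the three networks Mehta describes hand data to one another, here is a toy Python sketch of the data flow only. It is not the authors' code: `embedder`, `person_embedding`, `generator` and `discriminator` are hypothetical stand-ins for large neural networks, "images" are short lists of numbers, and averaging the per-frame vectors into one person-specific embedding is a simplified reading of the paper.

```python
from statistics import fmean

# Toy "frames" and "landmark images" are flat lists of floats.
# Every function below is hypothetical and stands in for a deep network.


def embedder(frame, landmarks):
    """Hypothetical embedder: maps a (frame, landmarks) pair to a vector."""
    return [fmean(frame), fmean(landmarks)]


def person_embedding(frames, landmark_sets):
    """Average the per-frame vectors into one person-specific embedding."""
    vectors = [embedder(f, l) for f, l in zip(frames, landmark_sets)]
    return [fmean(component) for component in zip(*vectors)]


def generator(landmarks, embedding):
    """Hypothetical generator: turns a landmark image into a 'frame',
    conditioned on the person embedding (here a simple scale and shift)."""
    scale, shift = embedding
    return [scale * x + shift for x in landmarks]


def discriminator(frame, landmarks):
    """Hypothetical discriminator: a score in (0, 1] that is higher when the
    frame agrees more closely with the landmarks it is paired with."""
    mse = fmean((a - b) ** 2 for a, b in zip(frame, landmarks))
    return 1.0 / (1.0 + mse)


if __name__ == "__main__":
    frames = [[1.0, 2.0], [3.0, 4.0]]
    landmarks = [[0.0, 0.0], [2.0, 2.0]]
    emb = person_embedding(frames, landmarks)  # [2.5, 1.0]
    fake = generator([0.0, 1.0], emb)          # [1.0, 3.5]
    print(emb, fake, discriminator(fake, [0.0, 1.0]))
```

In the real system each stage is a high-capacity network trained adversarially; the sketch only shows which inputs each stage consumes and what it passes to the next.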

The authors described a system that performs "lengthy meta-learning" on a large dataset of videos and is then able to frame few- and one-shot learning of neural talking head models of previously unseen people as adversarial training problems, with high-capacity generators and discriminators.

Who would actually use this system? Reports mentioned telepresence, multi-player games and the special-effects industry.

Nonetheless, Johnson and other reporters were not about to ignore the risk of such advances falling into the wrong hands, where the mischievous could produce fakery with bad intentions.

"Such tech could clearly also be used to create deepfakes," Johnson wrote.

That thought is worth pausing on. Writers now refer so casually to the "deepfake" results that come out of some artificial intelligence projects, and they are wondering what this Samsung advance might mean for deepfakes.

Jon Christian had an overview in Futurism. "Over the past few years, we've seen the rapid rise of 'deepfake' technology that uses machine learning to analyze footage of real people—and then churn out convincing video of them doing things they never did or saying things they never said."

Joan Solsman in CNET: "The rapid advancement of artificial intelligence means that any time a researcher shares a breakthrough in deepfake creation, bad actors can begin scraping together their own jury-rigged tools to mimic it."

Interestingly, the more aware the public becomes of AI fakery, the more readily people may accept that some animations are fake. Or not? A viewer comment on the video page read: "In the future, blackmailing is impossible because everyone knows you can easily create a video out of anything."

More information: Few-Shot Adversarial Learning of Realistic Neural Talking Head Models, arXiv:1905.08233 [cs.CV] arxiv.org/abs/1905.08233v1

© 2019 Science X Network