Expecting the unexpected: A new model for cognition

Cognitive scientists use computer simulations to model the inner workings of the human brain, but many current models are inaccurate. Researchers in the Cognitive Neurorobotics Unit at the Okinawa Institute of Science and Technology Graduate University (OIST) have developed a computer model, inspired by known biological brain mechanisms, of how the brain learns and recognizes new information and then makes predictions about incoming sensory input.

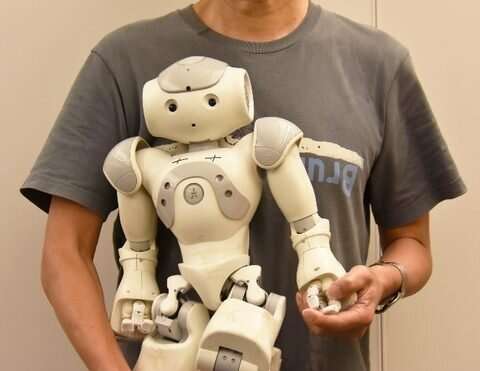

The model may enable robots to "socialize" by predicting and imitating each other's behaviors. It may also help reveal the cognitive underpinnings of Autism Spectrum Disorder.

"Our knowledge of the past informs our expectations for the present," said Professor Jun Tani, a co-author of the new study, published in Neural Computation. "However, we often encounter situations that defy our expectations. We're developing models that can deal with the unpredictability of everyday life."

Tani and his collaborator, former OIST postdoctoral fellow Ahmadreza Ahmadi, worked with a type of model called a recurrent neural network (RNN). Their RNN draws on predictive coding, a theory proposing that the brain continually makes predictions about incoming sensory information such as sounds and images. Errors, the discrepancies between the brain's predictions and reality, are propagated back through layers of processing networks. This process, known as backpropagation, lets the RNN adjust to events that occur irregularly, so that it can predict future sensory inputs.
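The core idea of learning from prediction errors can be sketched in a few lines. This is a deliberately simplified illustration, not the authors' model: instead of an RNN, it assumes a single linear one-step predictor (the functions `train_next_step_predictor` and `mean_squared_error` are hypothetical names chosen here). At each step the predictor guesses the next input, compares the guess with what actually arrives, and nudges its parameters to shrink that error by gradient descent. A real RNN applies the same principle but propagates errors back through its layers and through time.

```python
def train_next_step_predictor(seq, lr=0.05, epochs=200):
    """Fit x[t+1] ~ w * x[t] + b by repeatedly reducing prediction error.

    Simplified stand-in for error-driven learning; not an RNN.
    """
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x_now, x_next in zip(seq, seq[1:]):
            prediction = w * x_now + b      # expectation about the next input
            error = prediction - x_next     # discrepancy between expectation and reality
            # Gradient step on 0.5 * error**2 with respect to w and b
            w -= lr * error * x_now
            b -= lr * error
    return w, b


def mean_squared_error(seq, w, b):
    """Average squared one-step prediction error over the sequence."""
    errors = [(w * x + b - y) ** 2 for x, y in zip(seq, seq[1:])]
    return sum(errors) / len(errors)


# Usage: a regular sequence generated by the rule x[t+1] = -0.8 * x[t] + 0.5
seq = [3.0]
for _ in range(19):
    seq.append(-0.8 * seq[-1] + 0.5)

w, b = train_next_step_predictor(seq)
print(w, b)  # should end up close to w = -0.8, b = 0.5
print(mean_squared_error(seq, 0.0, 0.0), mean_squared_error(seq, w, b))
```

Because the error signal is the only thing driving the updates, the predictor improves exactly where its expectations were wrong, which is the intuition behind predictive coding.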

Between Order and Randomness

Effective neural networks straddle the line between order and randomness. To optimize their model, the researchers introduced a parameter called a "meta prior" into the learning process. A setting closer to one produced a more certain but more complex account of detailed sensory information, whereas a setting closer to zero reduced complexity by allowing more uncertainty.
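In variational RNNs, a weighting parameter of this kind typically scales a "complexity" term (a KL divergence between the network's posterior and prior beliefs) against an "accuracy" term (the prediction error). The sketch below assumes that form with one-dimensional Gaussian beliefs; the function names and the exact objective are illustrative assumptions, not the authors' published equations. It only shows how a single weight shifts the balance between the two terms.

```python
import math


def gaussian_kl(mu_q, sigma_q, mu_p, sigma_p):
    """KL(q || p) between univariate Gaussians N(mu_q, sigma_q^2) and N(mu_p, sigma_p^2)."""
    return (math.log(sigma_p / sigma_q)
            + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2 * sigma_p ** 2)
            - 0.5)


def weighted_objective(prediction, target, mu_q, sigma_q, mu_p, sigma_p, meta_prior):
    """Hypothetical loss: accuracy term plus a meta-prior-weighted complexity term."""
    accuracy_term = 0.5 * (prediction - target) ** 2            # squared prediction error
    complexity_term = gaussian_kl(mu_q, sigma_q, mu_p, sigma_p)  # divergence from the prior
    return accuracy_term + meta_prior * complexity_term


# Usage: same prediction and beliefs, three different weightings
for meta_prior in (0.0, 0.5, 1.0):
    total = weighted_objective(1.0, 0.0, 0.5, 1.0, 0.0, 1.0, meta_prior)
    print(meta_prior, total)  # 0.5, 0.5625, 0.625
```

With the weight at zero, only prediction accuracy matters; as it grows toward one, departures from the prior are penalized more heavily, so training must trade the two objectives against each other.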

Tani and his team trained their RNN on sequential data that were partly regular and partly random. They also used their model to program a robot to learn to imitate another robot that performed specific movement patterns in a random order.

The researchers found that an intermediate value of the meta prior, a number between zero and one, allowed the RNNs to generate the most accurate predictions in both cases.

Aside from studying social development and cognition, the research team hopes to explore the potential of their network for modeling autism spectrum disorder (ASD). Tani believes that people with ASD tend to minimize error by developing complex internal representations of reality, which can be modeled with a high meta-prior setting. As a result, individuals with ASD may lack the ability to generalize and often prefer to interact repeatedly with the same environment to avoid errors and unfamiliar social interactions.

The researchers therefore believe that finding a mechanism in the human brain akin to the meta prior may inform future ASD therapies.

More information: Ahmadreza Ahmadi et al. A Novel Predictive-Coding-Inspired Variational RNN Model for Online Prediction and Recognition, Neural Computation (2019). DOI: 10.1162/neco_a_01228