April 18, 2019 feature

A neurorobotics approach for building robots with communication skills

Researchers at the Okinawa Institute of Science and Technology have recently proposed a neurorobotics approach that could aid the development of robots with advanced communication capabilities. Their approach, presented in a paper pre-published on arXiv, is based on two key features: stochastic neural dynamics and prediction error minimization (PEM).

"Our research broadly focuses on building robots based on the key principles of the brain," Jungsik Hwang, one of the researchers who carried out the study, told TechXplore. "In this study, we focused on the prediction error minimization (PEM) principle. The main idea is that the brain is a prediction machine, making predictions consistently and minimizing prediction error when a prediction differs from observations. This theory has been widely applied to explain many aspects of cognitive behaviors. In this study, we tried to examine whether this principle can be applied to a social situation."

In recent years, researchers have carried out numerous studies aimed at artificially replicating the innate communication abilities of many animals, including humans. While many of these studies have achieved promising results, most existing solutions do not achieve human-comparable accuracy.

"One of the challenging tasks for a robot with communication capabilities is recognizing another's intention behind observed behavior," Hwang explained. "A common approach for solving this problem is to consider it as a classification task. The objective then becomes to obtain the correct label (user intention) with given observation (user behavior) using the classifier. These days, the popular choice for such classifiers is deep neural network models, such as convolutional neural networks (CNNs) and long short-term memory (LSTM)."

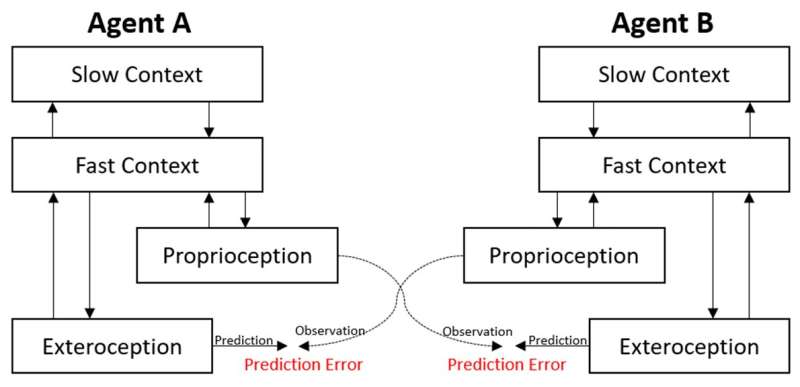

In their study, Hwang and his colleagues proposed a different approach for solving this problem based on stochastic neural dynamics and PEM. The researchers applied their approach to two small humanoid robots, called ROBOTIS OP2, and tested it in different situations that involved human-robot and robot-robot interactions.

"Using our approach, the robot consistently makes predictions about the behavior of the agent that it is interacting with," Hwang said. "When a prediction is different from their observation, the robot updates its belief so that the correct prediction can be made (i.e. minimizing prediction error). Therefore, in this approach intention recognition is not a classification task, but an active process that involves updating 'beliefs' to understand what has happened in the recent past. In machine learning terms, this can be considered as a kind of online learning."

In preliminary evaluations using humanoid robots, the researchers found that being able to predict others' behavior and minimize prediction error played a key role in social situations. Using their approach, the robots were able to imitate the actions of the agents they were interacting with: a human user in human-robot interaction (HRI) settings and another robot in robot-robot interaction (RRI) settings. Without the approach, by contrast, the robots' interactions with other agents were marked by mundane patterns and repetitive behaviors.
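The predict, compare and update loop that Hwang describes can be illustrated with a toy example. The sketch below is not the researchers' model, which relies on stochastic neural dynamics. It assumes a hypothetical linear generative model (the matrix `W`), a two-dimensional "belief" vector standing in for the inferred intention, and plain gradient descent on the squared prediction error. All names and values are illustrative.

```python
import numpy as np

# Hypothetical linear generative model (an assumption for illustration):
# maps a 2-D latent "belief" about the other agent's intention
# to a 3-D predicted observation of its behavior.
W = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [0.5, 0.5]])

def predict(belief):
    """Predict the observed behavior from the current belief."""
    return W @ belief

def update_belief(belief, observation, lr=0.2):
    """Take one gradient step that reduces the squared prediction error.

    Returns the updated belief and the error measured before the update.
    """
    error = predict(belief) - observation
    grad = W.T @ error  # gradient of 0.5 * ||W @ belief - observation||^2
    return belief - lr * grad, float(0.5 * error @ error)

# The other agent's true intention is hidden; only its behavior is observed.
true_intention = np.array([1.0, -0.5])
observation = predict(true_intention)

belief = np.zeros(2)  # initial belief, before any evidence
errors = []
for _ in range(100):
    belief, err = update_belief(belief, observation)
    errors.append(err)

print("final belief:", belief)
print("first error:", errors[0], "last error:", errors[-1])
```

In this toy setting the belief drifts toward the hidden intention as the prediction error shrinks. The point is that recognition happens through repeated belief updates during the interaction, rather than through a single pass of a pre-trained classifier.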

"By means of the PEM mechanism, the robot can not only quickly adapt to a changing environment but also forecast what's going to happen in the future," Hwang explained. "This method can thus be applied to other ambient intelligence services in which AI consistently makes predictions about users and adapts to them, or even proactively provides suggestions based on past observations."

In the future, the approach developed by Hwang and his colleagues could inform the development of robots with better communication capabilities. Interestingly, the researchers also observed that when two robots interacted with each other using their approach, some new and unusual communication patterns emerged, suggesting that their approach enables a more advanced type of communication.

"There are still many interesting research directions that can be explored in this setting," Hwang said. "For instance, I'm interested in having a gestural Turing test in which a user interacts with a robot which can be controlled by another person behind the wall or AI. If one cannot identify who's operating the robot, can we say the robot has the intelligence to interact with people? What sorts of the brain's principles would be essential to illustrate human-likeness in such social settings? These are some questions that I'd like to explore in the future."

More information: Jungsik Hwang, Nadine Wirkuttis, Jun Tani. A neurorobotics approach to investigating the emergence of communication in robots. arXiv:1904.02858 [cs.RO]. arxiv.org/abs/1904.02858

© 2019 Science X Network