December 23, 2019 feature

A new deep learning model for EEG-based emotion recognition

Recent advances in machine learning have enabled the development of techniques to detect and recognize human emotions. Some of these techniques work by analyzing electroencephalography (EEG) signals, which are essentially recordings of the electrical activity of the brain collected from a person's scalp.

Most EEG-based emotion classification methods introduced over the past decade or so employ traditional machine learning (ML) techniques such as support vector machines (SVMs), because these models require fewer training samples and large-scale EEG datasets are still scarce. Recently, however, researchers have compiled and released several new datasets containing EEG brain recordings.

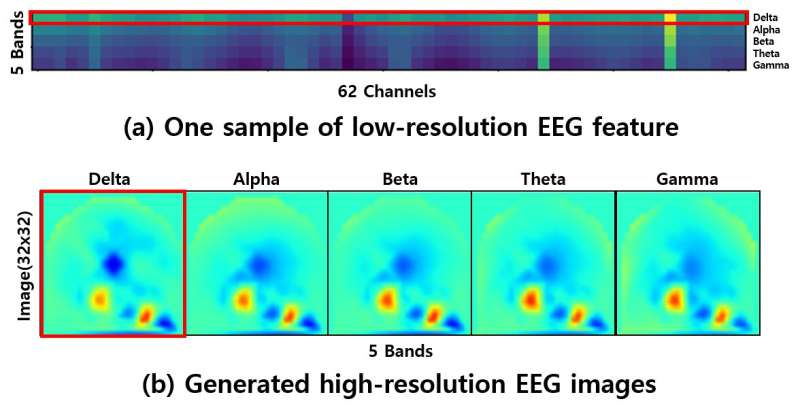

The release of these datasets opens up exciting new possibilities for EEG-based emotion recognition, as they could be used to train deep-learning models that achieve better performance than traditional ML techniques. However, the low resolution of the EEG signals in these datasets could make training deep-learning models difficult.

"Low-resolution problems remain an issue for EEG-based emotion classification," Sunhee Hwang, one of the researchers who carried out the study, told TechXplore. "We have come up with an idea to solve this problem, which involves generating high-resolution EEG images."

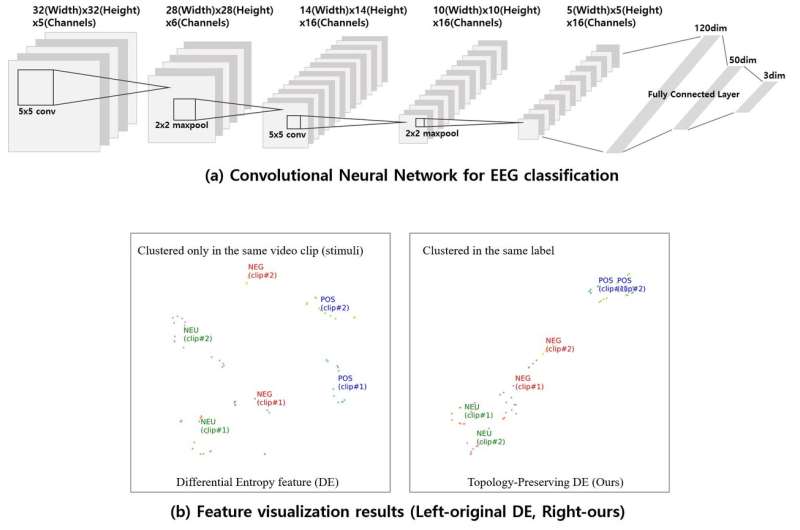

To enhance the resolution of available EEG data, Hwang and her colleagues first generated so-called "topology-preserving differential entropy features" using the coordinates of the electrodes at the time the data was collected. They then developed a convolutional neural network (CNN) and trained it on the updated data, teaching it to estimate three general classes of emotions (positive, neutral and negative).
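To make the idea of turning electrode-level features into an image more concrete, here is a minimal Python sketch. It is not the researchers' implementation, which the article does not describe in detail. It assumes that differential entropy (DE) is computed under a Gaussian assumption, where it reduces to 0.5 · ln(2πeσ²), and that per-electrode values are spread onto a 2-D grid with inverse-distance weighting. The interpolation scheme, the 32 × 32 grid size, the random electrode coordinates and the function names `differential_entropy` and `de_image` are all illustrative assumptions, and the band-pass filtering often applied before computing DE is omitted.

```python
import numpy as np


def differential_entropy(signal):
    """DE of a 1-D signal under a Gaussian assumption: 0.5 * ln(2*pi*e*var)."""
    variance = np.var(signal)
    return 0.5 * np.log(2.0 * np.pi * np.e * variance)


def de_image(de_values, coords, size=32, power=2.0, eps=1e-6):
    """Spread per-electrode DE values onto a size x size grid.

    coords: (n_electrodes, 2) array of 2-D electrode positions in [-1, 1].
    Inverse-distance weighting is an illustrative choice, not the paper's.
    """
    axis = np.linspace(-1.0, 1.0, size)
    gx, gy = np.meshgrid(axis, axis)
    grid = np.stack([gx.ravel(), gy.ravel()], axis=1)  # (size*size, 2)
    # Distance from every grid cell to every electrode.
    dist = np.linalg.norm(grid[:, None, :] - coords[None, :, :], axis=2)
    weights = 1.0 / (dist ** power + eps)
    # Each pixel is a weighted average of the electrode values.
    image = (weights @ de_values) / weights.sum(axis=1)
    return image.reshape(size, size)


# Synthetic stand-in for one 62-channel EEG segment (hypothetical data).
rng = np.random.default_rng(0)
n_channels, n_samples = 62, 200
eeg = rng.standard_normal((n_channels, n_samples))

# Hypothetical 2-D electrode positions inside the unit disc.
angles = rng.uniform(0.0, 2.0 * np.pi, n_channels)
radii = np.sqrt(rng.uniform(0.0, 1.0, n_channels))
coords = np.stack([radii * np.cos(angles), radii * np.sin(angles)], axis=1)

de_values = np.array([differential_entropy(channel) for channel in eeg])
image = de_image(de_values, coords)  # 32 x 32 array that a CNN could consume
```

Because neighbouring pixels draw on neighbouring electrodes, the resulting array keeps the spatial layout of the scalp, which is the kind of topology information a CNN can exploit. A real pipeline would use the actual electrode montage rather than random positions.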

"Prior methods tend to ignore the topology information of EEG features, but our method enhances the EEG representation by learning the generated high-resolution EEG images," Hwang said. "Our method re-clusters the EEG features via the proposed CNN, which enables the effect of clustering to achieve a better representation."

The researchers trained and evaluated their approach on the SEED dataset, which contains 62-channel EEG signals. They found that their method could classify emotions with a remarkable average accuracy of 90.41 percent, outperforming other machine-learning techniques for EEG-based emotion recognition.

"If the EEG signals are recorded from different emotional clips, the original DE features cannot be clustered," Hwang added. "We also applied our method on the task of estimating a driver's vigilance to show its off-the-shelf availability."

In the future, the method proposed by Hwang and her colleagues could inform the development of new EEG-based emotion recognition tools, as it introduces a viable solution for overcoming the issues associated with the low resolution of EEG data. The same approach could also be applied to other deep-learning models for the analysis of EEG data, even those designed for tasks other than classifying human emotions.

"For computer vision tasks, large-scale datasets enabled the huge success of deep-learning models for image classification, some of which have reached beyond human performance," Hwang said. "Also, complex data preprocessing is no longer necessary. In our future work, we hope to generate large-scale EEG datasets using a generative adversarial network (GAN)."

More information: Sunhee Hwang et al. Learning CNN features from DE features for EEG-based emotion recognition, Pattern Analysis and Applications (2019). DOI: 10.1007/s10044-019-00860-w

The researchers' work was supported by the Institute for Information & Communications Technology Promotion (IITP) grant, funded by the Korea government (No.2017-0-00451, Development of BCI based Brain and Cognitive Computing Technology for Recognizing Users Intentions using Deep Learning).

© 2019 Science X Network