Future autonomous machines may build trust through emotion

Army research has extended the state of the art in autonomy by providing a more complete picture of how actions and nonverbal signals contribute to promoting cooperation. The researchers suggested guidelines for designing autonomous machines, such as robots, self-driving cars, drones and personal assistants, that will collaborate effectively with soldiers.

Dr. Celso de Melo, computer scientist with the U.S. Army Combat Capabilities Development Command's Army Research Laboratory at CCDC ARL West in Playa Vista, California, in collaboration with Dr. Kazunori Terada from Gifu University in Japan, recently published a paper in Scientific Reports in which they show that emotion expressions can shape cooperation.

Autonomous machines that act on people's behalf are poised to become pervasive in society, de Melo said; however, for these machines to succeed and be adopted, it is essential that people are able to trust and cooperate with them.

"Human cooperation is paradoxical," de Melo said. "An individual is better off being a free rider, while everyone else cooperates; however, if everyone thought like that, cooperation would never happen. Yet, humans often cooperate. This research aims to understand the mechanisms that promote cooperation with a particular focus on the influence of strategy and signaling."
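The paradox de Melo describes is the structure of the prisoner's dilemma, the game named in the title of the paper. As a rough illustration, the sketch below uses conventional textbook payoffs (5, 3, 1 and 0), which are an assumption here and not values taken from the study, to show that defecting pays more for the individual whatever the counterpart does, even though mutual cooperation pays more than mutual defection.

```python
# Illustrative payoffs only: textbook values, not those used in the study.
# PAYOFF[(my_move, their_move)] -> my points; "C" = cooperate, "D" = defect.
PAYOFF = {
    ("C", "C"): 3,  # mutual cooperation
    ("C", "D"): 0,  # I cooperate, the counterpart free-rides
    ("D", "C"): 5,  # I free-ride on the counterpart
    ("D", "D"): 1,  # mutual defection
}


def best_reply(their_move):
    """Return the move that maximizes my payoff against a fixed counterpart move."""
    return max("CD", key=lambda my_move: PAYOFF[(my_move, their_move)])


for their_move in "CD":
    print(f"Counterpart plays {their_move}: best reply is {best_reply(their_move)}")

print(
    "Mutual cooperation pays", PAYOFF[("C", "C")],
    "each; mutual defection pays", PAYOFF[("D", "D")], "each",
)
```

Under these assumed numbers, defecting is the best reply to either move, which is the free-rider incentive, yet both players end up worse off than if they had cooperated.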

Strategy defines how individuals act in one-shot or repeated interactions. For instance, tit-for-tat is a simple strategy in which the individual acts as the counterpart acted in the previous interaction.
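Tit-for-tat can be sketched in a few lines. The version below is a minimal illustration, not the implementation used in the study: the function names are hypothetical, moves are encoded as "C" (cooperate) and "D" (defect), and the opening move is assumed to be cooperation, since the description above does not say how the first round is played.

```python
def tit_for_tat(counterpart_history):
    """Copy the counterpart's previous move.

    Opening with cooperation is an assumption; the article does not
    specify what happens before any move has been observed.
    """
    if not counterpart_history:
        return "C"
    return counterpart_history[-1]


def play(counterpart_moves):
    """Return tit-for-tat's responses to a fixed sequence of counterpart moves."""
    seen = []       # counterpart moves observed so far
    responses = []  # tit-for-tat's move in each round
    for move in counterpart_moves:
        responses.append(tit_for_tat(seen))
        seen.append(move)
    return responses


print(play(["C", "D", "D", "C"]))  # ['C', 'C', 'D', 'D']
```

Each response mirrors the counterpart's move from the round before, so a defection is answered one round later and cooperation is restored one round after the counterpart returns to it.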

Signaling refers to communication that may occur between individuals, which can be either verbal (e.g., natural language conversation) or nonverbal (e.g., emotion expressions).

This research effort, which supports the Next Generation Combat Vehicle Army Modernization Priority and the Army Priority Research Area for Autonomy, aims to apply this insight in the development of intelligent autonomous systems that promote cooperation with soldiers and successfully operate in hybrid teams to accomplish a mission.

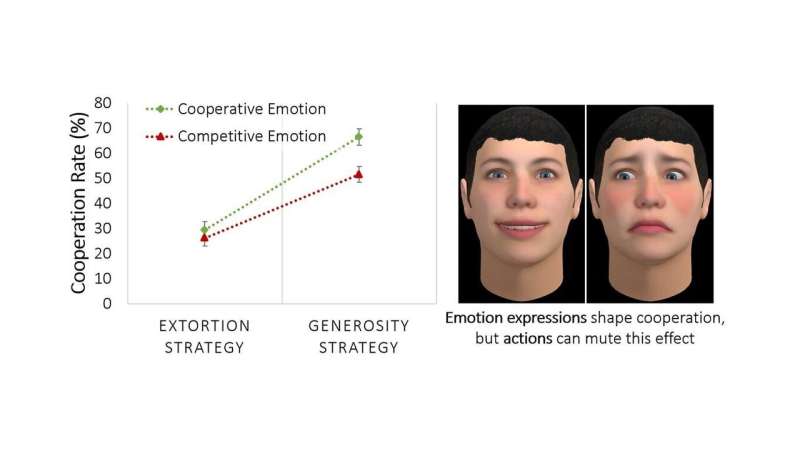

"We show that emotion expressions can shape cooperation," de Melo said. "For instance, smiling after mutual cooperation encourages more cooperation; however, smiling after exploiting others—which is the most profitable outcome for the self—hinders cooperation."

The effect of emotion expressions is moderated by strategy, he said. People will only process and be influenced by emotion expressions if the counterpart's actions are insufficient to reveal the counterpart's intentions.

For example, when the counterpart acts very competitively, people simply ignore, and even mistrust, the counterpart's emotion displays.

"Our research provides novel insight into the combined effects of strategy and emotion expressions on cooperation," de Melo said. "It has important practical application for the design of autonomous systems, suggesting that a proper combination of action and emotion displays can maximize cooperation from soldiers. Emotion expression in these systems could be implemented in a variety of ways, including via text, voice, and nonverbally through (virtual or robotic) bodies."

According to de Melo, the team is very optimistic that future soldiers will benefit from research such as this because it sheds light on the mechanisms of cooperation.

"This insight will be critical for the development of socially intelligent autonomous machines, capable of acting and communicating nonverbally with the soldier," he said. "As an Army researcher, I am excited to contribute to this research as I believe it has the potential to greatly enhance human-agent teaming in the Army of the future."

The next steps for this research include pursuing further understanding of the role of nonverbal signaling and strategy in promoting cooperation, and identifying creative ways to apply this insight to a variety of autonomous systems that have different affordances for acting and communicating with the soldier.

More information: Celso M. de Melo et al., The interplay of emotion expressions and strategy in promoting cooperation in the iterated prisoner's dilemma, Scientific Reports (2020). DOI: 10.1038/s41598-020-71919-6