From beekeepers to ocean mappers, Lobe aims to make it easy for anyone to train machine learning models

Sean Cusack has been a backyard beekeeper for 10 years and a tinkerer for longer. That's how he and an entomologist friend got talking about building an early warning system to alert hive owners to potentially catastrophic threats.

They envisioned installing a motion-sensor-activated camera at a beehive entrance and using machine learning to remotely identify when invaders like mites or wasps or potentially even the Asian giant hornet were getting in.

"A threat like that could kill your hive in a couple of hours, and it'd be game over," Cusack said. "But had you known within 10 minutes of it happening and could get out there and get involved, you could potentially rescue whole colonies."

It wasn't until Cusack heard about Lobe, an app that aims to make machine learning easier for people to use and helps them train models without writing code, that he saw a manageable way to bring the project to reality.

"I'm pretty tech savvy, but when I'd tried to do some machine learning things in the past I found it to be pretty intimidating or overwhelming to put all the pieces of the puzzle together," said Cusack, a Microsoft software engineer who normally works in enterprise web development. "Lobe immediately clicked for me."

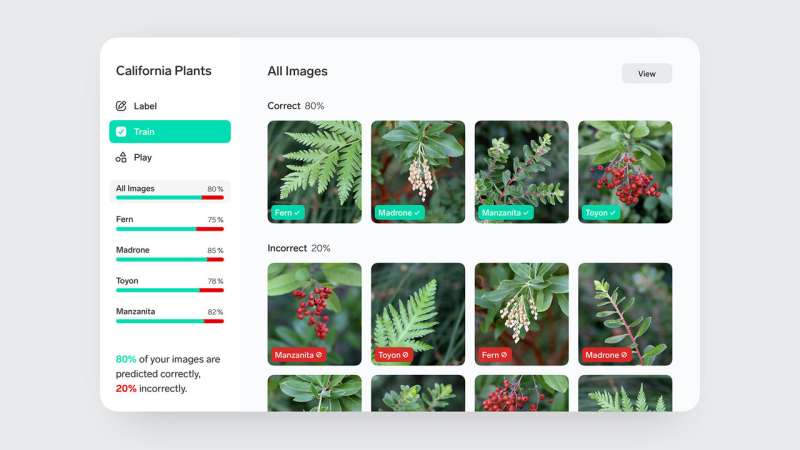

The free app, which Microsoft is making available today in public preview, helps people with no data science experience import images into Lobe and easily label them to create a machine learning dataset. Lobe automatically selects the right machine learning architecture and starts training without any setup or configuration. Users can evaluate the model's strengths and weaknesses with real-time visual results, play with the model and offer feedback to boost performance.

Today, Lobe supports image classification, and Microsoft says it plans to expand to other model and data types in the future.

Once training is done, the models can be easily exported to run on industry standard platforms and work in apps, websites or devices. That allows people to create end-to-end machine learning solutions at home or in the workplace, such as creating an alert when a resident raccoon gets into the garbage or flagging when an employee in a dangerous situation isn't wearing a helmet.

Early customers include The Nature Conservancy, which is using the Lobe app as part of a larger project to map and protect Caribbean marine resources. The nonprofit uses it to pick out which vacation photos uploaded by tourists visiting those regions relate to whale and dolphin watching.

Other customers have used Lobe to build apps that can help identify harmful plants like poison oak on a hike, or that use a camera to send an alert when they accidentally leave the garage door open or when the street parking spot in front of their house opens up.

"Lobe is taking what is a sophisticated and complex piece of technology and making it actively fun," said Bill Barnes, manager for Lobe, which Microsoft acquired and began incubating in 2018. "What we find is that it inspires people. It fills them with confidence that they can actually use machine learning. And when you have confidence you become more creative and start looking around and asking 'What other stuff can I do with this?'"

Lobe, which is available for download on Windows or Mac computers, uses open-source machine learning architectures and transfer learning to train custom machine learning models on the user's own machine. All the data is kept private, with no internet connection or logins required. Because training is automatic, people can start by simply importing images of the things they want Lobe to recognize.
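For readers curious what transfer learning looks like in practice, here is a minimal sketch of the general idea, not Lobe's actual implementation. A feature extractor stays frozen while only a small "head" is fit to the user's labeled examples. Everything in it is an assumption made for illustration: `frozen_features` is a toy stand-in for a real pretrained network, images are reduced to short lists of pixel brightness values, and the head is a simple nearest-centroid classifier.

```python
from typing import Dict, List, Sequence, Tuple

Vector = List[float]


def frozen_features(pixels: Sequence[float]) -> Vector:
    """Toy stand-in for a pretrained network's embedding (hypothetical).

    It is never updated during training, which is the point of the sketch.
    """
    mean = sum(pixels) / len(pixels)
    spread = max(pixels) - min(pixels)
    return [mean, spread]


def train_head(examples: List[Tuple[Sequence[float], str]]) -> Dict[str, Vector]:
    """Fit the only trainable part: one centroid per label in feature space."""
    sums: Dict[str, Vector] = {}
    counts: Dict[str, int] = {}
    for pixels, label in examples:
        feats = frozen_features(pixels)
        if label not in sums:
            sums[label] = [0.0] * len(feats)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], feats)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(centroids: Dict[str, Vector], pixels: Sequence[float]) -> str:
    """Return the label whose centroid is closest to the image's features."""
    feats = frozen_features(pixels)

    def squared_distance(centroid: Vector) -> float:
        return sum((a - b) ** 2 for a, b in zip(feats, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))
```

Because only the centroids are computed from the user's data, training takes a single pass over the examples. That is a rough analogy for why reusing an existing architecture can make training on a personal computer practical.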

In Cusack's beehive project, which he proved out during the latest Microsoft Hackathon, he used a motion sensor camera that took pictures of honeybees as they flew into the hive, as well as invaders like wasps, earwigs and the Asian giant hornet. Because sightings of the hornet in the wild are still rare, Cusack printed out pictures, attached them to sticks and stuck them in the beehive to mimic an invasive threat.

Lobe used these images to create a machine learning model that can distinguish among the different insects and run on a small Raspberry Pi device at the entrance of the hive to alert owners to trouble.
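The alerting step on a device like that could be sketched roughly as follows. This is an illustration under stated assumptions, not Cusack's code: the label names, the 0.8 confidence threshold and the `pick_alert` helper are all hypothetical, and the classifier is assumed to return a confidence score per label for each photo.

```python
from typing import Dict, Optional

# Hypothetical label names; a real model would use the labels assigned during training.
THREAT_LABELS = {"wasp", "earwig", "asian_giant_hornet"}


def pick_alert(scores: Dict[str, float], threshold: float = 0.8) -> Optional[str]:
    """Return the threat label to alert on, or None if no alert is warranted.

    `scores` maps each label to the model's confidence for one photo.
    """
    if not scores:
        return None
    # Take the single most confident label for this photo.
    label, confidence = max(scores.items(), key=lambda item: item[1])
    # Alert only when the top label is a known threat and the model is confident.
    if label in THREAT_LABELS and confidence >= threshold:
        return label
    return None
```

For example, `pick_alert({"honeybee": 0.05, "wasp": 0.93, "earwig": 0.02})` returns `"wasp"`, while a photo scored mostly as a honeybee returns `None`. The threshold trades missed threats against false alarms and would need tuning against real photos.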

Lobe fills a sweet spot for customers looking for a simple and quick way to get started with machine learning using their PCs or Macs without requiring any dependency on the cloud, Microsoft says. It complements Azure AI's services for customers looking to leverage cloud computing capabilities.

"We really want to empower more people to leverage machine learning and try it for the first time," said Jake Cohen, Lobe senior program manager. "We want them to be able to use it in ways that they either could not before or didn't realize they could before."

The Nature Conservancy is using Lobe to support its Mapping Ocean Wealth project, which seeks to map how and where tourism, fishing and other activities are potentially affecting important ocean resources—with the goal of helping officials in five Caribbean nations make more informed conservation and economic decisions.

The nonprofit is using Lobe to flag vacation photos depicting whale or dolphin watching activities that visitors to those countries have uploaded to a popular travel website. The photos have been stripped of all personal information but retain geographic data, which can help give decision makers a rough idea of how popular those nature-based tourism activities are in different locations.

"There are a lot of good fishing maps, there are a lot of good shipping maps and maps that show where different habitats are. But it's actually quite hard to capture spatial patterns of what tourists are doing and where and at what intensity," said Kate Longley-Wood, ocean mapping coordinator for The Nature Conservancy. "So we've found that these crowdsourced datasets can be really helpful in filling those gaps."

Before using Lobe, The Nature Conservancy had to contract with data science researchers and students to create a custom machine learning model that could identify tourists engaging with coral reefs. But Lobe has allowed the nonprofit to do that same work in house, using staff who have no programming or data science experience.

To train the model, Longley-Wood collected two sets of images and imported them into Lobe. The first set consisted of "whale and dolphin watching" vacation photos showing people who are clearly engaged in those activities. The second contained images that are "not whale or dolphin": pictures of open water, other types of boats and people snorkeling.
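A two-set dataset like this is often organized as one folder per label. The sketch below shows that common convention and is not a description of how Lobe stores data. The folder names, the file extensions and the `label_examples` helper are assumptions made for illustration.

```python
from pathlib import PurePosixPath
from typing import Iterable, List, Tuple

# Assumed image extensions; adjust to whatever formats the photos actually use.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def label_examples(paths: Iterable[str]) -> List[Tuple[str, str]]:
    """Pair each image path with the name of the folder that contains it."""
    examples = []
    for raw in paths:
        path = PurePosixPath(raw)
        # Skip anything that is not an image, such as notes or metadata files.
        if path.suffix.lower() in IMAGE_SUFFIXES:
            examples.append((raw, path.parent.name))
    return examples
```

With this layout, a photo saved under a hypothetical `photos/whale_dolphin_watching/` folder gets the label `whale_dolphin_watching`, and one under `photos/not_whale_or_dolphin/` gets the other.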

One advantage of Lobe is that it's very easy to see where the model is getting things wrong and quickly improve its accuracy, Longley-Wood said. If the model gets confused and incorrectly labels a picture of a person swimming next to a boat as a whale watching photo, you can correct it with the click of a button.
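Outside of a visual tool, the same review step amounts to comparing each prediction with its expected label and pulling out the mismatches. The sketch below assumes that framing. The `Result` record and both helpers are hypothetical names, not part of Lobe.

```python
from typing import List, NamedTuple


class Result(NamedTuple):
    image: str      # file name of the photo
    expected: str   # label a person assigned
    predicted: str  # label the model produced


def find_mistakes(results: List[Result]) -> List[Result]:
    """Return the photos whose predicted label differs from the expected one."""
    return [r for r in results if r.expected != r.predicted]


def accuracy(results: List[Result]) -> float:
    """Fraction of photos labeled correctly; 0.0 for an empty list."""
    if not results:
        return 0.0
    return sum(r.expected == r.predicted for r in results) / len(results)
```

A photo of a swimmer next to a boat that the model calls whale watching would show up in `find_mistakes`, where it could be relabeled and added back to the training set.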

Another early customer, Chris Cachor, is a software engineer for Sincro, an Ansira company focused on automotive marketing. He helps local car dealerships get the best performance out of social media ads.

People are less likely to engage with ads featuring stock images of a car model for sale, as opposed to an authentic photo of the car as it appears on the lot, Cachor said. Yet scripts designed to flag generic car photos haven't always been able to keep up with increasingly sophisticated computer-generated imagery, he said.

Cachor said he'd thought about using machine learning to automate that task, but the tools he had run across seemed too cumbersome and time-consuming to learn. With Lobe, he was able to import and label examples of stock, computer-generated and authentic car images. Within minutes, he had his first version of a computer vision model to weed out photos that are less likely to perform well in ads.

"It was so cool to see results right away without it becoming a weekend-long academic project," Cachor said. "It kind of took you from zero to 60 really quick."